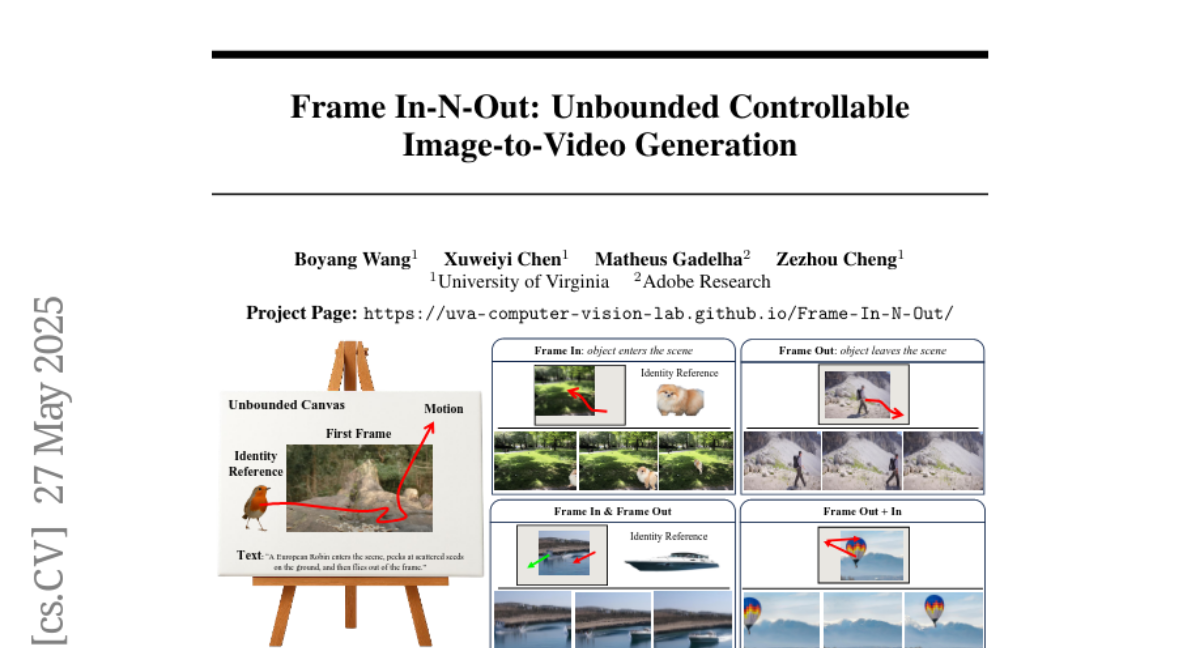

Frame In-N-Out: Unbounded Controllable Image-to-Video Generation

Boyang Wang, Xuweiyi Chen, Matheus Gadelha, Zezhou Cheng

2025-05-28

Summary

This paper talks about a new method called Frame In-N-Out that helps AI turn images into videos where you can control what happens, keep everything looking smooth, and make sure the details stay sharp.

What's the problem?

The problem is that when AI tries to make videos from images, it often struggles to let users control the video, keep the action flowing smoothly from one frame to the next, and make sure all the small details look good throughout the video.

What's the solution?

The researchers created a special type of AI called a Diffusion Transformer that uses a technique called Frame In and Frame Out. This lets the model generate videos that are easy to control, look consistent over time, and have high-quality details.

Why it matters?

This matters because it makes it possible to create better and more creative videos from just a single image, which is useful for animation, movies, and any project where you want to turn pictures into videos that look great and do exactly what you want.

Abstract

An efficient Diffusion Transformer architecture addresses controllability, temporal coherence, and detail synthesis in video generation using the Frame In and Frame Out technique.