Framer: Interactive Frame Interpolation

Wen Wang, Qiuyu Wang, Kecheng Zheng, Hao Ouyang, Zhekai Chen, Biao Gong, Hao Chen, Yujun Shen, Chunhua Shen

2024-10-25

Summary

This paper presents Framer, an interactive frame interpolation tool that generates smooth transitions between two images and lets users shape each transition by specifying how selected points move.

What's the problem?

Creating smooth transitions between images is challenging because there are many plausible ways to transform one image into another. This ambiguity can produce unexpected intermediate frames, especially when objects in the two images differ in shape or style. Users typically have little control over how the transformation unfolds, which can lead to unsatisfactory results.

What's the solution?

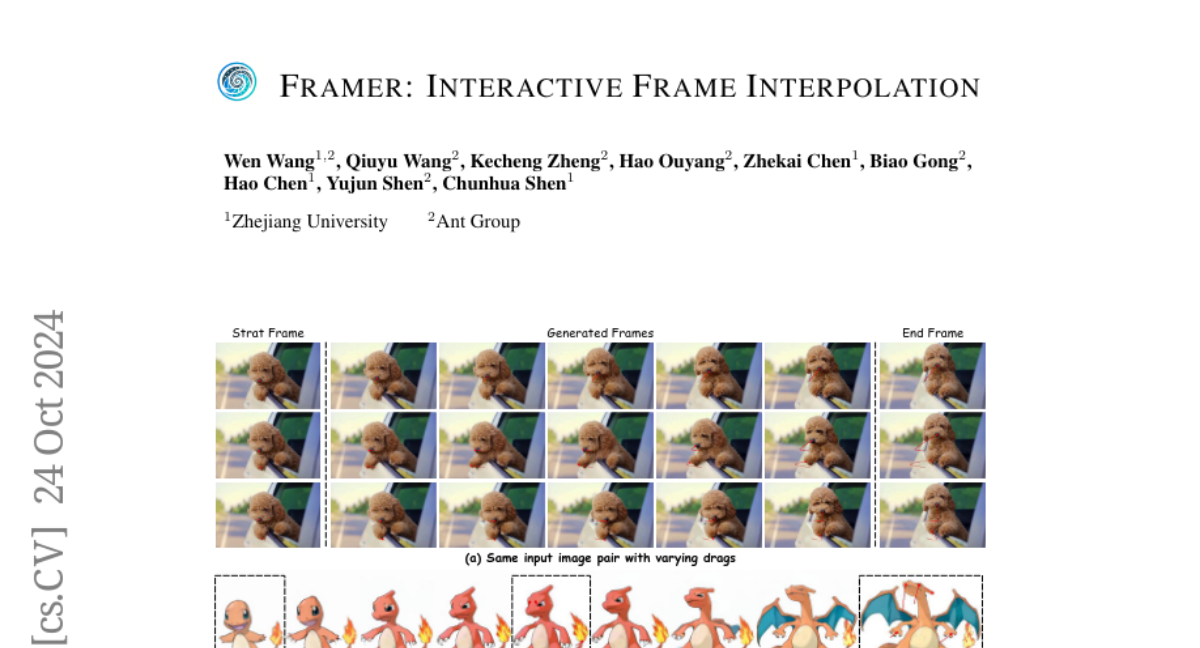

Framer allows users to select key points on the images and customize the paths these points take during the transition. This gives users more control over how specific parts of the images move and change. Additionally, Framer has an 'autopilot' mode that automatically estimates these key points and refines their paths, making it easier for users to achieve good results without needing to do all the work themselves.
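The summary does not say how a user-drawn path is turned into per-frame guidance. As a minimal sketch, assuming a trajectory is given as a polyline of control points in pixel coordinates (running from the keypoint's location in the start image to its location in the end image) and that the interpolator needs one position per output frame, the path can be resampled at evenly spaced arc lengths. The function name `sample_trajectory` and the constant-speed assumption are hypothetical and are not Framer's actual interface.

```python
from math import hypot

Point = tuple[float, float]


def sample_trajectory(control_points: list[Point], num_frames: int) -> list[Point]:
    """Hypothetical helper: resample a user-drawn polyline into num_frames
    positions spaced evenly by arc length (constant-speed motion).

    Frame 0 is the start-image location; frame num_frames - 1 is the
    end-image location.
    """
    if len(control_points) < 2:
        raise ValueError("need at least a start point and an end point")
    if num_frames < 2:
        raise ValueError("need at least two frames")

    # Cumulative arc length at each control point.
    cum = [0.0]
    for (x0, y0), (x1, y1) in zip(control_points, control_points[1:]):
        cum.append(cum[-1] + hypot(x1 - x0, y1 - y0))
    total = cum[-1]

    samples: list[Point] = []
    seg = 0
    for i in range(num_frames):
        # Arc length this frame should reach along the path.
        target = total * i / (num_frames - 1)
        # Advance to the segment that contains the target arc length.
        while seg < len(control_points) - 2 and cum[seg + 1] < target:
            seg += 1
        seg_len = cum[seg + 1] - cum[seg]
        # Fraction of the way along the current segment (0 for degenerate segments).
        t = 0.0 if seg_len == 0 else (target - cum[seg]) / seg_len
        (x0, y0), (x1, y1) = control_points[seg], control_points[seg + 1]
        samples.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return samples


if __name__ == "__main__":
    # An L-shaped path sampled at 5 frames.
    print(sample_trajectory([(0.0, 0.0), (10.0, 0.0), (10.0, 10.0)], 5))
```

Constant speed is only one choice. Remapping `target` through an easing curve would give slow-in/slow-out motion along the same path.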

Why does it matter?

This research matters because it gives artists and designers a practical way to produce high-quality animations and transitions between images. By combining user interaction with an automatic mode, Framer is useful for animation, video editing, and graphic design, with demonstrated applications including image morphing, time-lapse video generation, and cartoon interpolation.

Abstract

We propose Framer for interactive frame interpolation, which targets producing smoothly transitioning frames between two images according to the user's creative intent. Concretely, besides taking the start and end frames as inputs, our approach supports customizing the transition process by tailoring the trajectories of selected keypoints. Such a design enjoys two clear benefits. First, incorporating human interaction mitigates the ambiguity arising from the numerous possible ways of transforming one image into another, and in turn enables finer control of local motions. Second, as the most basic form of interaction, keypoints help establish correspondence across frames, enabling the model to handle challenging cases (e.g., objects in the start and end frames that differ in shape and style). Notably, our system also offers an "autopilot" mode, where we introduce a module that estimates the keypoints and refines the trajectories automatically, simplifying use in practice. Extensive experimental results demonstrate the appealing performance of Framer on various applications, such as image morphing, time-lapse video generation, and cartoon interpolation. The code, the model, and the interface will be released to facilitate further research.
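The abstract does not describe how the autopilot module estimates keypoints or refines trajectories, so the following does not reproduce it. To illustrate the general idea of establishing keypoint correspondence between the start and end frames, here is a minimal sketch that swaps in a standard technique: mutual nearest-neighbor matching of local feature descriptors, followed by a default straight-line trajectory for each matched pair. The descriptors are assumed to come from some external feature extractor, and the helper names `mutual_nearest_matches` and `linear_trajectories` are hypothetical.

```python
from math import dist

Point = tuple[float, float]


def mutual_nearest_matches(desc_a: list[list[float]],
                           desc_b: list[list[float]]) -> list[tuple[int, int]]:
    """Hypothetical helper: return index pairs (i, j) such that desc_b[j] is
    the nearest descriptor to desc_a[i] AND desc_a[i] is the nearest to
    desc_b[j], using Euclidean distance."""
    if not desc_a or not desc_b:
        return []
    # Nearest neighbor in B for every descriptor in A, and vice versa.
    nn_ab = [min(range(len(desc_b)), key=lambda j: dist(a, desc_b[j])) for a in desc_a]
    nn_ba = [min(range(len(desc_a)), key=lambda i: dist(b, desc_a[i])) for b in desc_b]
    # Keep only pairs that agree in both directions.
    return [(i, j) for i, j in enumerate(nn_ab) if nn_ba[j] == i]


def linear_trajectories(kps_a: list[Point], kps_b: list[Point],
                        matches: list[tuple[int, int]],
                        num_frames: int) -> list[list[Point]]:
    """Hypothetical default path for each matched pair: a straight line from
    the start-frame location to the end-frame location, sampled at
    num_frames evenly spaced time steps. A refinement step (not shown)
    would adjust these paths."""
    if num_frames < 2:
        raise ValueError("need at least two frames")
    trajs = []
    for i, j in matches:
        (xa, ya), (xb, yb) = kps_a[i], kps_b[j]
        trajs.append([
            (xa + (xb - xa) * k / (num_frames - 1),
             ya + (yb - ya) * k / (num_frames - 1))
            for k in range(num_frames)
        ])
    return trajs


if __name__ == "__main__":
    m = mutual_nearest_matches([[1.0, 0.0], [0.0, 1.0], [3.0, 0.5]],
                               [[0.0, 1.1], [1.1, 0.0]])
    print(m)
    print(linear_trajectories([(0.0, 0.0), (10.0, 10.0), (50.0, 50.0)],
                              [(20.0, 0.0), (4.0, 8.0)], m, 3))
```

The mutual check discards one-directional matches (in the example, the third start-frame descriptor prefers a point that is already claimed by a closer one), which is a cheap way to reduce false correspondences before any trajectory refinement.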