Free-form language-based robotic reasoning and grasping

Runyu Jiao, Alice Fasoli, Francesco Giuliari, Matteo Bortolon, Sergio Povoli, Guofeng Mei, Yiming Wang, Fabio Poiesi

2025-03-18

Summary

This paper explores how to make robots better at grabbing objects from messy piles based on what people tell them to do.

What's the problem?

It's hard for robots to understand both what people want them to grab and how objects are arranged in a messy pile. They need to understand language and how objects relate to each other in space.

What's the solution?

The researchers created a new method called FreeGrasp that uses pre-trained vision-language models (such as GPT-4o) to interpret instructions and object arrangements. It detects every object as a keypoint and draws marks at those keypoints on the image, which helps the model reason about the scene. This allows the robot to figure out whether it can grab the requested object directly or whether it needs to remove other objects first.

Why does it matter?

This work matters because it brings robots closer to being able to perform complex tasks in unstructured environments, based on simple human instructions. This could have many applications, like helping people with household chores or working in warehouses.

Abstract

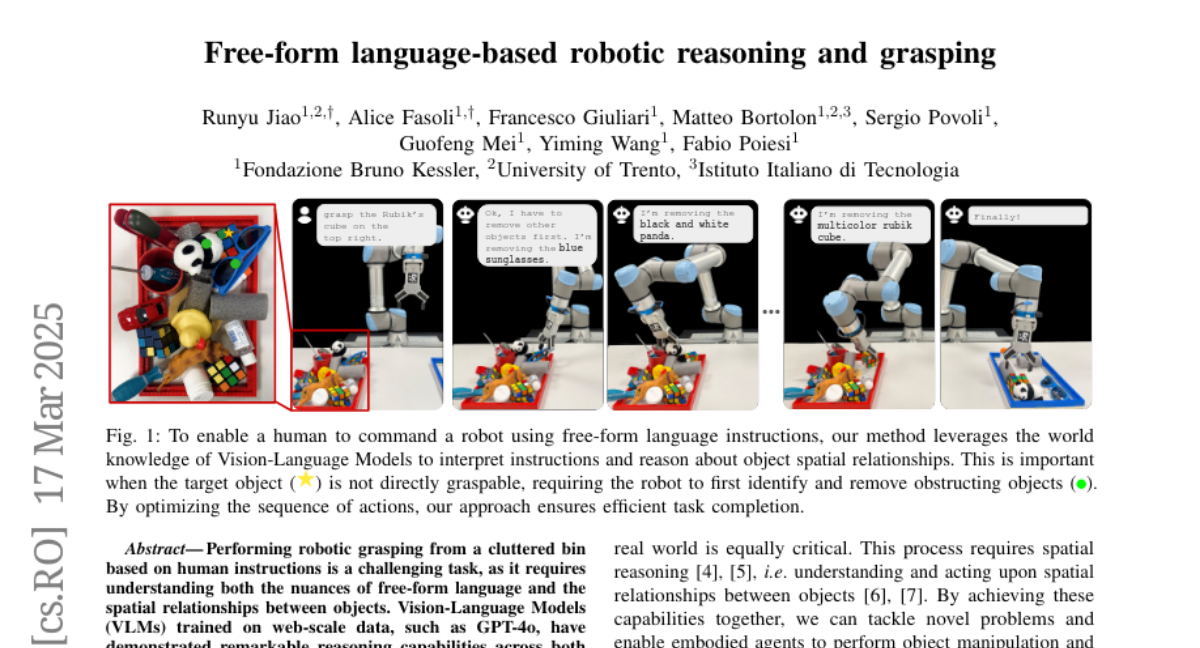

Performing robotic grasping from a cluttered bin based on human instructions is a challenging task, as it requires understanding both the nuances of free-form language and the spatial relationships between objects. Vision-Language Models (VLMs) trained on web-scale data, such as GPT-4o, have demonstrated remarkable reasoning capabilities across both text and images. But can they truly be used for this task in a zero-shot setting? And what are their limitations? In this paper, we explore these research questions via the free-form language-based robotic grasping task, and propose a novel method, FreeGrasp, leveraging the pre-trained VLMs' world knowledge to reason about human instructions and object spatial arrangements. Our method detects all objects as keypoints and uses these keypoints to annotate marks on images, aiming to facilitate GPT-4o's zero-shot spatial reasoning. This allows our method to determine whether a requested object is directly graspable or if other objects must be grasped and removed first. Since no existing dataset is specifically designed for this task, we introduce a synthetic dataset FreeGraspData by extending the MetaGraspNetV2 dataset with human-annotated instructions and ground-truth grasping sequences. We conduct extensive analyses with both FreeGraspData and real-world validation with a gripper-equipped robotic arm, demonstrating state-of-the-art performance in grasp reasoning and execution. Project website: https://tev-fbk.github.io/FreeGrasp/.
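The decision described in the abstract, whether the requested object is directly graspable or whether other objects must be removed first, can be illustrated with a small sketch. This is not the FreeGrasp implementation: in the paper that reasoning is delegated to GPT-4o operating on keypoint-marked images. Here an explicit occlusion map stands in for the model's output, and the function name `grasp_sequence`, the `occluders` dictionary, and the object names are all hypothetical, introduced only for illustration.

```python
from typing import Dict, List, Set


def grasp_sequence(target: str, occluders: Dict[str, Set[str]]) -> List[str]:
    """Return an order of grasps that ends with `target`.

    `occluders[x]` is the set of objects resting on top of `x`.
    This map is an assumed input; FreeGrasp obtains the equivalent
    information by prompting a VLM, not from an explicit graph.
    """
    order: List[str] = []
    visiting: Set[str] = set()  # objects on the current recursion path
    done: Set[str] = set()      # objects already scheduled for grasping

    def visit(obj: str) -> None:
        if obj in done:
            return
        if obj in visiting:
            raise ValueError(f"cyclic occlusion involving {obj!r}")
        visiting.add(obj)
        # Everything lying on top of `obj` has to be removed before it.
        for blocker in sorted(occluders.get(obj, set())):
            visit(blocker)
        visiting.discard(obj)
        done.add(obj)
        order.append(obj)

    visit(target)
    return order


# Hypothetical scene: a banana lies on a box, which lies on a mug.
scene = {"mug": {"box"}, "box": {"banana"}, "banana": set()}
print(grasp_sequence("mug", scene))     # ['banana', 'box', 'mug']
print(grasp_sequence("banana", scene))  # ['banana']
```

A returned list of length one means the target is directly graspable; a longer list gives the objects to remove first, with the target last. A depth-first traversal is used because each blocker may itself be blocked, so obstructions have to be resolved from the top of the pile down.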