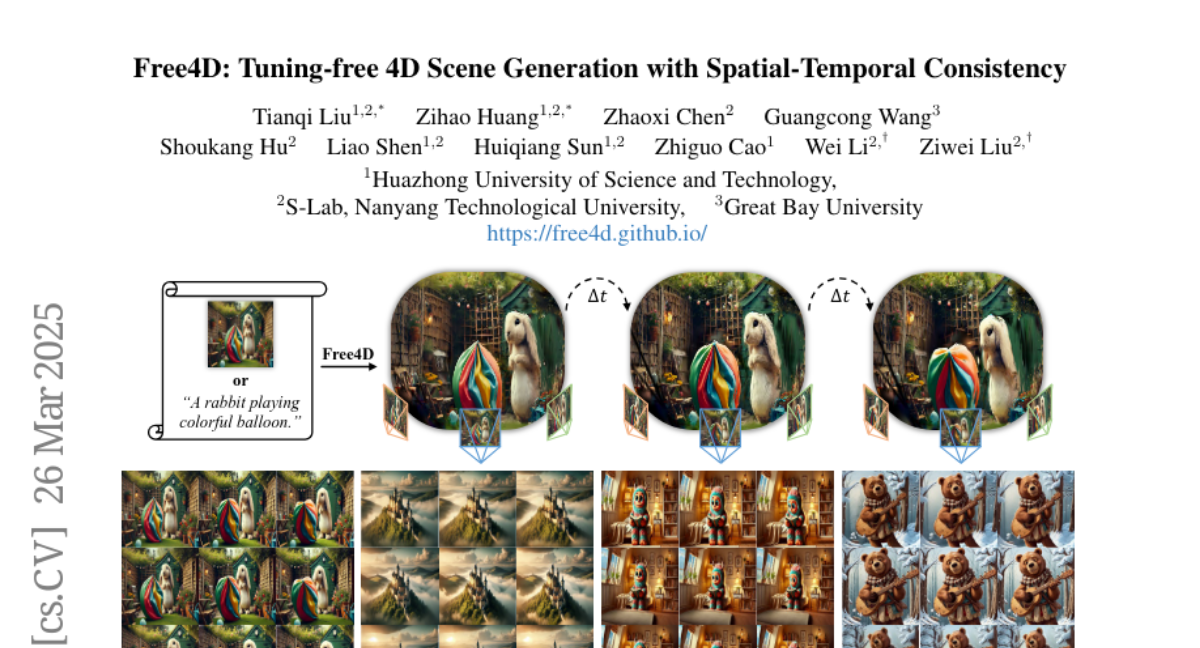

Free4D: Tuning-free 4D Scene Generation with Spatial-Temporal Consistency

Tianqi Liu, Zihao Huang, Zhaoxi Chen, Guangcong Wang, Shoukang Hu, Liao Shen, Huiqiang Sun, Zhiguo Cao, Wei Li, Ziwei Liu

2025-03-31

Summary

This paper presents Free4D, a method for generating 4D scenes (3D scenes that change over time) from a single image, without training or fine-tuning any model.

What's the problem?

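Existing methods either handle only individual objects rather than whole scenes, or require expensive training on large multi-view video datasets. Because 4D scene data is scarce, the trained models generalize poorly, and keeping the generated scene consistent across viewpoints and over time remains difficult.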

What's the solution?

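The researchers combine pre-trained foundation models in three steps. First, an image-to-video diffusion model animates the input image, and a coarse 4D geometric structure is initialized from the result. Second, guided denoising turns that coarse structure into multi-view videos that are consistent across viewpoints and over time. Third, a refinement stage merges those videos into a single consistent 4D representation.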

Why does it matter?

The resulting 4D representation supports real-time, controllable rendering, which makes it easier to create realistic, dynamic 3D environments from a single image.

Abstract

We present Free4D, a novel tuning-free framework for 4D scene generation from a single image. Existing methods either focus on object-level generation, making scene-level generation infeasible, or rely on large-scale multi-view video datasets for expensive training, with limited generalization ability due to the scarcity of 4D scene data. In contrast, our key insight is to distill pre-trained foundation models for consistent 4D scene representation, which offers promising advantages such as efficiency and generalizability. 1) To achieve this, we first animate the input image using image-to-video diffusion models followed by 4D geometric structure initialization. 2) To turn this coarse structure into spatial-temporal consistent multiview videos, we design an adaptive guidance mechanism with a point-guided denoising strategy for spatial consistency and a novel latent replacement strategy for temporal coherence. 3) To lift these generated observations into consistent 4D representation, we propose a modulation-based refinement to mitigate inconsistencies while fully leveraging the generated information. The resulting 4D representation enables real-time, controllable rendering, marking a significant advancement in single-image-based 4D scene generation.