FreshStack: Building Realistic Benchmarks for Evaluating Retrieval on Technical Documents

Nandan Thakur, Jimmy Lin, Sam Havens, Michael Carbin, Omar Khattab, Andrew Drozdov

2025-04-17

Summary

This paper introduces FreshStack, a framework for building realistic benchmarks that test how well retrieval systems find the right information in technical documents, using real questions and answers from online communities.

What's the problem?

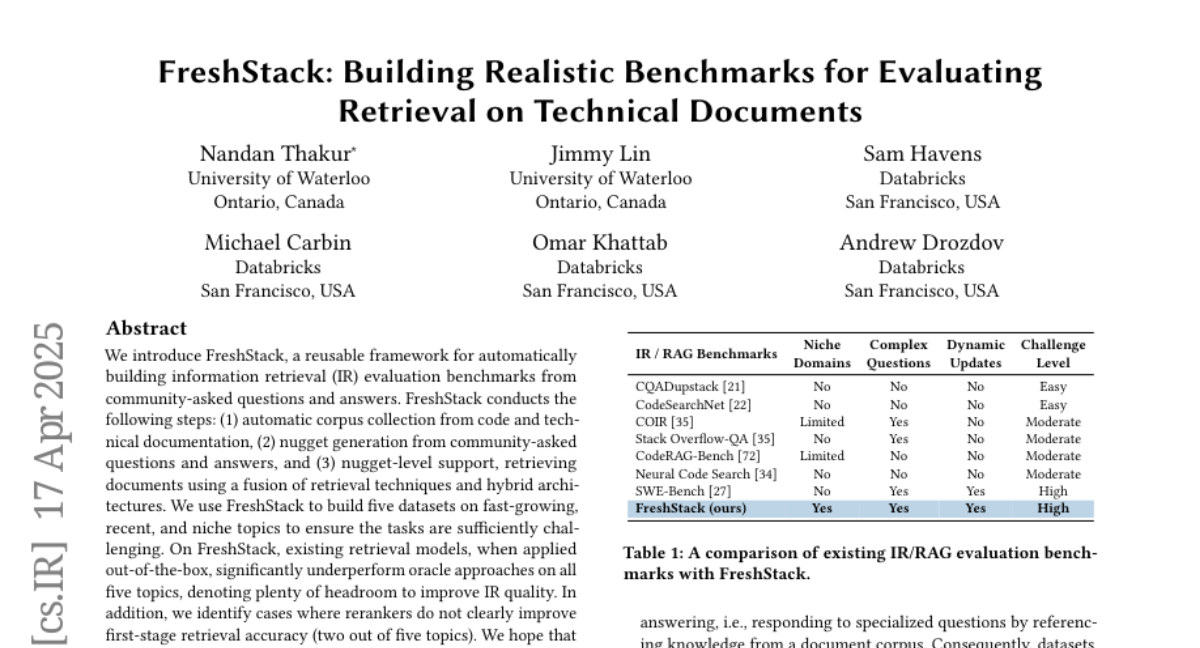

Most existing benchmarks for testing information retrieval systems are neither realistic nor up to date, so they don't show how well these systems work in real-world situations, especially on technical topics like programming or engineering.

What's the solution?

The researchers developed the FreshStack framework, which builds new and more realistic benchmarks by collecting actual questions and answers from community forums. This helps them see where current retrieval models do well and where they struggle, especially with the techniques used to re-rank search results.
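To make the comparison between a first-stage retriever and a reranker concrete, here is a minimal sketch of how such an evaluation could be scored. It is not the FreshStack pipeline: the question IDs, document IDs, rankings, and the choice of recall@k as the metric are all hypothetical, chosen only to illustrate how a benchmark built from community questions can show where reranking helps and where it hurts.

```python
from typing import Dict, List, Set


def recall_at_k(ranked: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / len(relevant)


def mean_recall_at_k(
    runs: Dict[str, List[str]], qrels: Dict[str, Set[str]], k: int
) -> float:
    """Average recall@k over every question that has relevance judgments."""
    scores = [recall_at_k(runs[qid], qrels[qid], k) for qid in qrels]
    return sum(scores) / len(scores)


# Hypothetical relevance judgments: question ID -> documents that answer it.
qrels = {"q1": {"d1", "d4"}, "q2": {"d2"}}

# Hypothetical rankings from a first-stage retriever and from a reranker
# applied to the same candidate lists.
first_stage = {"q1": ["d3", "d1", "d5", "d4"], "q2": ["d2", "d6", "d7", "d8"]}
reranked = {"q1": ["d1", "d4", "d3", "d5"], "q2": ["d6", "d7", "d2", "d8"]}

first_stage_score = mean_recall_at_k(first_stage, qrels, k=2)
reranked_score = mean_recall_at_k(reranked, qrels, k=2)

print(f"first stage recall@2: {first_stage_score:.2f}")
print(f"reranked recall@2:    {reranked_score:.2f}")
```

In this toy data the reranker improves the first question but pushes the only relevant document for the second question out of the top two, so the average drops. Scoring each question separately, rather than only the mean, is what makes that kind of failure visible.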

Why does it matter?

This matters because it helps developers and researchers build better systems for finding information in technical documents, which is important for students, engineers, and anyone who needs accurate answers quickly while working on complex problems.

Abstract

The FreshStack framework builds information retrieval (IR) evaluation benchmarks from community Q&A, demonstrating significant opportunities for improving retrieval models and identifying limitations of reranking techniques.