From Reflection to Perfection: Scaling Inference-Time Optimization for Text-to-Image Diffusion Models via Reflection Tuning

Le Zhuo, Liangbing Zhao, Sayak Paul, Yue Liao, Renrui Zhang, Yi Xin, Peng Gao, Mohamed Elhoseiny, Hongsheng Li

2025-04-23

Summary

This paper introduces ReflectionFlow, a technique that improves text-to-image diffusion models by letting them keep refining an image at inference time, rather than generating it in one go.

What's the problem?

Diffusion models are already good at turning text into images, but the first image they create isn't always right, and it's hard for the model to notice and fix its own mistakes during the process.

What's the solution?

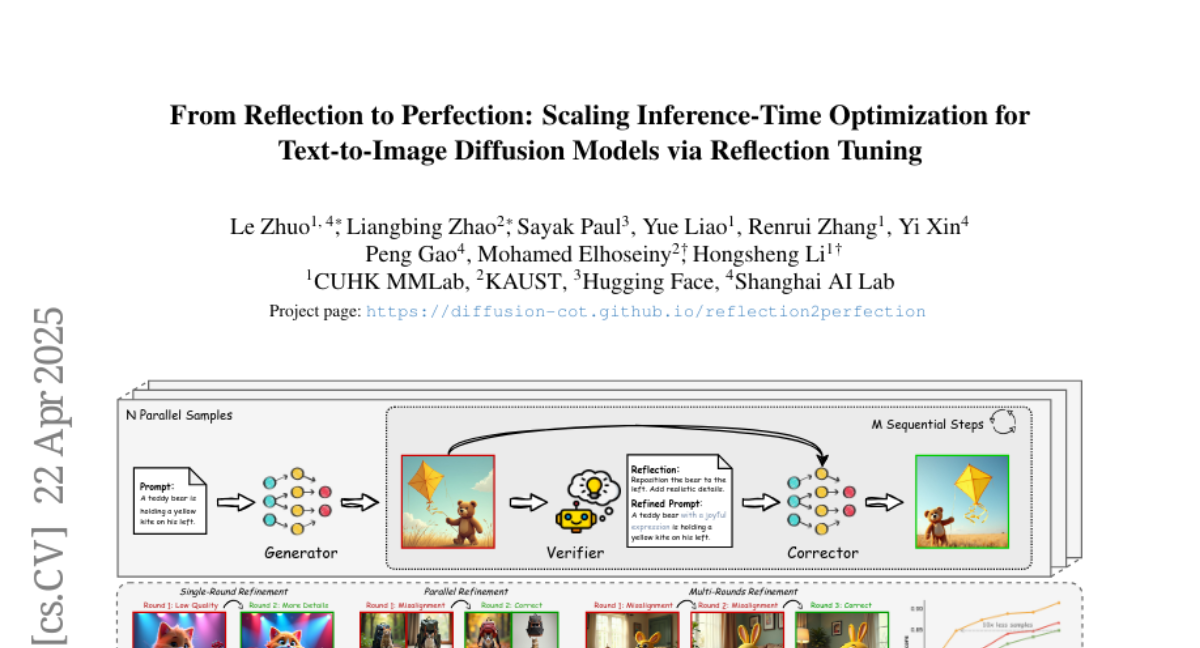

To address this, the researchers developed ReflectionFlow, which lets the model repeatedly reflect on and refine its output during the image generation process. It scales inference-time effort along three axes: the noise level, the prompt level, and the reflection level (the model's feedback on its own previous attempts). Together these help the model produce higher-quality images that match the prompt more accurately.
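The reflect-and-refine idea described above can be illustrated with a toy loop. This is only a sketch under stated assumptions, not the paper's implementation: the "image" is a set of words, and `toy_generate`, `toy_score`, `toy_reflect`, and `refine` are hypothetical stand-ins for a diffusion model, a quality verifier, and a reflection generator. Drawing several candidates per round loosely mimics trying different noise seeds, and the feedback string loosely mimics a reflection; both mappings are assumptions made for illustration.

```python
import random
from typing import List, Optional, Set, Tuple

Image = Set[str]  # toy stand-in: the set of prompt words the "image" depicts


def prompt_words(prompt: str) -> List[str]:
    """Unique words of the prompt, sorted so the behavior is deterministic."""
    return sorted(set(prompt.split()))


def toy_generate(prompt: str, previous: Optional[Image],
                 feedback: Optional[str], rng: random.Random) -> Image:
    """Hypothetical generator (assumes the prompt has at least 3 unique words)."""
    if previous is None or feedback is None:
        # No earlier attempt to refine: "draw" a random half-complete image.
        return set(rng.sample(prompt_words(prompt), 3))
    # Refine the previous attempt by fixing the one issue named in the feedback.
    return previous | {feedback}


def toy_score(prompt: str, image: Image) -> float:
    """Hypothetical verifier: fraction of prompt words the image covers."""
    words = prompt_words(prompt)
    return sum(w in image for w in words) / len(words)


def toy_reflect(prompt: str, image: Image) -> Optional[str]:
    """Hypothetical reflection: name one missing word, or None if complete."""
    missing = [w for w in prompt_words(prompt) if w not in image]
    return missing[0] if missing else None


def refine(prompt: str, rounds: int = 4, samples_per_round: int = 2,
           seed: int = 0) -> Tuple[Image, float]:
    """Generate, score, reflect, and regenerate for a fixed number of rounds."""
    rng = random.Random(seed)
    best: Optional[Image] = None
    best_score = float("-inf")
    feedback: Optional[str] = None
    for _ in range(rounds):
        for _ in range(samples_per_round):
            candidate = toy_generate(prompt, best, feedback, rng)
            score = toy_score(prompt, candidate)
            if score > best_score:  # keep the best attempt seen so far
                best, best_score = candidate, score
        feedback = toy_reflect(prompt, best)
        if feedback is None:  # nothing left to fix
            break
    return best, best_score


if __name__ == "__main__":
    image, score = refine("a red cube on a blue sphere")
    print(sorted(image), score)
```

In this toy setting each round of feedback repairs one missing element, so the score can only rise as more rounds are allowed; a real system would replace each stand-in with a learned model and has no such guarantee.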

Why does it matter?

This is important because it means AI can create images from text that are not only more detailed and accurate, but also closer to what people actually want, making these tools more useful and reliable for creative and practical applications.

Abstract

ReflectionFlow enhances text-to-image diffusion models by enabling iterative refinement during inference, using noise-level, prompt-level, and reflection-level scaling to improve quality.