From Virtual Games to Real-World Play

Wenqiang Sun, Fangyun Wei, Jinjing Zhao, Xi Chen, Zilong Chen, Hongyang Zhang, Jun Zhang, Yan Lu

2025-06-24

Summary

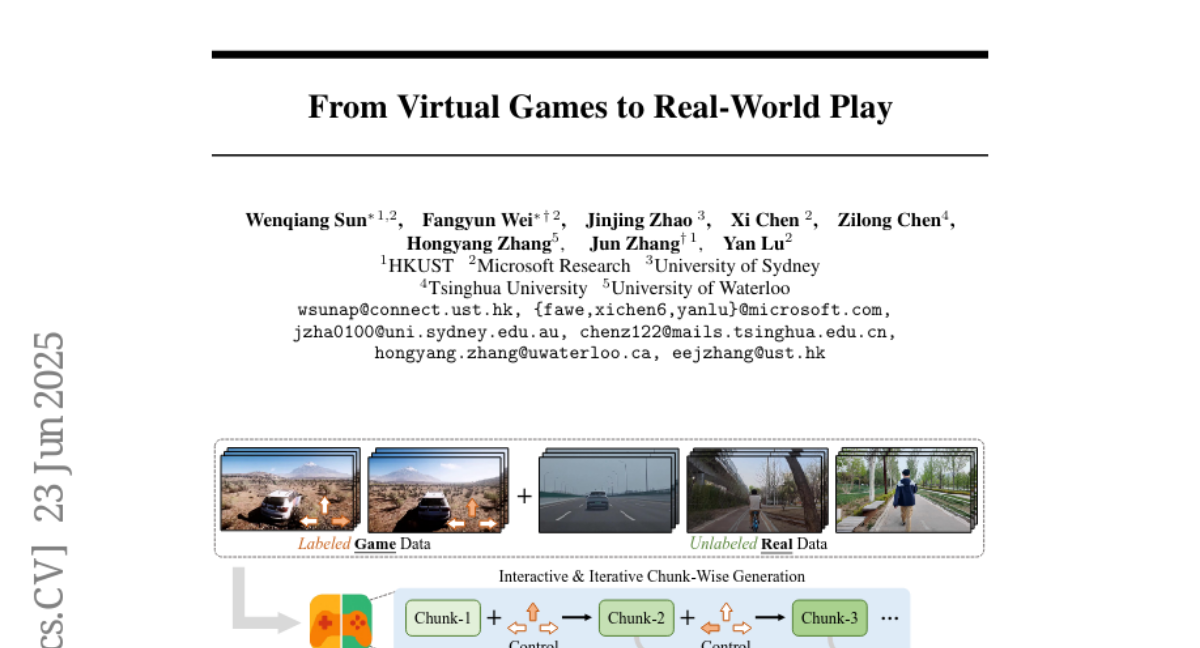

This paper introduces RealPlay, a system that generates realistic, smooth videos from simple user controls. It makes virtual games feel more like real-world play by keeping motion consistent from frame to frame.

What's the problem?

Generating video that looks real, stays consistent over time, and follows user commands is very challenging, especially when the same controls must work for different real-world objects or characters.

What's the solution?

The researchers developed RealPlay, which predicts video iteratively: each new step is generated from what came before, which keeps the output realistic and coherent. The approach also works across many types of real-world entities.
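To make the idea of iterative prediction concrete, here is a minimal sketch of a control-conditioned rollout loop. It is not RealPlay's implementation: `toy_predictor`, `rollout`, and `context_len` are hypothetical names, frames are reduced to short lists of numbers, and the "model" is a trivial function standing in for a learned video generator. The only point it illustrates is that each output is fed back in as context for the next step.

```python
from typing import Callable, List, Sequence

Frame = List[float]  # toy stand-in for an image: a flat list of pixel values


def toy_predictor(context: Sequence[Frame], control: float) -> Frame:
    """Hypothetical stand-in for a learned video model (an assumption).

    Shifts every value of the most recent frame by the control signal.
    """
    last = context[-1]
    return [value + control for value in last]


def rollout(
    first_frame: Frame,
    controls: Sequence[float],
    predict: Callable[[Sequence[Frame], float], Frame] = toy_predictor,
    context_len: int = 4,
) -> List[Frame]:
    """Generate one frame per control signal, feeding outputs back as context."""
    frames: List[Frame] = [list(first_frame)]
    for control in controls:
        context = frames[-context_len:]  # condition only on recent frames
        frames.append(predict(context, control))
    return frames


# Three control signals produce three new frames after the initial one.
video = rollout([0.0, 0.0], controls=[1.0, 1.0, -0.5])
```

Because every step depends on earlier outputs, small errors can accumulate over a long rollout, which is one reason temporal consistency is hard to maintain in this setting.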

Why does it matter?

This matters because it can improve virtual experiences like gaming, training simulations, and digital storytelling by making them more lifelike and responsive to user actions.

Abstract

RealPlay generates photorealistic, temporally consistent video sequences from user control signals through iterative prediction and generalizes to various real-world entities.