FrozenSeg: Harmonizing Frozen Foundation Models for Open-Vocabulary Segmentation

Xi Chen, Haosen Yang, Sheng Jin, Xiatian Zhu, Hongxun Yao

2024-09-06

Summary

This paper talks about FrozenSeg, a new method for segmenting images that allows for recognizing objects across many categories without needing to retrain models for each specific category.

What's the problem?

Segmenting images into different objects is challenging, especially when the objects belong to categories that the model has not seen before. Existing models struggle to create accurate outlines (masks) for these unknown categories, which leads to poor performance in identifying and separating objects in images.

What's the solution?

FrozenSeg combines two types of models: one that understands spatial information (like where things are in an image) and another that understands the meaning of what is in the image. Instead of retraining the entire model, FrozenSeg keeps the foundational models unchanged (or 'frozen') and focuses on optimizing a smaller part of the model that generates masks. This approach allows it to create better mask proposals for unseen categories by using both visual and semantic information effectively.

Why it matters?

This research is important because it improves how we can analyze and understand images with many different objects, especially in real-world situations where we encounter new categories frequently. By enhancing segmentation performance, FrozenSeg can be applied in various fields such as robotics, autonomous vehicles, and medical imaging, where accurately recognizing and separating objects is crucial.

Abstract

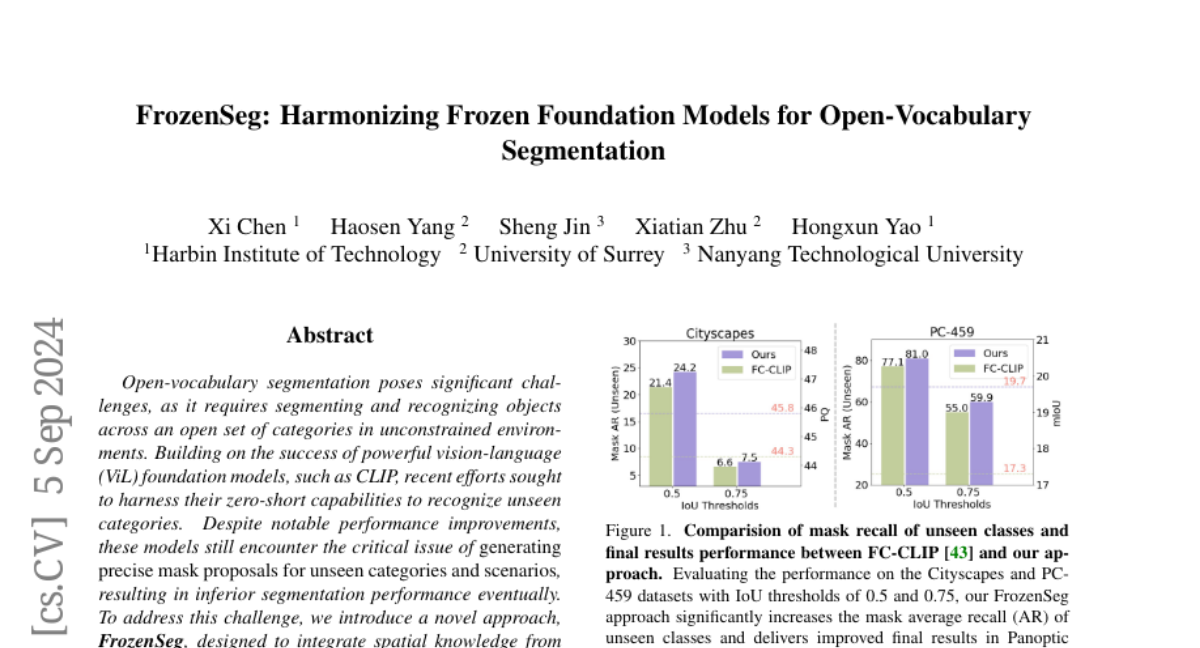

Open-vocabulary segmentation poses significant challenges, as it requires segmenting and recognizing objects across an open set of categories in unconstrained environments. Building on the success of powerful vision-language (ViL) foundation models, such as CLIP, recent efforts sought to harness their zero-short capabilities to recognize unseen categories. Despite notable performance improvements, these models still encounter the critical issue of generating precise mask proposals for unseen categories and scenarios, resulting in inferior segmentation performance eventually. To address this challenge, we introduce a novel approach, FrozenSeg, designed to integrate spatial knowledge from a localization foundation model (e.g., SAM) and semantic knowledge extracted from a ViL model (e.g., CLIP), in a synergistic framework. Taking the ViL model's visual encoder as the feature backbone, we inject the space-aware feature into the learnable queries and CLIP features within the transformer decoder. In addition, we devise a mask proposal ensemble strategy for further improving the recall rate and mask quality. To fully exploit pre-trained knowledge while minimizing training overhead, we freeze both foundation models, focusing optimization efforts solely on a lightweight transformer decoder for mask proposal generation-the performance bottleneck. Extensive experiments demonstrate that FrozenSeg advances state-of-the-art results across various segmentation benchmarks, trained exclusively on COCO panoptic data, and tested in a zero-shot manner. Code is available at https://github.com/chenxi52/FrozenSeg.