FUSION: Fully Integration of Vision-Language Representations for Deep Cross-Modal Understanding

Zheng Liu, Mengjie Liu, Jingzhou Chen, Jingwei Xu, Bin Cui, Conghui He, Wentao Zhang

2025-04-15

Summary

This paper talks about FUSION, a new AI model that can deeply understand both images and text by combining information from both at a very detailed level. FUSION is designed to work better than previous models when it comes to tasks that need both seeing and reading, like answering questions about pictures or describing what’s happening in an image.

What's the problem?

The problem is that most AI models only mix information from images and text in a shallow way, which means they might miss important details or connections between what they see and what they read. This makes it hard for these models to fully understand complex situations that involve both pictures and words.

What's the solution?

The researchers built FUSION to blend vision and language information much more deeply. It looks at images down to the pixel level and connects that with the meaning of the words in a question or description. By doing this, FUSION is able to understand and answer questions about images with much greater accuracy and detail than older models.

Why it matters?

This work matters because it brings us closer to having AI that can truly understand the world the way people do, by connecting what it sees and what it reads in a meaningful way. This could lead to smarter search engines, better digital assistants, and more helpful tools for learning and creativity.

Abstract

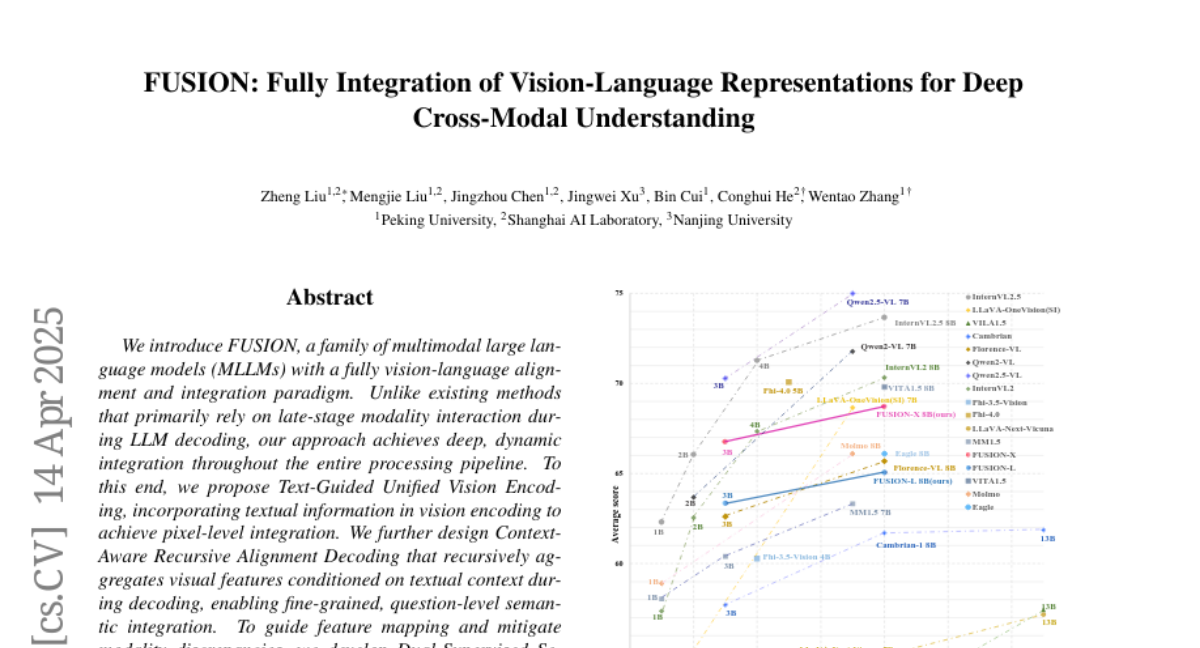

FUSION, a multimodal large language model, integrates vision and language through deep, pixel-level and question-level integration, achieving superior performance compared to existing methods.