FuzzCoder: Byte-level Fuzzing Test via Large Language Model

Liqun Yang, Jian Yang, Chaoren Wei, Guanglin Niu, Ge Zhang, Yunli Wang, Linzheng ChaI, Wanxu Xia, Hongcheng Guo, Shun Zhang, Jiaheng Liu, Yuwei Yin, Junran Peng, Jiaxin Ma, Liang Sun, Zhoujun Li

2024-09-06

Summary

This paper talks about FuzzCoder, a new method that uses large language models to improve the process of finding software vulnerabilities through fuzz testing.

What's the problem?

Fuzzing is a technique used to test software by sending it unexpected or random inputs to find bugs and security issues. However, creating these inputs efficiently is challenging, and traditional methods often rely on random changes to existing valid inputs. This can lead to slow testing and missed vulnerabilities.

What's the solution?

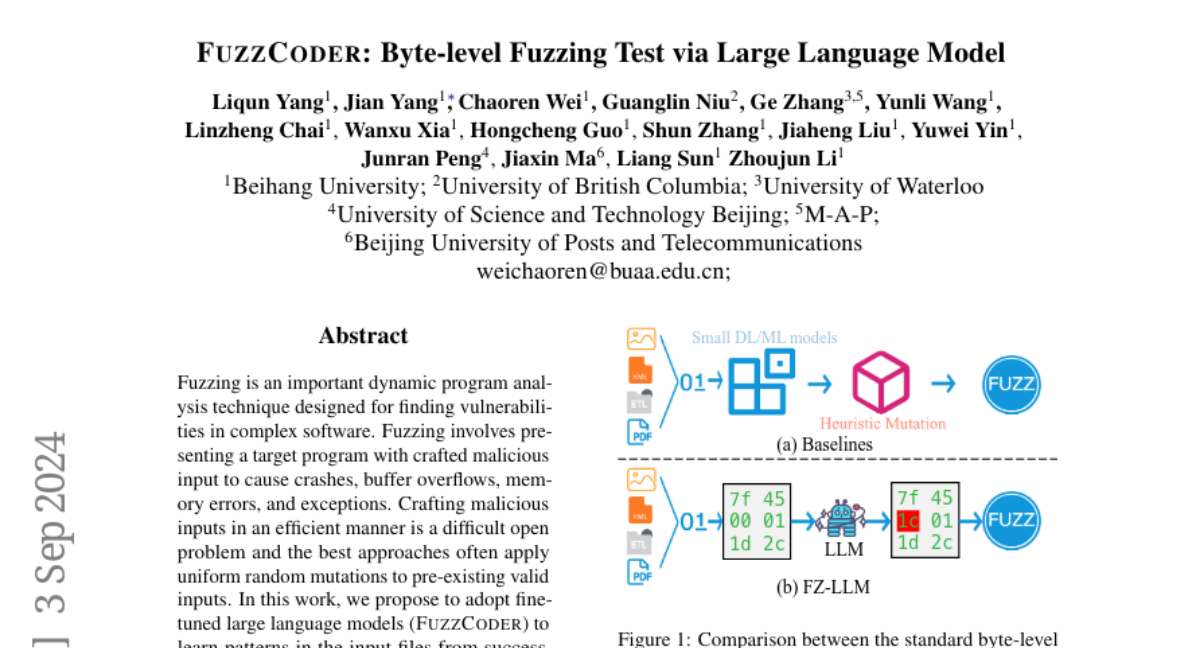

The authors propose FuzzCoder, which uses fine-tuned large language models to learn from past successful attacks and create better input mutations for fuzz testing. By training the model on a special dataset of successful fuzzing attempts, FuzzCoder can intelligently predict where to make changes in the input data to trigger errors in the software being tested. This approach allows for faster and more effective identification of vulnerabilities compared to traditional methods.

Why it matters?

This research is important because it enhances the ability to find security flaws in software more efficiently. By using advanced AI techniques, FuzzCoder can help developers create safer software, ultimately protecting users from potential exploits and improving overall software quality.

Abstract

Fuzzing is an important dynamic program analysis technique designed for finding vulnerabilities in complex software. Fuzzing involves presenting a target program with crafted malicious input to cause crashes, buffer overflows, memory errors, and exceptions. Crafting malicious inputs in an efficient manner is a difficult open problem and the best approaches often apply uniform random mutations to pre-existing valid inputs. In this work, we propose to adopt fine-tuned large language models (FuzzCoder) to learn patterns in the input files from successful attacks to guide future fuzzing explorations. Specifically, we develop a framework to leverage the code LLMs to guide the mutation process of inputs in fuzzing. The mutation process is formulated as the sequence-to-sequence modeling, where LLM receives a sequence of bytes and then outputs the mutated byte sequence. FuzzCoder is fine-tuned on the created instruction dataset (Fuzz-Instruct), where the successful fuzzing history is collected from the heuristic fuzzing tool. FuzzCoder can predict mutation locations and strategies locations in input files to trigger abnormal behaviors of the program. Experimental results show that FuzzCoder based on AFL (American Fuzzy Lop) gain significant improvements in terms of effective proportion of mutation (EPM) and number of crashes (NC) for various input formats including ELF, JPG, MP3, and XML.