GASP: Unifying Geometric and Semantic Self-Supervised Pre-training for Autonomous Driving

William Ljungbergh, Adam Lilja, Adam Tonderski, Arvid Laveno Ling, Carl Lindström, Willem Verbeke, Junsheng Fu, Christoffer Petersson, Lars Hammarstrand, Michael Felsberg

2025-03-21

Summary

This paper explores how to pre-train AI for self-driving cars on large amounts of unlabeled driving data so that it understands both the geometry (shapes and locations) and the semantics (what things are) of its surroundings.

What's the problem?

Self-driving cars need a detailed understanding of their environment, but it is hard to obtain enough labeled data to train them effectively.

What's the solution?

The researchers created a pre-training method called GASP that trains the AI to predict, for any queried point in space and future time, whether that point will be occupied, whether the car itself will pass through it, and what high-level features a vision foundation model would assign to it. This lets the AI learn the structure of the world and how it changes over time without needing labeled data.

Why does it matter?

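This work matters because a better understanding of the surrounding world could make self-driving cars safer and more reliable. The authors report improvements in semantic occupancy forecasting, online mapping, and ego trajectory prediction.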

Abstract

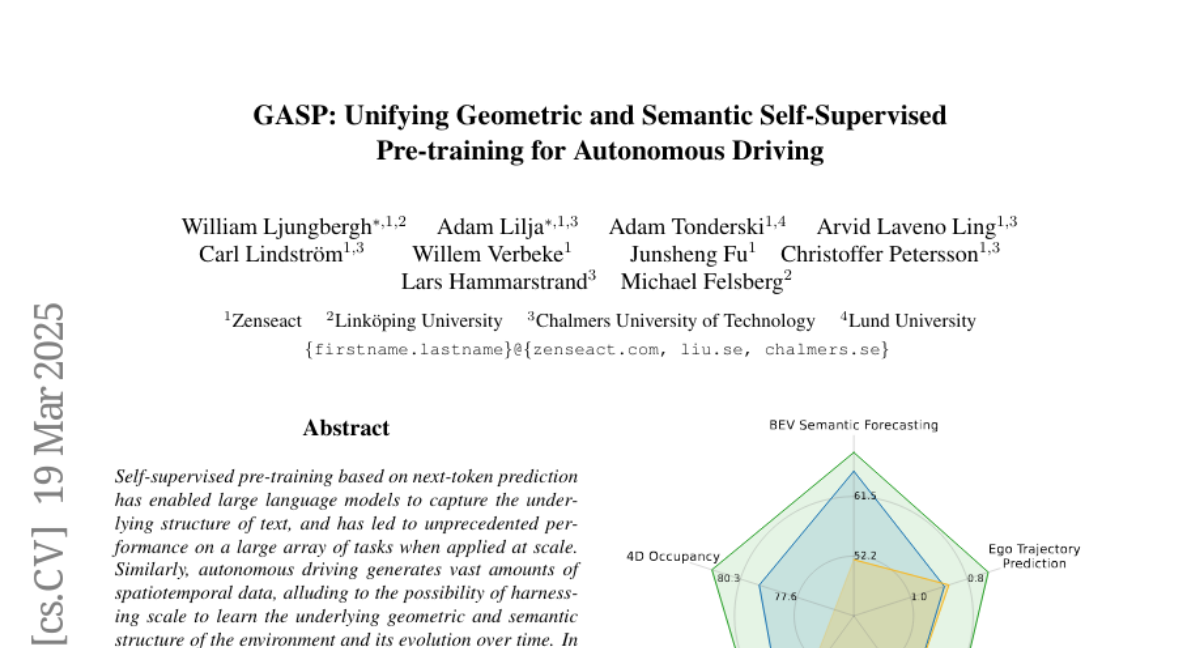

Self-supervised pre-training based on next-token prediction has enabled large language models to capture the underlying structure of text, and has led to unprecedented performance on a large array of tasks when applied at scale. Similarly, autonomous driving generates vast amounts of spatiotemporal data, alluding to the possibility of harnessing scale to learn the underlying geometric and semantic structure of the environment and its evolution over time. In this direction, we propose a geometric and semantic self-supervised pre-training method, GASP, that learns a unified representation by predicting, at any queried future point in spacetime, (1) general occupancy, capturing the evolving structure of the 3D scene; (2) ego occupancy, modeling the ego vehicle path through the environment; and (3) distilled high-level features from a vision foundation model. By modeling geometric and semantic 4D occupancy fields instead of raw sensor measurements, the model learns a structured, generalizable representation of the environment and its evolution through time. We validate GASP on multiple autonomous driving benchmarks, demonstrating significant improvements in semantic occupancy forecasting, online mapping, and ego trajectory prediction. Our results demonstrate that continuous 4D geometric and semantic occupancy prediction provides a scalable and effective pre-training paradigm for autonomous driving. For code and additional visualizations, see https://research.zenseact.com/publications/gasp/
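
To make the three prediction targets in the abstract concrete, here is a minimal sketch of how per-query losses for general occupancy, ego occupancy, and distilled features might be combined. It is not the authors' implementation. The abstract does not state the loss functions, so binary cross-entropy for the two occupancy terms, cosine distance for the feature term, the equal weights, the random linear "head", and all function names are assumptions chosen for illustration.

```python
import numpy as np


def bce_with_logits(logits, targets):
    """Mean binary cross-entropy computed stably from raw logits."""
    return float(np.mean(
        np.maximum(logits, 0.0) - logits * targets
        + np.log1p(np.exp(-np.abs(logits)))
    ))


def cosine_distance(pred, target, eps=1e-8):
    """Mean (1 - cosine similarity) between predicted and teacher features."""
    pred_unit = pred / (np.linalg.norm(pred, axis=-1, keepdims=True) + eps)
    target_unit = target / (np.linalg.norm(target, axis=-1, keepdims=True) + eps)
    return float(np.mean(1.0 - np.sum(pred_unit * target_unit, axis=-1)))


def pretraining_loss(occ_logits, occ_targets, ego_logits, ego_targets,
                     feat_pred, feat_targets, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of the three per-query terms (weights are hypothetical)."""
    w_occ, w_ego, w_feat = weights
    return (w_occ * bce_with_logits(occ_logits, occ_targets)
            + w_ego * bce_with_logits(ego_logits, ego_targets)
            + w_feat * cosine_distance(feat_pred, feat_targets))


# Toy data: 5 queried spacetime points (x, y, z, t) and 8-dim teacher features.
rng = np.random.default_rng(0)
queries = rng.uniform(-1.0, 1.0, size=(5, 4))
occ_targets = rng.integers(0, 2, size=5).astype(float)   # point occupied?
ego_targets = rng.integers(0, 2, size=5).astype(float)   # ego vehicle there?
feat_targets = rng.normal(size=(5, 8))                   # stand-in teacher features

# Stand-in for a model head: a random linear map from each query
# to 2 logits (general occupancy, ego occupancy) plus 8 feature values.
head = rng.normal(size=(4, 10))
outputs = queries @ head
loss = pretraining_loss(outputs[:, 0], occ_targets,
                        outputs[:, 1], ego_targets,
                        outputs[:, 2:], feat_targets)
print(f"toy loss: {loss:.4f}")
```

In this sketch every query is an arbitrary continuous point rather than a cell of a fixed grid, which mirrors the abstract's "any queried future point in spacetime"; a real model would condition on sensor history instead of using the query coordinates alone.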