Gaussian Mixture Flow Matching Models

Hansheng Chen, Kai Zhang, Hao Tan, Zexiang Xu, Fujun Luan, Leonidas Guibas, Gordon Wetzstein, Sai Bi

2025-04-08

Summary

This paper introduces GMFlow, an AI image generator that predicts a mixture of several Gaussian distributions at each step instead of a single average, producing more realistic pictures in fewer steps and with less over-saturated colors.

What's the problem?

Current diffusion and flow matching image generators lose quality when run with only a few sampling steps, and they tend to produce over-saturated colors when classifier-free guidance (CFG) is used to improve results.

What's the solution?

GMFlow predicts a Gaussian mixture over possible flow velocities rather than a single mean, uses dedicated solvers that exploit this distribution for accurate sampling in just a few steps, and replaces standard guidance with a probabilistic guidance scheme that reduces over-saturation.

Why does it matter?

This helps create AI art tools that make high-quality images faster, saving energy and time for things like game design or social media content.

Abstract

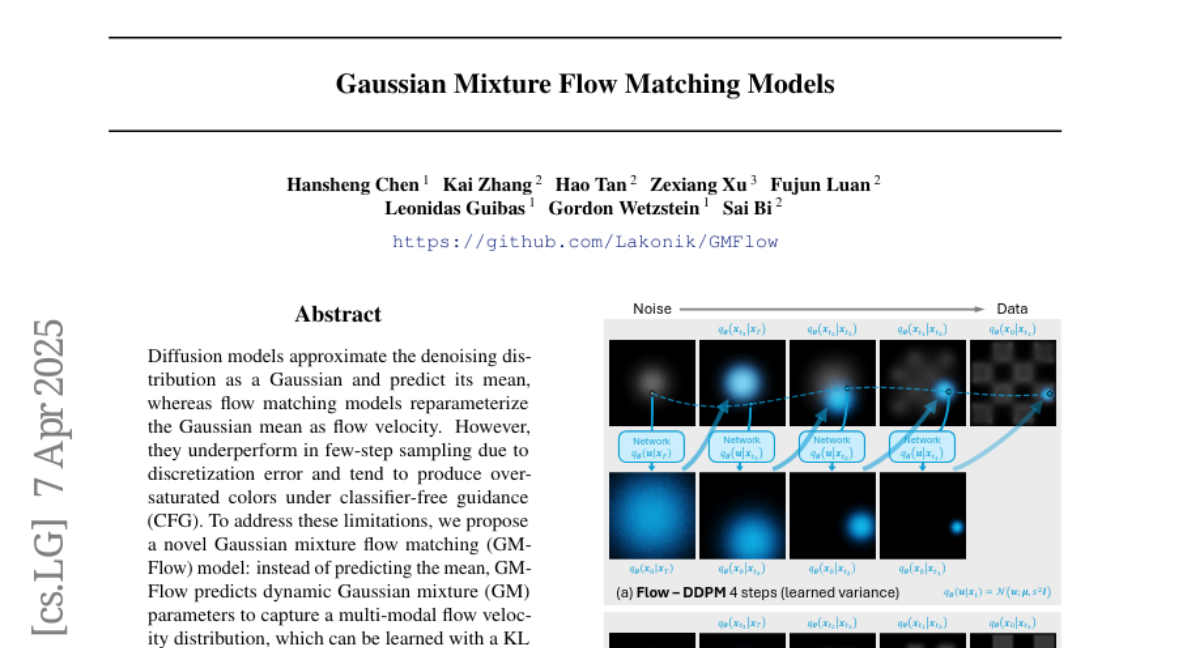

Diffusion models approximate the denoising distribution as a Gaussian and predict its mean, whereas flow matching models reparameterize the Gaussian mean as flow velocity. However, they underperform in few-step sampling due to discretization error and tend to produce over-saturated colors under classifier-free guidance (CFG). To address these limitations, we propose a novel Gaussian mixture flow matching (GMFlow) model: instead of predicting the mean, GMFlow predicts dynamic Gaussian mixture (GM) parameters to capture a multi-modal flow velocity distribution, which can be learned with a KL divergence loss. We demonstrate that GMFlow generalizes previous diffusion and flow matching models where a single Gaussian is learned with an $L_2$ denoising loss. For inference, we derive GM-SDE/ODE solvers that leverage analytic denoising distributions and velocity fields for precise few-step sampling. Furthermore, we introduce a novel probabilistic guidance scheme that mitigates the over-saturation issues of CFG and improves image generation quality. Extensive experiments demonstrate that GMFlow consistently outperforms flow matching baselines in generation quality, achieving a Precision of 0.942 with only 6 sampling steps on ImageNet $256 \times 256$.
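To make the link between the Gaussian mixture objective and the usual single-Gaussian loss concrete, here is a minimal NumPy sketch. It is not the authors' implementation. It assumes isotropic components with one shared standard deviation `sigma`, and it uses the negative log-likelihood of sampled velocities as the training signal, which is the standard sample-based form of minimizing a KL divergence to the data distribution. The function name `gm_nll` and all argument names are hypothetical.

```python
import numpy as np

def gm_nll(u, logits, means, sigma):
    """Negative log-likelihood of velocities under an isotropic Gaussian mixture.

    u:      (N, D) sampled target velocities
    logits: (N, K) unnormalized mixture weights
    means:  (N, K, D) component means
    sigma:  scalar standard deviation shared by all components (assumption)
    """
    d = u.shape[1]
    # Stable log-softmax of the mixture weights.
    log_w = logits - logits.max(axis=1, keepdims=True)
    log_w = log_w - np.log(np.exp(log_w).sum(axis=1, keepdims=True))
    # Squared distance from each sample to each component mean: (N, K).
    sq = ((u[:, None, :] - means) ** 2).sum(axis=-1)
    log_comp = -0.5 * sq / sigma**2 - 0.5 * d * np.log(2 * np.pi * sigma**2)
    # Log-sum-exp over components gives the mixture log-density.
    z = log_w + log_comp
    m = z.max(axis=1, keepdims=True)
    log_p = m[:, 0] + np.log(np.exp(z - m).sum(axis=1))
    return -log_p.mean()

# With K = 1 the loss is a scaled squared error plus a constant.
rng = np.random.default_rng(0)
u = rng.normal(size=(4, 3))
mu = rng.normal(size=(4, 1, 3))
nll = gm_nll(u, np.zeros((4, 1)), mu, sigma=1.0)
l2 = 0.5 * ((u - mu[:, 0]) ** 2).sum(axis=1).mean()
const = 0.5 * 3 * np.log(2 * np.pi)
assert np.isclose(nll, l2 + const)
```

With a single component and fixed variance, the only term that depends on the predicted mean is the squared error, so minimizing this loss matches minimizing an $L_2$ loss. This is the sense in which a single-Gaussian model is a special case of the mixture.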