GenCA: A Text-conditioned Generative Model for Realistic and Drivable Codec Avatars

Keqiang Sun, Amin Jourabloo, Riddhish Bhalodia, Moustafa Meshry, Yu Rong, Zhengyu Yang, Thu Nguyen-Phuoc, Christian Haene, Jiu Xu, Sam Johnson, Hongsheng Li, Sofien Bouaziz

2024-08-28

Summary

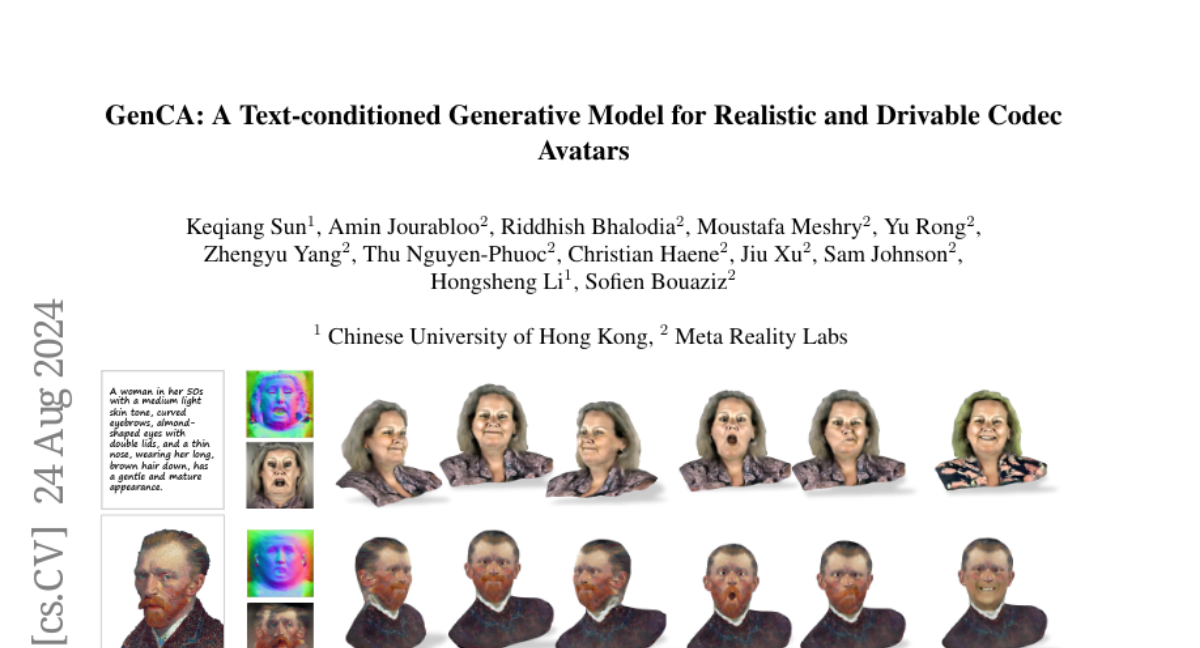

This paper introduces GenCA, a text-conditioned generative model that creates realistic, drivable 3D facial avatars from text descriptions. Such avatars can be used in applications like gaming and virtual reality.

What's the problem?

Creating high-quality 3D avatars typically takes a lot of time and effort, often involving a complicated scanning and reconstruction process for each avatar. This makes it hard to quickly produce new avatars or modify existing ones. Existing generative methods also have limitations: they may produce only static avatars, lack photo-realism, miss facial details such as hair, eyes, and mouth interior, or offer limited control over expressions.

What's the solution?

GenCA addresses these issues with a generative model that learns a strong prior from data to create photo-realistic avatars of diverse identities, including details such as hair, eyes, and mouth interior. Text prompts let users generate new identities or edit existing ones, while the avatars' expressions are driven through a non-parametric latent expression space. The model combines the generation and editing capabilities of latent diffusion models with a strong prior model for expression driving, enabling high-quality avatar creation without per-avatar scanning.

Why does it matter?

This research is important because it simplifies the creation of realistic 3D avatars, making them more accessible for video games, virtual and mixed reality, telepresence, and film production. Beyond generating and driving avatars, the model also supports downstream uses such as avatar editing and single-shot avatar reconstruction.

Abstract

Photo-realistic and controllable 3D avatars are crucial for various applications such as virtual and mixed reality (VR/MR), telepresence, gaming, and film production. Traditional methods for avatar creation often involve time-consuming scanning and reconstruction processes for each avatar, which limits their scalability. Furthermore, these methods do not offer the flexibility to sample new identities or modify existing ones. On the other hand, by learning a strong prior from data, generative models provide a promising alternative to traditional reconstruction methods, easing the time constraints for both data capture and processing. Additionally, generative methods enable downstream applications beyond reconstruction, such as editing and stylization. Nonetheless, the research on generative 3D avatars is still in its infancy, and therefore current methods still have limitations such as creating static avatars, lacking photo-realism, having incomplete facial details, or having limited drivability. To address this, we propose a text-conditioned generative model that can generate photo-realistic facial avatars of diverse identities, with more complete details like hair, eyes and mouth interior, and which can be driven through a powerful non-parametric latent expression space. Specifically, we integrate the generative and editing capabilities of latent diffusion models with a strong prior model for avatar expression driving. Our model can generate and control high-fidelity avatars, even those out-of-distribution. We also highlight its potential for downstream applications, including avatar editing and single-shot avatar reconstruction.
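The abstract describes pairing a latent diffusion model (for text-conditioned generation and editing) with a separate prior model for expression driving. The toy NumPy sketch below illustrates only that general split, under loudly stated assumptions: an identity is a single latent vector sampled with standard DDPM ancestral sampling from a text embedding, and a separate expression latent is supplied to a decoder at drive time, so one sampled identity can be re-rendered with many expressions. The noise predictor, decoder, dimensions, and all names (`predict_noise`, `sample_identity_latent`, `decode_avatar`, `ID_DIM`, and so on) are hypothetical placeholders with random weights. They are not GenCA's networks, latent layout, or API.

```python
import numpy as np

# Hypothetical sizes; the paper's actual latent dimensions are not stated here.
ID_DIM = 16     # identity latent size (assumption)
EXPR_DIM = 8    # expression latent size (assumption)
TEXT_DIM = 4    # text embedding size (assumption)
N_OUT = 30      # decoder output size, e.g. flattened geometry (assumption)
T = 50          # number of diffusion steps (assumption)

rng = np.random.default_rng(0)

# Standard DDPM linear noise schedule.
betas = np.linspace(1e-4, 0.02, T)
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)

# Random weights standing in for trained networks (hypothetical).
W_text = rng.normal(scale=0.1, size=(TEXT_DIM, ID_DIM))
W_id = rng.normal(size=(ID_DIM, N_OUT))
W_expr = rng.normal(size=(EXPR_DIM, N_OUT))

def predict_noise(z_t, t, text_emb):
    """Toy text-conditioned noise predictor; a real one would be a trained network."""
    return 0.1 * z_t + (text_emb @ W_text) * (t / T)

def sample_identity_latent(text_emb, rng):
    """DDPM ancestral sampling of an identity latent, conditioned on a text embedding."""
    z = rng.standard_normal(ID_DIM)
    for t in reversed(range(T)):
        eps = predict_noise(z, t, text_emb)
        # Posterior mean: (z_t - beta_t / sqrt(1 - alpha_bar_t) * eps) / sqrt(alpha_t)
        mean = (z - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps) / np.sqrt(alphas[t])
        # Add noise with variance beta_t on every step except the last one.
        z = mean + np.sqrt(betas[t]) * rng.standard_normal(ID_DIM) if t > 0 else mean
    return z

def decode_avatar(z_id, z_expr):
    """Hypothetical decoder: combines a fixed identity with a per-frame expression code."""
    return np.tanh(z_id @ W_id + z_expr @ W_expr)

# Usage: sample one identity, then drive it with several expression codes.
text_emb = rng.standard_normal(TEXT_DIM)  # placeholder for an encoded text prompt
z_id = sample_identity_latent(text_emb, rng)
frames = [decode_avatar(z_id, rng.standard_normal(EXPR_DIM)) for _ in range(3)]
print(len(frames), frames[0].shape)  # 3 (30,)
```

Keeping identity and expression in separate latents is what makes the sketch "drivable": the expensive, text-conditioned sampling runs once per identity, and each new frame only needs a fresh expression code passed to the decoder.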