GenDoP: Auto-regressive Camera Trajectory Generation as a Director of Photography

Mengchen Zhang, Tong Wu, Jing Tan, Ziwei Liu, Gordon Wetzstein, Dahua Lin

2025-04-10

Summary

This paper introduces GenDoP, an auto-regressive model that generates camera trajectories for video. It learns from real-world film shots so that the resulting camera movements resemble the expressive, intentional work of a Director of Photography.

What's the problem?

Current methods for planning camera movements either rely on geometric optimization and handcrafted procedural rules, or use learned models that inherit structural biases and follow text instructions poorly. The result is stiff or off-target shots.

What's the solution?

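GenDoP is an auto-regressive, decoder-only Transformer trained on DataDoP, a dataset of 29K real-world shots with free-moving camera trajectories, depth maps, and detailed captions. Given a text description, like ‘slow zoom on the hero’s face’, and RGBD input, it generates smooth, expressive camera paths that match the description.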

Why does it matter?

This helps filmmakers and content creators make videos with professional-looking camera work faster and cheaper, especially for animations, games, or virtual reality scenes.

Abstract

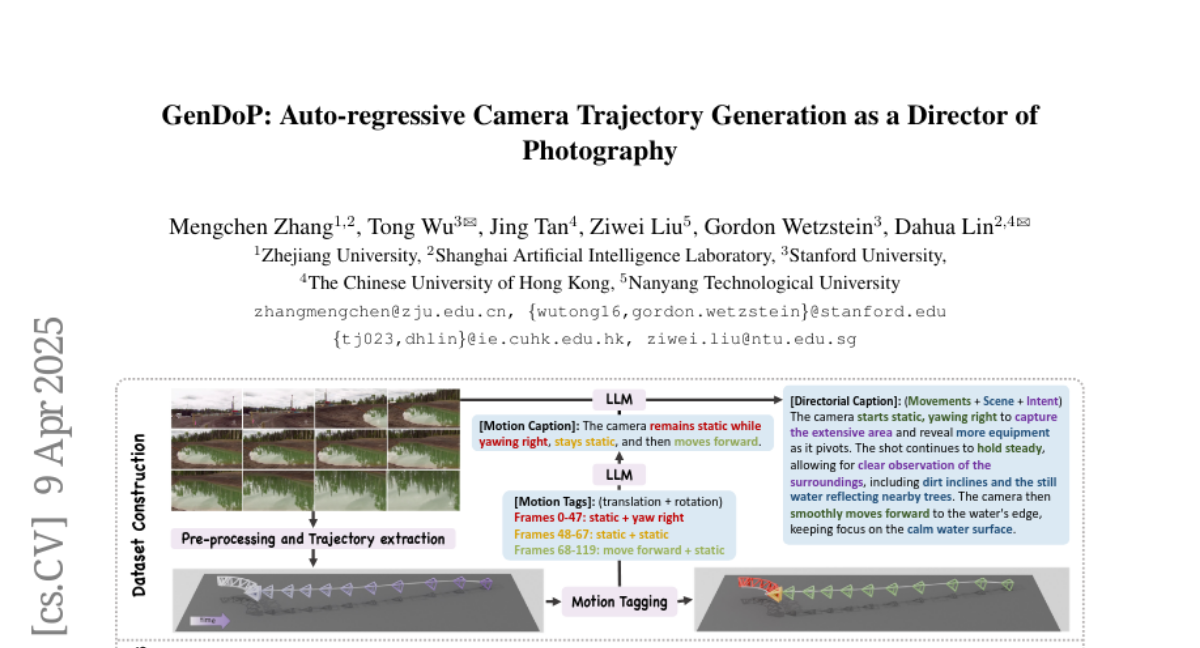

Camera trajectory design plays a crucial role in video production, serving as a fundamental tool for conveying directorial intent and enhancing visual storytelling. In cinematography, Directors of Photography meticulously craft camera movements to achieve expressive and intentional framing. However, existing methods for camera trajectory generation remain limited: Traditional approaches rely on geometric optimization or handcrafted procedural systems, while recent learning-based methods often inherit structural biases or lack textual alignment, constraining creative synthesis. In this work, we introduce an auto-regressive model inspired by the expertise of Directors of Photography to generate artistic and expressive camera trajectories. We first introduce DataDoP, a large-scale multi-modal dataset containing 29K real-world shots with free-moving camera trajectories, depth maps, and detailed captions in specific movements, interaction with the scene, and directorial intent. Thanks to the comprehensive and diverse database, we further train an auto-regressive, decoder-only Transformer for high-quality, context-aware camera movement generation based on text guidance and RGBD inputs, named GenDoP. Extensive experiments demonstrate that compared to existing methods, GenDoP offers better controllability, finer-grained trajectory adjustments, and higher motion stability. We believe our approach establishes a new standard for learning-based cinematography, paving the way for future advancements in camera control and filmmaking. Our project website: https://kszpxxzmc.github.io/GenDoP/.
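The abstract describes an auto-regressive, decoder-only Transformer, a kind of model that typically operates on sequences of discrete tokens. The abstract does not say how GenDoP turns camera poses into tokens, so the following is only a minimal sketch of one common option, uniform quantization of per-frame camera parameters. The value range, the bin count, the three-parameter pose layout, and all function names are assumptions made for illustration.

```python
# Hypothetical sketch: range, bin count, and pose layout are assumptions,
# not values taken from the GenDoP paper.
NUM_BINS = 256
LOW, HIGH = -1.0, 1.0


def quantize(value, low=LOW, high=HIGH, num_bins=NUM_BINS):
    """Map a continuous value to an integer token in [0, num_bins - 1]."""
    clipped = min(max(value, low), high)      # clamp out-of-range values
    scaled = (clipped - low) / (high - low)   # normalize to [0, 1]
    return min(int(scaled * num_bins), num_bins - 1)


def dequantize(token, low=LOW, high=HIGH, num_bins=NUM_BINS):
    """Map a token back to the center of its bin."""
    return low + (token + 0.5) * (high - low) / num_bins


def trajectory_to_tokens(trajectory):
    """Flatten per-frame parameter tuples into one token sequence."""
    return [quantize(v) for pose in trajectory for v in pose]


def tokens_to_trajectory(tokens, params_per_pose):
    """Regroup a flat token sequence into per-frame parameter tuples."""
    values = [dequantize(t) for t in tokens]
    return [
        tuple(values[i:i + params_per_pose])
        for i in range(0, len(values), params_per_pose)
    ]


# Two frames, each with three illustrative parameters (e.g. x, y, z).
trajectory = [(0.0, 0.1, -0.5), (0.05, 0.1, -0.4)]
tokens = trajectory_to_tokens(trajectory)
restored = tokens_to_trajectory(tokens, params_per_pose=3)
```

In a setup like this, a sequence model would predict the next token from the text prompt and the tokens generated so far, and the predicted tokens would be dequantized back into a camera path. The round trip loses at most half a bin width per value, so the bin count trades sequence vocabulary size against trajectory precision.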