Generalized Few-shot 3D Point Cloud Segmentation with Vision-Language Model

Zhaochong An, Guolei Sun, Yun Liu, Runjia Li, Junlin Han, Ender Konukoglu, Serge Belongie

2025-03-24

Summary

This paper is about helping AI models segment 3D point clouds into new object classes when only a few labeled examples of each new class are available, without forgetting the classes they already know.

What's the problem?

AI models struggle to accurately segment new classes in 3D point clouds when they have only a few labeled examples of those classes. Existing methods learn about new classes only from these few samples, so the knowledge they can extract is sparse.

What's the solution?

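The researchers developed GFS-VL, a method that combines dense but noisy pseudo-labels from a large 3D vision-language model with the few precise labeled examples. It filters out unreliable pseudo-labels, fills the resulting gaps using both sources of knowledge, and mixes the few-shot examples into training scenes. The authors also introduce two more challenging benchmarks with more diverse new classes.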

Why it matters?

This work matters because it can lead to AI systems that are better at understanding and interacting with the 3D world around us, which is important for applications like robotics and self-driving cars.

Abstract

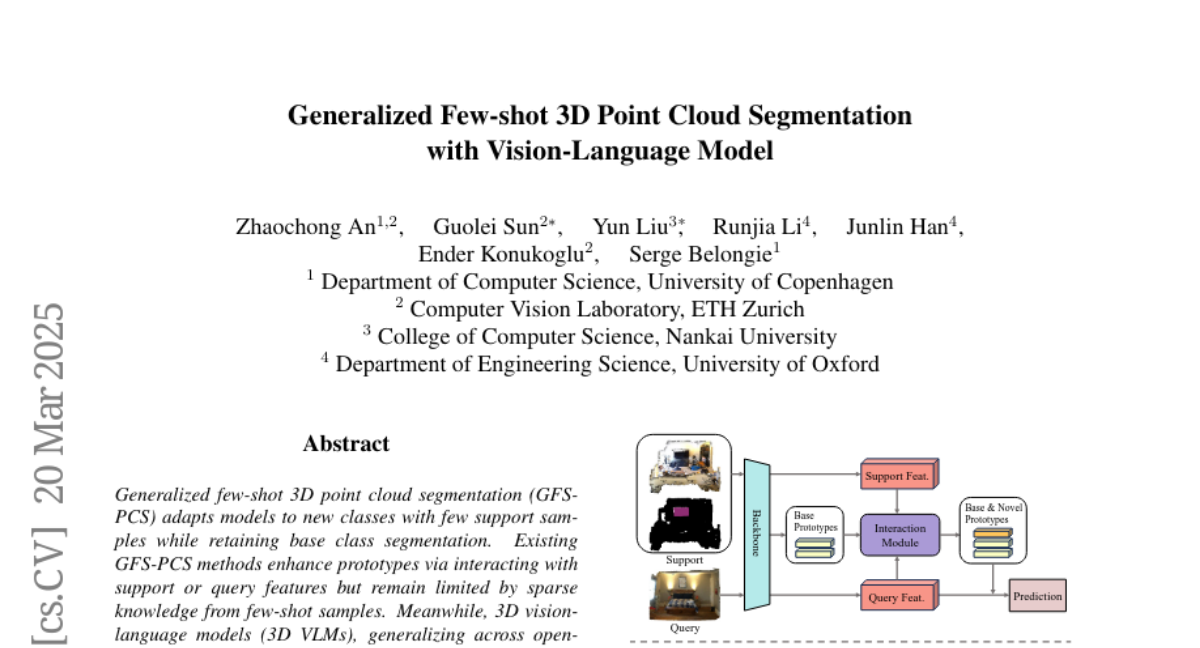

Generalized few-shot 3D point cloud segmentation (GFS-PCS) adapts models to new classes with few support samples while retaining base class segmentation. Existing GFS-PCS methods enhance prototypes by interacting with support or query features but remain limited by the sparse knowledge available from few-shot samples. Meanwhile, 3D vision-language models (3D VLMs), which generalize across open-world novel classes, contain rich but noisy novel class knowledge. In this work, we introduce GFS-VL, a GFS-PCS framework that synergizes dense but noisy pseudo-labels from 3D VLMs with precise yet sparse few-shot samples to maximize the strengths of both. Specifically, we present a prototype-guided pseudo-label selection to filter low-quality regions, followed by an adaptive infilling strategy that combines knowledge from pseudo-label contexts and few-shot samples to adaptively label the filtered, unlabeled areas. Additionally, we design a novel-base mix strategy to embed few-shot samples into training scenes, preserving essential context for improved novel class learning. Moreover, recognizing the limited diversity in current GFS-PCS benchmarks, we introduce two challenging benchmarks with diverse novel classes for comprehensive generalization evaluation. Experiments validate the effectiveness of our framework across models and datasets. Our approach and benchmarks provide a solid foundation for advancing GFS-PCS in the real world. The code is available at https://github.com/ZhaochongAn/GFS-VL
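The abstract names three components (prototype-guided pseudo-label selection, adaptive infilling, and novel-base mix) without giving their formulas. The following is a minimal NumPy sketch of how such a pipeline could look, built on assumptions that are ours and not the paper's: per-point features come from a shared backbone, VLM pseudo-labels are integer novel-class ids with -1 for unlabeled points, cosine similarity to the mean of the support features serves as the quality signal, and the fixed threshold `thresh` and blending weight `alpha` are hypothetical. All function names are hypothetical too; the authors' actual implementation is in the repository linked above.

```python
import numpy as np

IGNORE = -1  # hypothetical id for unlabeled or filtered points


def l2_normalize(x, axis=-1, eps=1e-8):
    """Scale vectors to unit length so dot products are cosine similarities."""
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)


def support_prototypes(support_feats, support_labels, num_classes):
    """One prototype per novel class: the mean feature of its support points.
    Assumes every novel class has at least one support point."""
    protos = np.stack([support_feats[support_labels == c].mean(axis=0)
                       for c in range(num_classes)])
    return l2_normalize(protos)


def select_pseudo_labels(feats, pseudo, protos, thresh=0.5):
    """Prototype-guided selection (sketch): drop every point of a VLM-predicted
    class region whose mean feature disagrees with that class's prototype."""
    kept = pseudo.copy()
    for c in np.unique(pseudo[pseudo != IGNORE]):
        region = pseudo == c
        region_proto = l2_normalize(feats[region].mean(axis=0))
        if float(region_proto @ protos[c]) < thresh:
            kept[region] = IGNORE  # low-quality region, treat as unlabeled
    return kept


def adaptive_infill(feats, kept, protos, alpha=0.5, thresh=0.5):
    """Infilling (sketch): label unlabeled points by their most similar
    prototype, where each prototype blends the support mean with the mean
    of the surviving pseudo-labeled context for that class."""
    mixed = protos.copy()
    for c in np.unique(kept[kept != IGNORE]):
        context = l2_normalize(feats[kept == c].mean(axis=0))
        mixed[c] = l2_normalize(alpha * protos[c] + (1 - alpha) * context)
    out = kept.copy()
    holes = kept == IGNORE
    sims = l2_normalize(feats[holes]) @ mixed.T  # (num_holes, num_classes)
    confident = sims.max(axis=1) >= thresh
    # Points that match no prototype well enough stay unlabeled.
    out[holes] = np.where(confident, sims.argmax(axis=1), IGNORE)
    return out


def novel_base_mix(scene_xyz, scene_labels, support_xyz, support_labels, offset):
    """Novel-base mix (sketch): paste a whole support sample, context included,
    into a base training scene instead of cropping only the novel points.
    Assumes both label arrays already share one joint label space."""
    xyz = np.concatenate([scene_xyz, support_xyz + offset], axis=0)
    labels = np.concatenate([scene_labels, support_labels], axis=0)
    return xyz, labels
```

Filtering whole class regions rather than single points follows the abstract's wording ("filter low-quality regions"); a per-point threshold would be an equally plausible reading. The mix step keeps the support sample's surrounding points so that a novel object is learned together with its context, which is the motivation the abstract gives for novel-base mix.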