GeometryCrafter: Consistent Geometry Estimation for Open-world Videos with Diffusion Priors

Tian-Xing Xu, Xiangjun Gao, Wenbo Hu, Xiaoyu Li, Song-Hai Zhang, Ying Shan

2025-04-02

Summary

This paper introduces a new method for creating accurate 3D models from regular videos, even if the videos are shaky or taken in different environments.

What's the problem?

Existing methods for creating 3D models from videos often struggle to maintain accurate shapes and can be unreliable.

What's the solution?

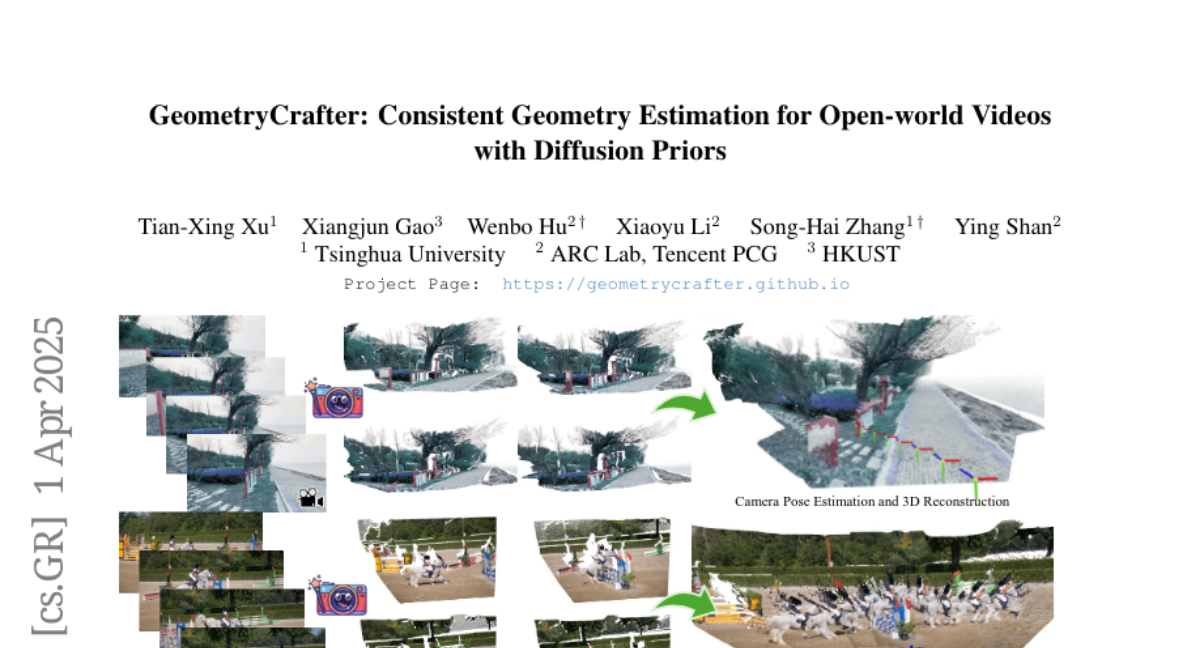

The researchers developed a system called GeometryCrafter that uses AI to create realistic and consistent 3D models from videos.

Why it matters?

This work matters because it can lead to more accurate 3D reconstructions for applications like virtual reality and robotics.

Abstract

Despite remarkable advancements in video depth estimation, existing methods exhibit inherent limitations in achieving geometric fidelity through the affine-invariant predictions, limiting their applicability in reconstruction and other metrically grounded downstream tasks. We propose GeometryCrafter, a novel framework that recovers high-fidelity point map sequences with temporal coherence from open-world videos, enabling accurate 3D/4D reconstruction, camera parameter estimation, and other depth-based applications. At the core of our approach lies a point map Variational Autoencoder (VAE) that learns a latent space agnostic to video latent distributions for effective point map encoding and decoding. Leveraging the VAE, we train a video diffusion model to model the distribution of point map sequences conditioned on the input videos. Extensive evaluations on diverse datasets demonstrate that GeometryCrafter achieves state-of-the-art 3D accuracy, temporal consistency, and generalization capability.