GIE-Bench: Towards Grounded Evaluation for Text-Guided Image Editing

Yusu Qian, Jiasen Lu, Tsu-Jui Fu, Xinze Wang, Chen Chen, Yinfei Yang, Wenze Hu, Zhe Gan

2025-05-19

Summary

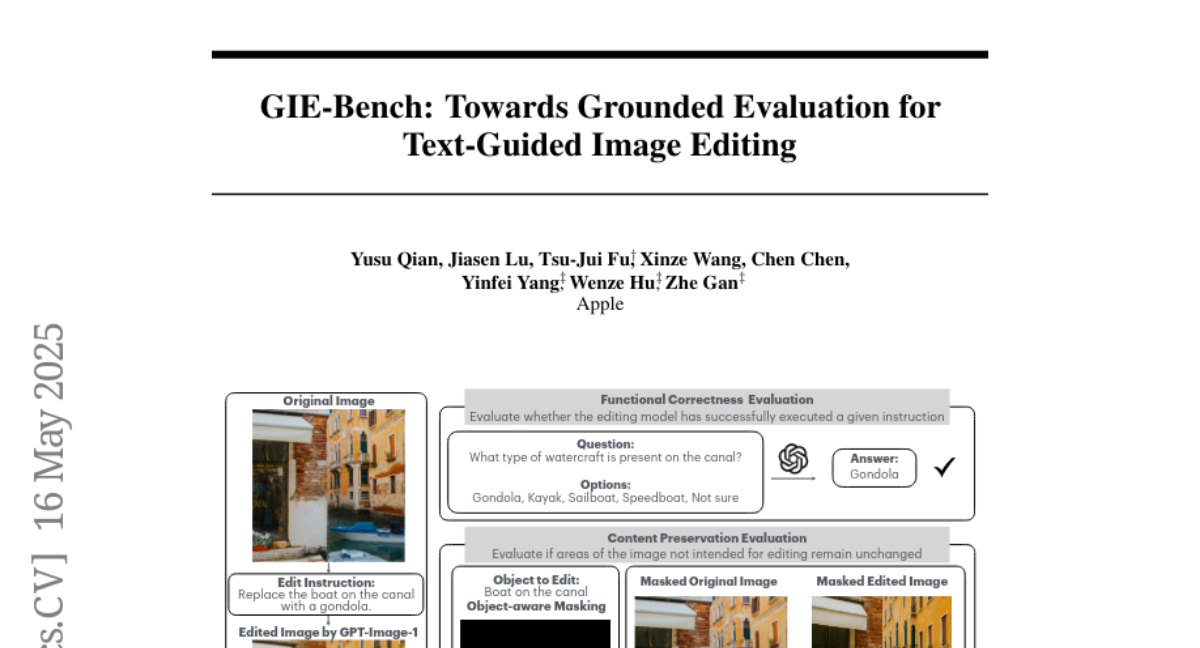

This paper introduces GIE-Bench, a new way to test how well computer programs can edit images based on what people say in text instructions.

What's the problem?

The main problem is that it's hard to tell if these image editing programs are actually doing what people ask them to do, and if they're keeping the important parts of the original image the same.

What's the solution?

To solve this, the researchers created a special test, or benchmark, that judges these programs by checking if the edits match what the text asks for and if the original image content is preserved. They use both computer-based scoring and real people's opinions to make sure the results are fair and accurate.

Why it matters?

This matters because as these editing programs get used more in real life, like for making art or editing photos, we need to be sure they work correctly and don't mess up the images in unexpected ways.

Abstract

A new benchmark evaluates text-guided image editing models for functional correctness and image content preservation using automatic metrics and human ratings.