GigaTok: Scaling Visual Tokenizers to 3 Billion Parameters for Autoregressive Image Generation

Tianwei Xiong, Jun Hao Liew, Zilong Huang, Jiashi Feng, Xihui Liu

2025-04-14

Summary

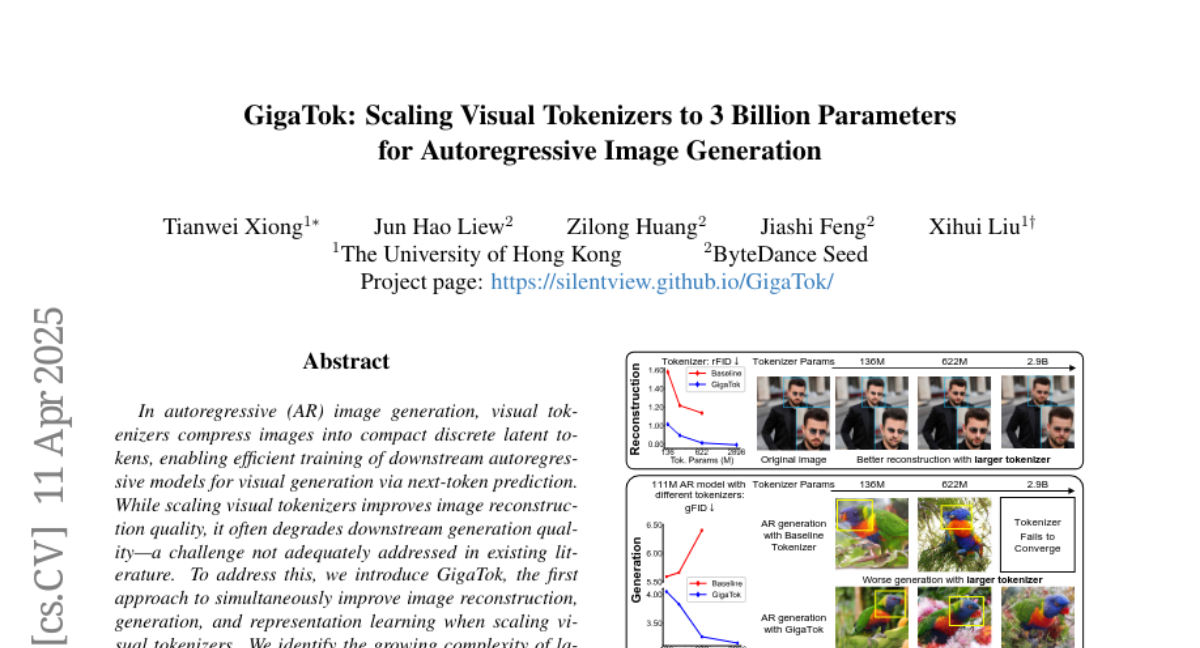

This paper introduces GigaTok, a visual tokenizer that turns images into tokens (like pieces of a puzzle) that AI models can use to reconstruct, generate, and represent images more accurately. At 3 billion parameters, GigaTok is much larger than previous tokenizers, and that scale helps it do a better job at these tasks.

What's the problem?

The problem is that current visual tokenizers, which break down images so AI models can work with them, often lose important details or don't represent images well enough. This makes it harder for AI to generate high-quality images or understand what's in a picture, which limits how good these systems can be.
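To make the idea of "losing details" concrete, here is a minimal toy sketch of tokenization. It is not GigaTok's implementation. It assumes each image patch is a tiny list of numbers and that the tokenizer has a small fixed "codebook" of allowed patterns; real tokenizers learn both with neural networks. The function names (`tokenize`, `detokenize`, `reconstruction_error`) and all values are hypothetical, chosen only for illustration.

```python
# Toy illustration only: a real visual tokenizer learns its encoder and codebook.

def tokenize(patches, codebook):
    """Map each patch to the index (token) of its nearest codebook entry."""
    tokens = []
    for patch in patches:
        # Squared distance from this patch to every codebook entry.
        distances = [
            sum((p - c) ** 2 for p, c in zip(patch, code)) for code in codebook
        ]
        tokens.append(distances.index(min(distances)))
    return tokens


def detokenize(tokens, codebook):
    """Rebuild patches by looking each token up in the codebook."""
    return [list(codebook[t]) for t in tokens]


def reconstruction_error(patches, rebuilt):
    """Mean squared difference between original and rebuilt patch values."""
    squared = [
        (p - r) ** 2
        for patch, new_patch in zip(patches, rebuilt)
        for p, r in zip(patch, new_patch)
    ]
    return sum(squared) / len(squared)


# Hypothetical data: three 2-value "patches" and a 3-entry codebook.
codebook = [(0.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
patches = [(0.1, 0.2), (0.9, 0.8), (0.2, 0.9)]

tokens = tokenize(patches, codebook)
rebuilt = detokenize(tokens, codebook)
error = reconstruction_error(patches, rebuilt)

print(tokens)  # one token id per patch
print(error)   # greater than zero: some detail was lost
```

The rebuilt patches are only the closest codebook entries, so the small differences between the original and the rebuilt values are exactly the "lost details" described above.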

What's the solution?

GigaTok solves this by making the tokenizer much larger and by adding semantic regularization, a training technique that pushes the tokenizer to capture the meaning of an image, not just the raw data. With 3 billion parameters and specific architectural choices, GigaTok can turn images into tokens in a way that keeps more of the important information, leading to better image reconstruction, generation, and representation.
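One common way to make a model "pay attention to meaning" is to add an extra term to its training loss, which is what the abstract refers to as semantic regularization. The sketch below shows that general idea under stated assumptions; this summary does not give the paper's actual formulation. It assumes the extra term measures how well the tokenizer's internal features line up with features from some reference model that already understands images. The function `tokenizer_loss`, the feature lists, and the `weight` value are all hypothetical.

```python
import math


def mean_squared_error(a, b):
    """Average squared difference between two equal-length lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def tokenizer_loss(image, reconstruction, tokenizer_features,
                   reference_features, weight=0.5):
    """Reconstruction loss plus a weighted semantic alignment penalty.

    Assumption: the penalty is 1 - cosine similarity between the tokenizer's
    features and those of a reference model. The weight is arbitrary.
    """
    reconstruction_term = mean_squared_error(image, reconstruction)
    semantic_term = 1.0 - cosine_similarity(tokenizer_features,
                                            reference_features)
    return reconstruction_term + weight * semantic_term


# Perfect reconstruction, features pointing the same way: no penalty.
aligned = tokenizer_loss([1.0, 0.0], [1.0, 0.0], [1.0, 0.0], [2.0, 0.0])

# Perfect reconstruction, but features unrelated to the reference model.
misaligned = tokenizer_loss([1.0, 0.0], [1.0, 0.0], [1.0, 0.0], [0.0, 1.0])

print(aligned, misaligned)
```

In this sketch, the second case is penalized even though the image is rebuilt perfectly, which shows how such a term rewards capturing meaning in addition to copying raw data.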

Why does it matter?

This work matters because it helps AI models create and understand images at a much higher quality. With better visual tokenizers like GigaTok, AI systems can be more accurate and useful in areas like art, design, and even scientific research.

Abstract

GigaTok improves image reconstruction, generation, and representation quality by scaling visual tokenizers with semantic regularization and specific architectural practices.