Global-Local Tree Search for Language Guided 3D Scene Generation

Wei Deng, Mengshi Qi, Huadong Ma

2025-03-25

Summary

This paper is about using AI to automatically create 3D scenes based on text descriptions, like designing the layout of a room.

What's the problem?

It's hard for AI to create realistic and logical 3D scenes because it needs to understand spatial relationships and common-sense rules about how objects are placed.

What's the solution?

The researchers developed a new method that uses a tree-like structure to explore different arrangements of objects in the scene, breaking down the problem into smaller, more manageable steps. To choose object positions, it overlays a grid of distinct emojis on a top-down view of the room and asks a vision-language model to name the emoji where each object should go.

Why it matters?

This work matters because it can automate the creation of 3D environments, which is useful for things like video games, virtual reality, and architectural design.

Abstract

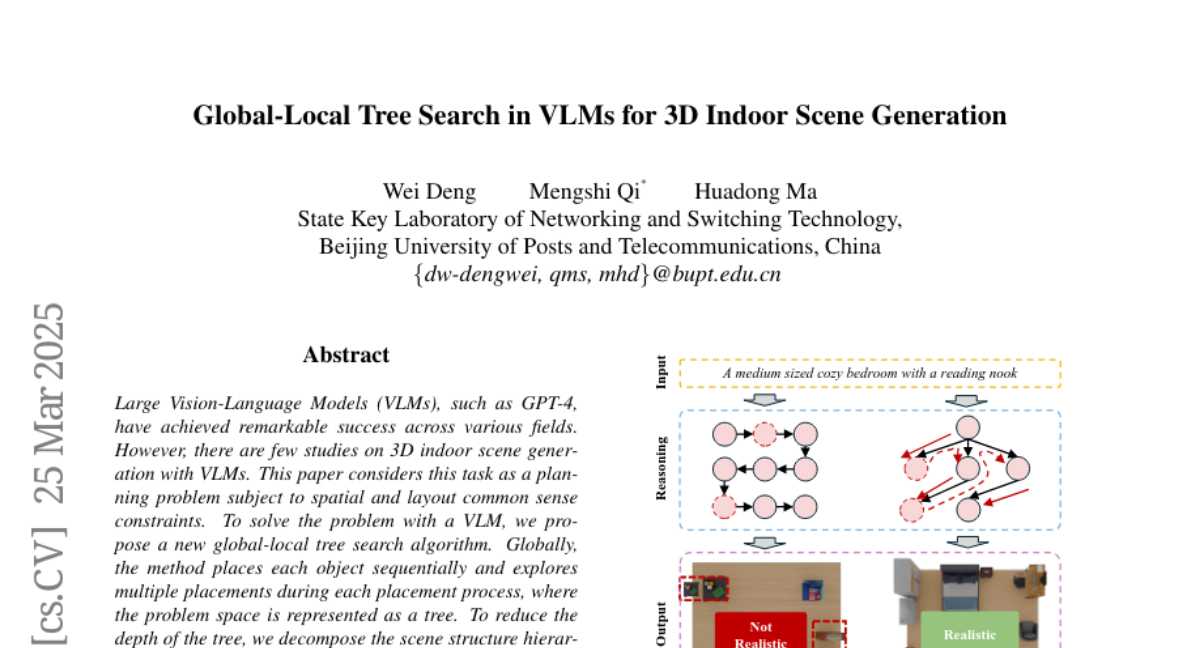

Large Vision-Language Models (VLMs), such as GPT-4, have achieved remarkable success across various fields. However, there are few studies on 3D indoor scene generation with VLMs. This paper considers this task as a planning problem subject to spatial and layout common sense constraints. To solve the problem with a VLM, we propose a new global-local tree search algorithm. Globally, the method places each object sequentially and explores multiple placements during each placement process, where the problem space is represented as a tree. To reduce the depth of the tree, we decompose the scene structure hierarchically, i.e., room level, region level, floor object level, and supported object level. The algorithm independently generates the floor objects in different regions and the supported objects placed on different floor objects. Locally, we also decompose the sub-task, the placement of each object, into multiple steps. The algorithm then searches the tree of the problem space. To leverage the VLM to produce positions of objects, we discretize the top-down view space as a dense grid and fill each cell with diverse emojis to make the cells distinct. We prompt the VLM with the emoji grid, and the VLM produces a reasonable location for the object by describing the position with the names of emojis. The quantitative and qualitative experimental results show that our approach generates more plausible 3D scenes than state-of-the-art approaches. Our source code is available at https://github.com/dw-dengwei/TreeSearchGen.
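
The emoji-grid idea in the abstract can be illustrated with a minimal sketch. It is not taken from the authors' repository: the function names (`emoji_pool`, `build_grid`, `cell_center`), the choice of the animal emoji block, the text rendering of the grid, and the room dimensions are all assumptions made for illustration. The sketch fills each cell of a top-down grid with a distinct emoji, then maps an emoji name (as a VLM might return it) back to the metric center of that cell.

```python
import unicodedata


def emoji_pool(start=0x1F400, end=0x1F43E):
    """Return (emoji, lowercase Unicode name) pairs from a code point range.

    Assumption: the animal block is used only because its names are short
    and unique; the paper does not say which emojis it uses.
    """
    pool = []
    for code_point in range(start, end + 1):
        char = chr(code_point)
        name = unicodedata.name(char, "")
        if name:  # skip code points unknown to this Python build
            pool.append((char, name.lower()))
    return pool


def build_grid(rows, cols):
    """Fill a rows x cols grid with distinct emojis.

    Returns the grid as text plus a lookup from emoji name to (row, col).
    """
    pool = emoji_pool()
    if rows * cols > len(pool):
        raise ValueError("not enough distinct emojis for this grid size")
    cells = {}
    lines = []
    for r in range(rows):
        row_chars = []
        for c in range(cols):
            char, name = pool[r * cols + c]
            cells[name] = (r, c)
            row_chars.append(char)
        lines.append(" ".join(row_chars))
    return "\n".join(lines), cells


def cell_center(name, cells, rows, cols, room_width, room_depth):
    """Convert an emoji name into the (x, y) center of its cell in meters."""
    r, c = cells[name.strip().lower()]
    x = (c + 0.5) * room_width / cols
    y = (r + 0.5) * room_depth / rows
    return x, y


if __name__ == "__main__":
    grid_text, cells = build_grid(rows=4, cols=5)
    print(grid_text)
    # Hypothetical VLM answer: the model names the emoji for the chosen cell.
    print(cell_center("rat", cells, 4, 5, room_width=5.0, room_depth=4.0))
```

Naming a cell by its emoji turns position prediction into a choice among discrete, visually distinct labels rather than the regression of raw coordinates. A denser grid gives finer positions but needs a larger pool of distinct emojis.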