Goal Alignment in LLM-Based User Simulators for Conversational AI

Shuhaib Mehri, Xiaocheng Yang, Takyoung Kim, Gokhan Tur, Shikib Mehri, Dilek Hakkani-Tür

2025-07-29

Summary

This paper introduces User Goal State Tracking (UGST), a framework that helps LLM-based user simulators stay focused on what the user wants to achieve during a conversation, making their behavior more goal-aligned.

What's the problem?

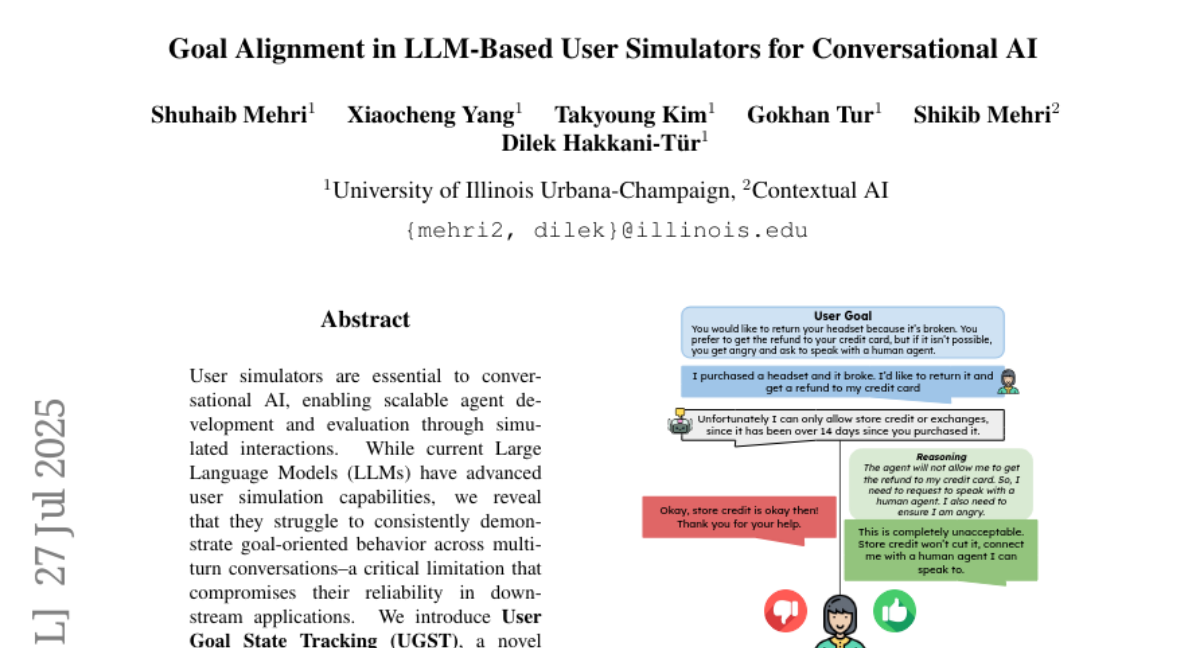

Current LLM-based user simulators often lose track of their goals over long conversations, so their responses drift away from, or fail to achieve, the user’s main objectives. This makes the simulated interactions less reliable and less useful.

What's the solution?

UGST solves this by breaking the user’s goal into smaller parts and tracking the progress of each part in real time during the conversation. The model is trained to use this tracking information to generate responses that better align with the user’s goals. The approach also includes methods to fine-tune and improve the simulator using a three-stage process that encourages clear reasoning and continuous goal tracking.
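To make the tracking idea concrete, here is a minimal sketch of a goal state that splits a goal into parts and records the progress of each one. It is an illustration under stated assumptions, not the paper's implementation: the `GoalState` class, the `Status` labels, and the `as_prompt` helper are hypothetical names, and the summary does not say how UGST actually decides that a part has been completed.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    # Hypothetical status labels; the summary does not name the real ones.
    PENDING = "pending"
    DONE = "done"


@dataclass
class GoalState:
    """Tracks the progress of each part of a user's goal (illustrative only)."""

    # Maps each goal part (a short description) to its current status.
    parts: dict = field(default_factory=dict)

    @classmethod
    def from_parts(cls, descriptions):
        # Every part starts out as pending.
        return cls({d: Status.PENDING for d in descriptions})

    def mark_done(self, description):
        # Update one part after a turn; unknown parts are rejected.
        if description not in self.parts:
            raise KeyError(description)
        self.parts[description] = Status.DONE

    def remaining(self):
        # Parts the simulator still needs to pursue, in original order.
        return [d for d, s in self.parts.items() if s is Status.PENDING]

    def as_prompt(self):
        # Render the state as text that could be given to the simulator
        # before it writes its next turn (an assumed usage).
        return "\n".join(f"- [{s.value}] {d}" for d, s in self.parts.items())


state = GoalState.from_parts(["book a flight to Boston", "request an aisle seat"])
state.mark_done("book a flight to Boston")
print(state.as_prompt())
```

In this sketch the state is updated once per turn and rendered as plain text, so a simulator could read which parts are still open before responding.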

Why does it matter?

This matters because better goal alignment in user simulators leads to more realistic and effective conversational AI systems. These improved simulators can help build smarter chatbots and virtual assistants that understand and achieve what users want more reliably.

Abstract

A novel framework, User Goal State Tracking (UGST), is introduced to improve goal-aligned behavior in user simulators for conversational AI, demonstrating significant improvements in goal alignment across benchmarks.