GPS as a Control Signal for Image Generation

Chao Feng, Ziyang Chen, Aleksander Holynski, Alexei A. Efros, Andrew Owens

2025-01-22

Summary

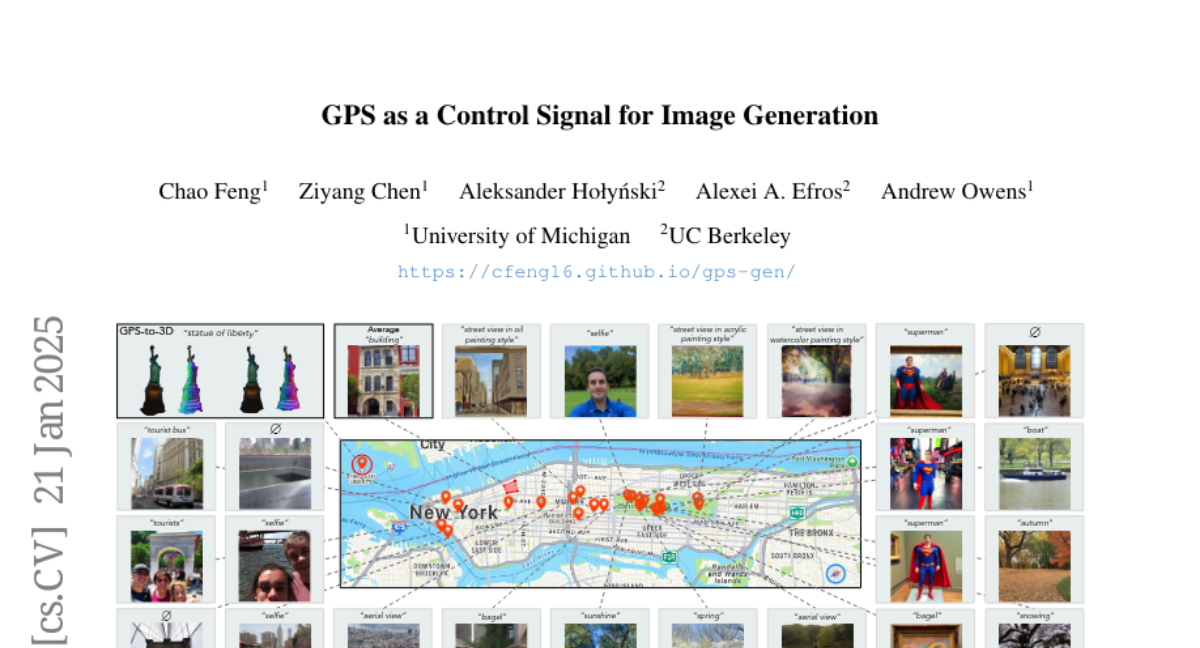

This paper talks about a new way to create realistic images of different places using GPS information. The researchers developed an AI system that can generate pictures of specific locations, like neighborhoods or landmarks, just by using GPS coordinates and text descriptions.

What's the problem?

Current AI systems that generate images often struggle to create accurate and detailed pictures of specific locations. They might mix up the appearance of different neighborhoods or landmarks within a city. This makes it hard to use AI for tasks that need a good understanding of how places look from different viewpoints.

What's the solution?

The researchers created a special AI model that learns from GPS tags in photo data. They trained this model to understand how images change across different locations in a city. The model can generate images based on both GPS coordinates and text descriptions. This allows it to create pictures that show the unique features of different areas, like parks or famous buildings. They also figured out how to use this 2D image generation system to create 3D models of places, which is pretty impressive.

Why it matters?

This matters because it could change how we use AI in many fields. For example, it could help create virtual tours of cities, improve map applications, or assist in urban planning. It could also be useful for making more realistic video games or movies set in real places. The ability to generate accurate 3D models from 2D images and GPS data could be especially valuable for fields like architecture or archaeology. Overall, this technology opens up new possibilities for how we can visualize and interact with geographic information using AI.

Abstract

We show that the GPS tags contained in photo metadata provide a useful control signal for image generation. We train GPS-to-image models and use them for tasks that require a fine-grained understanding of how images vary within a city. In particular, we train a diffusion model to generate images conditioned on both GPS and text. The learned model generates images that capture the distinctive appearance of different neighborhoods, parks, and landmarks. We also extract 3D models from 2D GPS-to-image models through score distillation sampling, using GPS conditioning to constrain the appearance of the reconstruction from each viewpoint. Our evaluations suggest that our GPS-conditioned models successfully learn to generate images that vary based on location, and that GPS conditioning improves estimated 3D structure.