Group-robust Machine Unlearning

Thomas De Min, Subhankar Roy, Stéphane Lathuilière, Elisa Ricci, Massimiliano Mancini

2025-03-17

Summary

This paper addresses a problem in machine learning where models unfairly perform worse on certain groups after 'forgetting' specific data.

What's the problem?

When machine learning models are asked to 'unlearn' data, they might disproportionately hurt the performance for specific groups within the data, especially if the data to be unlearned is mostly from one group. This creates unfairness.

What's the solution?

The researchers propose a method called MIU (Mutual Information-aware Machine Unlearning) that minimizes the connection between the model's features and information about which group a data point belongs to. This helps the model forget data without significantly harming the performance on any particular group. They also use a technique called sample distribution reweighting.

Why it matters?

This work matters because it helps ensure that machine learning models are fair and don't discriminate against specific groups when asked to 'forget' certain information. This is important for building trustworthy AI systems.

Abstract

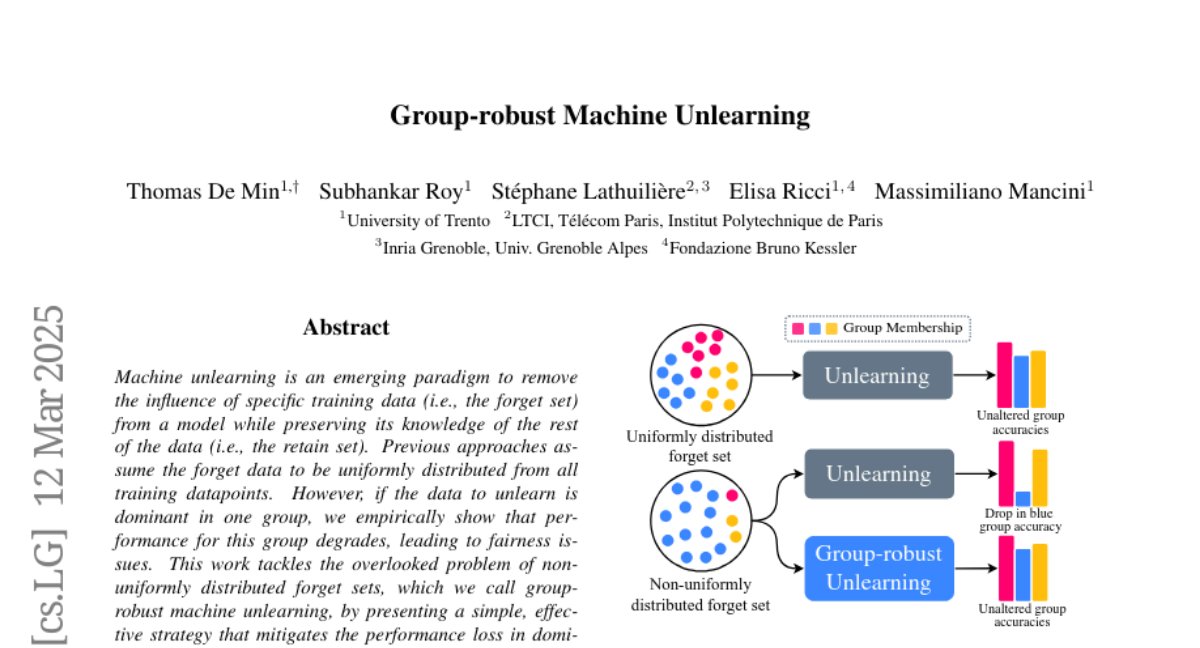

Machine unlearning is an emerging paradigm to remove the influence of specific training data (i.e., the forget set) from a model while preserving its knowledge of the rest of the data (i.e., the retain set). Previous approaches assume the forget data to be uniformly distributed from all training datapoints. However, if the data to unlearn is dominant in one group, we empirically show that performance for this group degrades, leading to fairness issues. This work tackles the overlooked problem of non-uniformly distributed forget sets, which we call group-robust machine unlearning, by presenting a simple, effective strategy that mitigates the performance loss in dominant groups via sample distribution reweighting. Moreover, we present MIU (Mutual Information-aware Machine Unlearning), the first approach for group robustness in approximate machine unlearning. MIU minimizes the mutual information between model features and group information, achieving unlearning while reducing performance degradation in the dominant group of the forget set. Additionally, MIU exploits sample distribution reweighting and mutual information calibration with the original model to preserve group robustness. We conduct experiments on three datasets and show that MIU outperforms standard methods, achieving unlearning without compromising model robustness. Source code available at https://github.com/tdemin16/group-robust_machine_unlearning.