HalluSegBench: Counterfactual Visual Reasoning for Segmentation Hallucination Evaluation

Xinzhuo Li, Adheesh Juvekar, Xingyou Liu, Muntasir Wahed, Kiet A. Nguyen, Ismini Lourentzou

2025-07-04

Summary

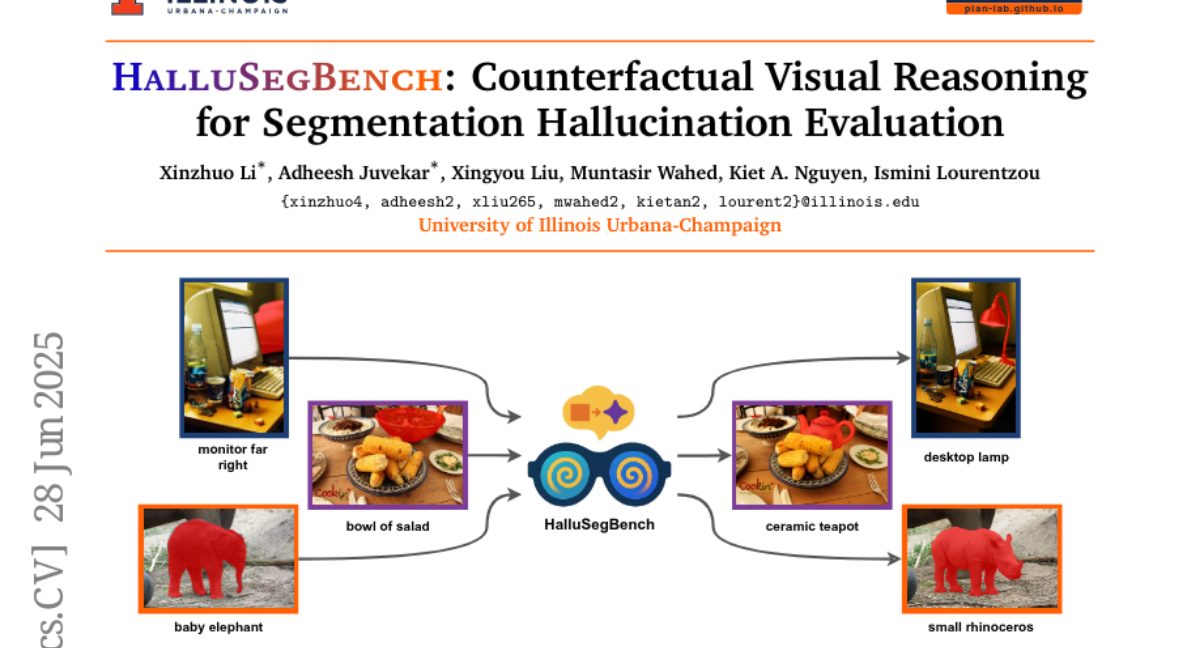

This paper talks about HalluSegBench, a new way to test how well vision-language segmentation models avoid hallucinating, which means incorrectly identifying objects that aren't really there in an image. It uses counterfactual visual reasoning by swapping objects in images to see if models really understand what they see.

What's the problem?

The problem is that current models sometimes mistakenly label parts of an image as objects that don't exist, or they confuse similar objects, causing errors in segmentation. Existing tests mostly focus on checking label mistakes without changing the visual content, so they miss important errors related to visual understanding.

What's the solution?

The researchers created HalluSegBench, a benchmark with pairs of images where a specific object is replaced with a similar but different object while keeping everything else the same. This controlled change tests if models rely on real visual clues or guess based on learned patterns. They developed new metrics to measure how sensitive models are to these changes and found that vision-driven hallucinations are more common than label mistakes.

Why it matters?

This matters because it helps identify and fix situations where AI models fail to accurately understand what they see, leading to better, more trustworthy models for tasks like image analysis, medical imaging, and autonomous systems.

Abstract

HalluSegBench evaluates vision-language segmentation models for hallucinations using counterfactual visual reasoning, revealing that vision-driven errors are more common than label-driven ones.