HLFormer: Enhancing Partially Relevant Video Retrieval with Hyperbolic Learning

Li Jun, Wang Jinpeng, Tan Chaolei, Lian Niu, Chen Long, Zhang Min, Wang Yaowei, Xia Shu-Tao, Chen Bin

2025-07-25

Summary

This paper presents HLFormer, a framework that improves how computers retrieve videos that are only partly relevant to a text query. It uses hyperbolic learning, which represents data in a curved (hyperbolic) space instead of an ordinary flat one, to better capture the relationships between parts of a video and the text.
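To make "hyperbolic learning" concrete, here is a minimal sketch of the Lorentz (hyperboloid) model of hyperbolic space, the model the "Lorentz" attention blocks in the abstract refer to. It assumes curvature -c with c = 1 by default and stores the "time" coordinate first. The function names are illustrative and are not taken from HLFormer's code.

```python
import numpy as np

def lorentz_inner(x, y):
    """Lorentzian inner product <x, y>_L = -x0*y0 + sum_i xi*yi (time coordinate first)."""
    return -x[..., 0] * y[..., 0] + np.sum(x[..., 1:] * y[..., 1:], axis=-1)

def expmap0(v, c=1.0):
    """Map a Euclidean vector v (a tangent vector at the origin) onto the
    hyperboloid {x : <x, x>_L = -1/c, x0 > 0}."""
    sqrt_c = np.sqrt(c)
    norm = np.maximum(np.linalg.norm(v, axis=-1, keepdims=True), 1e-12)  # avoid 0/0
    space = np.sinh(sqrt_c * norm) * v / (sqrt_c * norm)
    time = np.sqrt(1.0 / c + np.sum(space ** 2, axis=-1, keepdims=True))
    return np.concatenate([time, space], axis=-1)

def lorentz_distance(x, y, c=1.0):
    """Geodesic distance on the hyperboloid: arccosh(-c * <x, y>_L) / sqrt(c)."""
    arg = np.maximum(-c * lorentz_inner(x, y), 1.0)  # clip rounding errors below 1
    return np.arccosh(arg) / np.sqrt(c)

# Usage: lift a feature vector and measure its distance from the origin.
v = np.array([0.3, -1.2, 0.5])
x = expmap0(v)
origin = expmap0(np.zeros(3))
print(lorentz_inner(x, x))          # stays on the hyperboloid: about -1
print(lorentz_distance(origin, x))  # equals the length of v (up to rounding)
```

A Euclidean feature vector is placed on the curved surface with `expmap0`, and distances are then measured along that surface; the distance from the origin equals the length of the original vector.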

What's the problem?

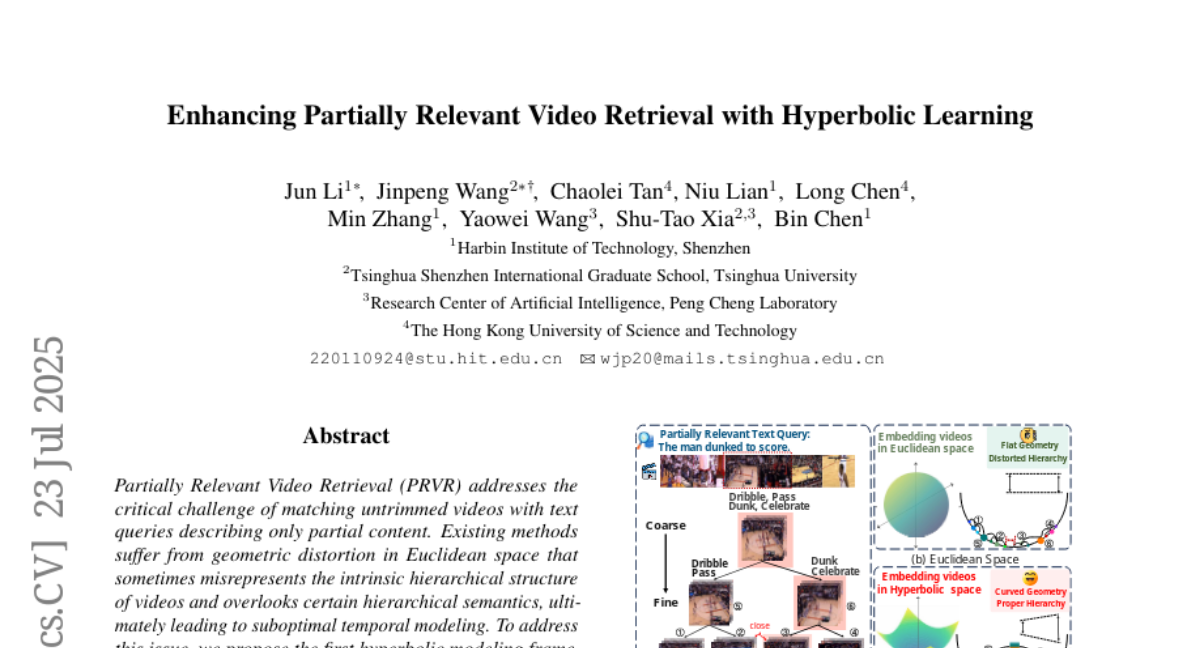

Matching videos to text is hard when the text describes only part of a video. Current methods usually represent videos and text in flat (Euclidean) space, which misses the layered, hierarchical structure of videos and of how a text relates to the video it partly describes. This leads to weaker matching.
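A short calculation shows why flat space struggles with hierarchies. The number of items in a tree grows exponentially with depth, but the circumference of a circle in flat space grows only linearly with its radius. In a hyperbolic plane of curvature -1 the circumference is 2π sinh(r), which grows exponentially, so each new level of a hierarchy has room to spread out. This sketch only compares those quantities to illustrate the general idea; it is not part of HLFormer.

```python
import math

def euclidean_circumference(r: float) -> float:
    """Circumference of a circle of radius r in flat (Euclidean) space."""
    return 2 * math.pi * r

def hyperbolic_circumference(r: float) -> float:
    """Circumference of a circle of radius r in the hyperbolic plane (curvature -1)."""
    return 2 * math.pi * math.sinh(r)

def tree_nodes_at_depth(branching: int, depth: int) -> int:
    """Number of nodes at a given depth of a complete tree."""
    return branching ** depth

# Compare how fast each quantity grows with radius/depth.
for r in range(1, 6):
    print(
        f"r={r}: binary-tree nodes={tree_nodes_at_depth(2, r):3d}  "
        f"euclidean={euclidean_circumference(r):6.1f}  "
        f"hyperbolic={hyperbolic_circumference(r):7.1f}"
    )
```

At r = 5 the hyperbolic circle is already roughly 15 times longer than the flat one, and the gap keeps widening, much like the node count of a tree.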

What's the solution?

The researchers created HLFormer, which combines attention in hyperbolic (Lorentz) space with attention in ordinary Euclidean space to capture a video's layered structure. It also adds a new loss function that preserves the partial order between text and video, treating a text query as describing a part of its video, which makes retrieval of partially relevant content more accurate.
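The hybrid attention idea can be sketched as follows, under heavy simplifying assumptions: there are no learned query/key/value projections or multiple heads; the Lorentz branch scores pairs by negative squared Lorentzian distance and aggregates with a Lorentzian centroid (a construction used in earlier fully hyperbolic networks); and the two branches are mixed with a fixed weight `alpha`. All function names and `alpha` are hypothetical. HLFormer's actual blocks and fusion step are not described in this summary and likely differ.

```python
import numpy as np

def lorentz_inner(x, y):
    """Lorentzian inner product (time coordinate first), over the last axis."""
    return -x[..., 0] * y[..., 0] + np.sum(x[..., 1:] * y[..., 1:], axis=-1)

def expmap0(v, c=1.0):
    """Euclidean vectors -> points on the hyperboloid of curvature -c."""
    sqrt_c = np.sqrt(c)
    norm = np.maximum(np.linalg.norm(v, axis=-1, keepdims=True), 1e-12)
    space = np.sinh(sqrt_c * norm) * v / (sqrt_c * norm)
    time = np.sqrt(1.0 / c + np.sum(space ** 2, axis=-1, keepdims=True))
    return np.concatenate([time, space], axis=-1)

def logmap0(x, c=1.0):
    """Points on the hyperboloid -> Euclidean (tangent) vectors; inverse of expmap0."""
    sqrt_c = np.sqrt(c)
    space = x[..., 1:]
    space_norm = np.maximum(np.linalg.norm(space, axis=-1, keepdims=True), 1e-12)
    dist = np.arccosh(np.maximum(sqrt_c * x[..., :1], 1.0)) / sqrt_c
    return dist * space / space_norm

def softmax(scores):
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    w = np.exp(scores)
    return w / w.sum(axis=-1, keepdims=True)

def euclidean_attention(h):
    """Plain scaled dot-product self-attention over clip features h: (n, d)."""
    return softmax(h @ h.T / np.sqrt(h.shape[-1])) @ h

def lorentz_attention(h, c=1.0):
    """Hypothetical Lorentz self-attention: lift to the hyperboloid, weight by
    negative squared Lorentzian distance, aggregate with a Lorentzian centroid."""
    x = expmap0(h, c)                                            # (n, d+1)
    inner = -np.outer(x[:, 0], x[:, 0]) + x[:, 1:] @ x[:, 1:].T  # pairwise <xi, xj>_L
    sq_dist = -2.0 / c - 2.0 * inner                             # squared Lorentzian distance
    agg = softmax(-sq_dist) @ x                                  # weighted sum, still timelike
    # Rescale so the centroid lies back on the hyperboloid: <mu, mu>_L = -1/c.
    mu = agg / (np.sqrt(c) * np.sqrt(np.abs(lorentz_inner(agg, agg)))[:, None])
    return logmap0(mu, c)                                        # back to Euclidean space

def hybrid_attention(h, alpha=0.5, c=1.0):
    """Mix both branches with a fixed, hypothetical weight alpha."""
    return alpha * euclidean_attention(h) + (1.0 - alpha) * lorentz_attention(h, c)

# Usage: 5 clip features of dimension 8.
clips = np.random.default_rng(0).normal(size=(5, 8))
fused = hybrid_attention(clips)
print(fused.shape)  # (5, 8)
```

The Lorentz branch attends on the hyperboloid and maps its result back with `logmap0`, so both branches return ordinary vectors that can be mixed directly.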

Why does it matter?

This matters because it helps systems find long videos in which only a portion matches a text query, which could improve search and recommendation on video platforms.

Abstract

HLFormer, a hyperbolic modeling framework, improves partially relevant video retrieval by integrating Lorentz and Euclidean attention blocks in hybrid spaces and enforcing partial order preservation between text and video.
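Partial order preservation can be illustrated with entailment cones in the Lorentz model, in the style of MERU (Desai et al., 2023): a cone is rooted at one embedding, and the loss is zero only when the other embedding falls inside it. This is a swapped-in technique used for illustration. The summary says only that HLFormer enforces partial order preservation, so the cone formulas, the width constant `k`, and the choice to root the cone at the video embedding (so the text should lie inside the video's cone) are all assumptions.

```python
import numpy as np

def lorentz_inner(x, y):
    """Lorentzian inner product (time coordinate first), over the last axis."""
    return -x[..., 0] * y[..., 0] + np.sum(x[..., 1:] * y[..., 1:], axis=-1)

def expmap0(v, c=1.0):
    """Euclidean vectors -> points on the hyperboloid of curvature -c."""
    sqrt_c = np.sqrt(c)
    norm = np.maximum(np.linalg.norm(v, axis=-1, keepdims=True), 1e-12)
    space = np.sinh(sqrt_c * norm) * v / (sqrt_c * norm)
    time = np.sqrt(1.0 / c + np.sum(space ** 2, axis=-1, keepdims=True))
    return np.concatenate([time, space], axis=-1)

def half_aperture(x, c=1.0, k=0.1):
    """Half-width of the cone rooted at x (k is a hypothetical width constant).
    Points farther from the origin get narrower cones."""
    space_norm = np.maximum(np.linalg.norm(x[..., 1:], axis=-1), 1e-12)
    return np.arcsin(np.clip(2.0 * k / (np.sqrt(c) * space_norm), -1.0, 1.0))

def exterior_angle(x, y, c=1.0, eps=1e-8):
    """Angle at x between the outward extension of the origin->x geodesic and
    the geodesic from x to y (0 when y lies straight 'beyond' x)."""
    cxy = c * lorentz_inner(x, y)
    num = y[..., 0] + x[..., 0] * cxy
    den = np.linalg.norm(x[..., 1:], axis=-1) * np.sqrt(np.maximum(cxy ** 2 - 1.0, eps))
    return np.arccos(np.clip(num / np.maximum(den, eps), -1.0, 1.0))

def partial_order_loss(video, text, c=1.0, k=0.1):
    """Assumed MERU-style form: zero when the text embedding lies inside the cone
    rooted at the video embedding, otherwise the angle by which it falls outside."""
    return np.maximum(0.0, exterior_angle(video, text, c) - half_aperture(video, c, k))

# Usage with toy 2-D features (hypothetical values).
video = expmap0(np.array([1.0, 0.0]))
text_inside = expmap0(np.array([2.0, 0.0]))    # farther along the same direction
text_outside = expmap0(np.array([-1.0, 0.0]))  # opposite direction
print(partial_order_loss(video, text_inside))   # 0.0
print(partial_order_loss(video, text_outside))  # positive
```

Because cones narrow as their root moves away from the origin, this kind of loss pushes more general embeddings toward the origin and more specific ones outward, which is how hyperbolic models typically encode a hierarchy.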