HoT: Highlighted Chain of Thought for Referencing Supporting Facts from Inputs

Tin Nguyen, Logan Bolton, Mohammad Reza Taesiri, Anh Totti Nguyen

2025-03-06

Summary

This paper talks about a new method called Highlighted Chain-of-Thought Prompting (HoT) that helps AI language models give more accurate answers and makes it easier for people to check if those answers are correct

What's the problem?

AI language models sometimes make up false information, and it's hard for people to tell which parts of an AI's answer are true and which are made up. This can lead to people making decisions based on wrong information

What's the solution?

The researchers created HoT, which makes the AI highlight the facts it uses from the original question in its answer. This is like showing its work in math class. They tested HoT on 17 different types of tasks and found it often worked better than previous methods. When they asked people to check the AI's answers, the highlights helped them spot correct answers faster

Why it matters?

This matters because it could make AI language models more trustworthy and useful in real-world situations. By making it easier for people to verify AI answers, HoT could help prevent the spread of false information and make AI a more reliable tool for decision-making in fields like education, research, and business

Abstract

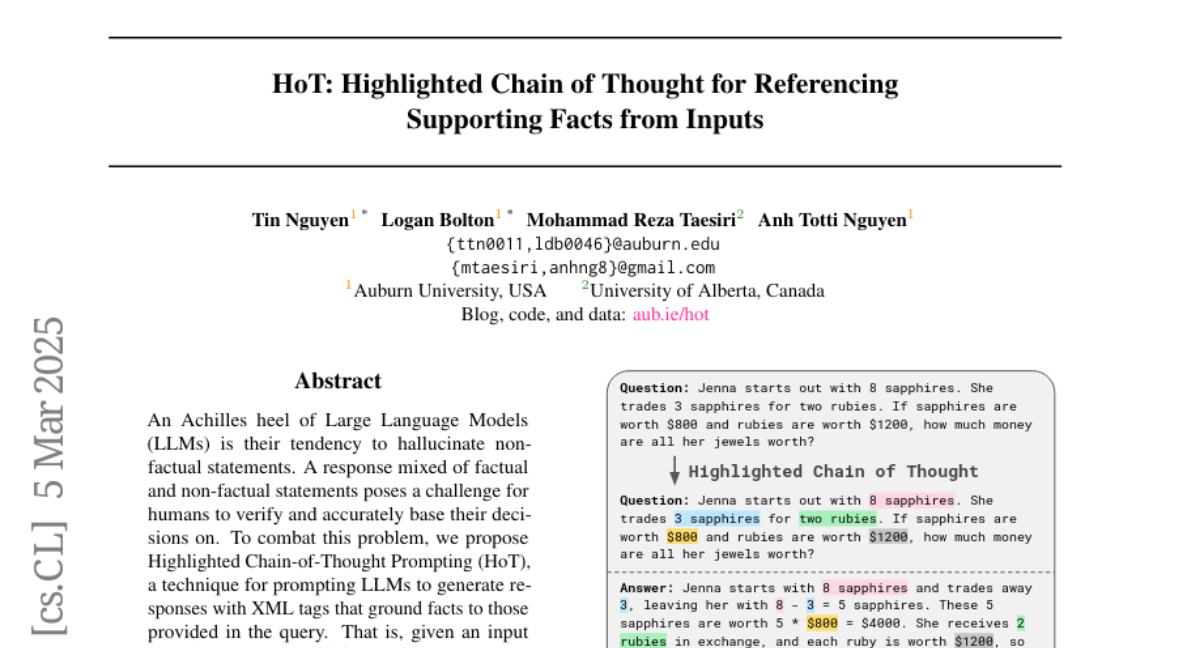

An Achilles heel of Large Language Models (LLMs) is their tendency to hallucinate non-factual statements. A response mixed of factual and non-factual statements poses a challenge for humans to verify and accurately base their decisions on. To combat this problem, we propose Highlighted Chain-of-Thought Prompting (HoT), a technique for prompting LLMs to generate responses with XML tags that ground facts to those provided in the query. That is, given an input question, LLMs would first re-format the question to add XML tags highlighting key facts, and then, generate a response with highlights over the facts referenced from the input. Interestingly, in few-shot settings, HoT outperforms vanilla chain of thought prompting (CoT) on a wide range of 17 tasks from arithmetic, reading comprehension to logical reasoning. When asking humans to verify LLM responses, highlights help time-limited participants to more accurately and efficiently recognize when LLMs are correct. Yet, surprisingly, when LLMs are wrong, HoTs tend to make users believe that an answer is correct.