How Do Large Vision-Language Models See Text in Image? Unveiling the Distinctive Role of OCR Heads

Ingeol Baek, Hwan Chang, Sunghyun Ryu, Hwanhee Lee

2025-05-23

Summary

This paper talks about how large vision-language models, which are AIs that can understand both pictures and words, actually read and make sense of text that appears inside images.

What's the problem?

The problem is that it's not clear how these models process and understand written words that are part of a picture, which is important for tasks like reading signs, labels, or documents in photos.

What's the solution?

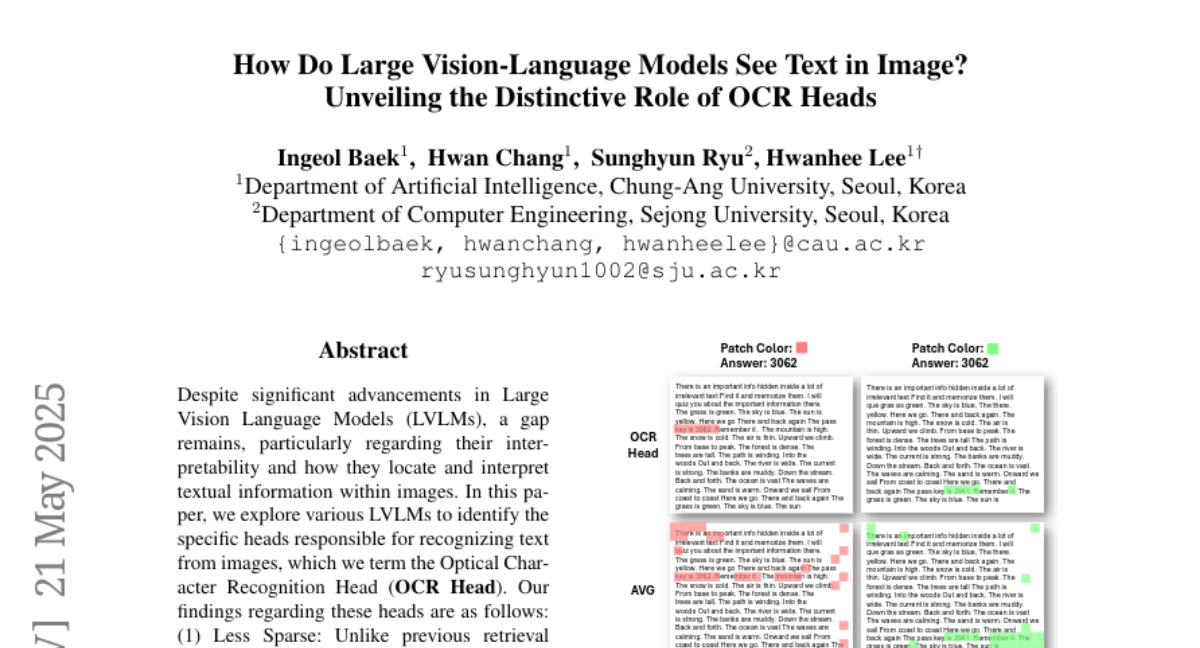

The researchers focused on something called OCR Heads within these models, which are special parts designed to recognize text. They studied how these OCR Heads work and found out that they have unique ways of activating and interpreting text in images, which is different from how the rest of the model works.

Why it matters?

This matters because understanding exactly how AIs read text in images can help improve their accuracy and reliability, making them more useful for things like helping visually impaired people, reading documents, or even translating signs in different languages.

Abstract

The study identifies and analyzes OCR Heads within Large Vision Language Models, revealing their unique activation patterns and roles in interpreting text within images.