How Well Does GPT-4o Understand Vision? Evaluating Multimodal Foundation Models on Standard Computer Vision Tasks

Rahul Ramachandran, Ali Garjani, Roman Bachmann, Andrei Atanov, Oğuzhan Fatih Kar, Amir Zamir

2025-07-07

Summary

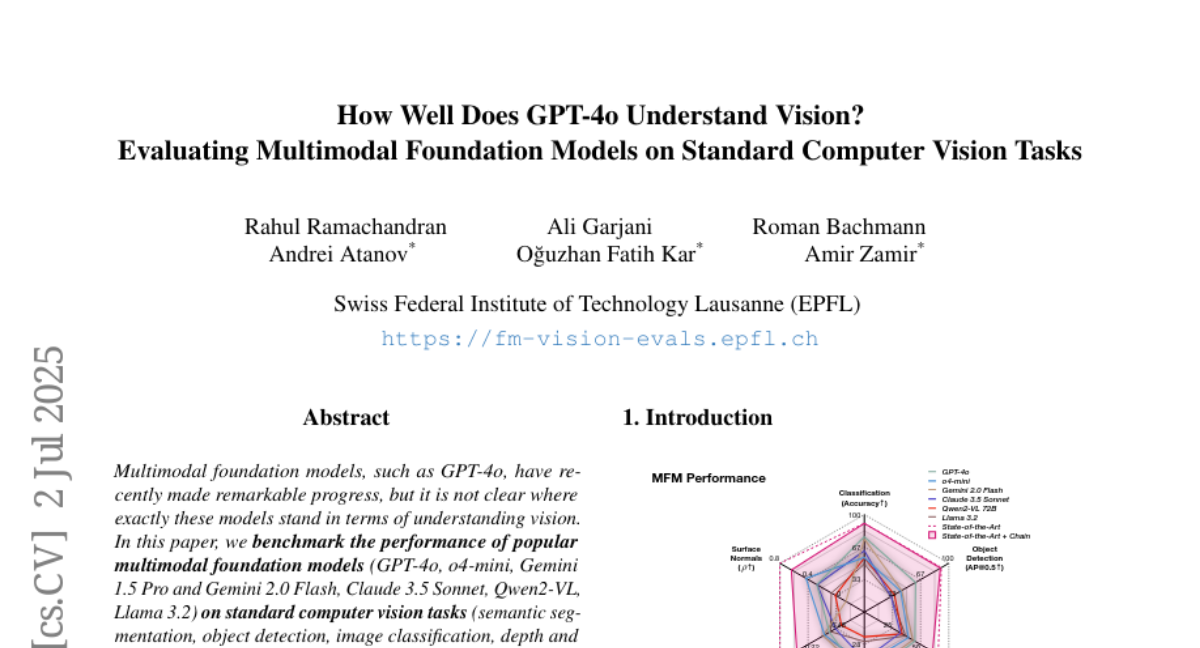

This paper talks about how well popular multimodal foundation models, like GPT-4o, understand vision tasks when they are adapted using a technique called prompt chaining. These models can perform reasonably well on standard computer vision tasks like image recognition and segmentation.

What's the problem?

The problem is that while these large models can handle both text and images, they still do not match the performance of specialized computer vision models that are designed only for vision tasks. Additionally, they sometimes have strange behaviors in image generation and reasoning.

What's the solution?

The researchers evaluated the performance of these foundation models on common vision benchmarks by carefully chaining prompts that guide the models to use visual information effectively. They analyzed their strengths and weaknesses compared to specialized models, revealing the areas where the multimodal models struggle.

Why it matters?

This matters because understanding the capabilities and limits of multimodal models helps guide future improvements. It shows how far general AI models have come in handling vision tasks and highlights the need for more research to close the gap with specialized vision systems.

Abstract

Popular multimodal foundation models perform respectably across various computer vision tasks when adapted through prompt chaining, though they fall short of specialist models and exhibit quirks in image generation.