Human Motion Unlearning

Edoardo De Matteis, Matteo Migliarini, Alessio Sampieri, Indro Spinelli, Fabio Galasso

2025-03-25

Summary

This paper is about making AI models that generate animations of people from text unable to produce harmful or inappropriate motions, while keeping their ability to generate everything else.

What's the problem?

AI can generate realistic human motions from text, but it can also produce animations of violence or other toxic behavior. This is hard to prevent because a toxic motion can be requested explicitly in a prompt or assembled implicitly by combining motions that are safe on their own (for example, "kicking" can be described as "loading and swinging a leg").

What's the solution?

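The researchers built the first benchmark for motion unlearning by filtering toxic motions out of two large text-to-motion datasets, HumanML3D and Motion-X. They adapted existing image unlearning techniques to serve as baselines, and they developed a new training-free method called Latent Code Replacement (LCR) that prevents the model from generating these animations.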

Why does it matter?

This work matters because it helps ensure that AI-generated animations are safe and appropriate, which is important for applications like video games, virtual reality, and educational content.

Abstract

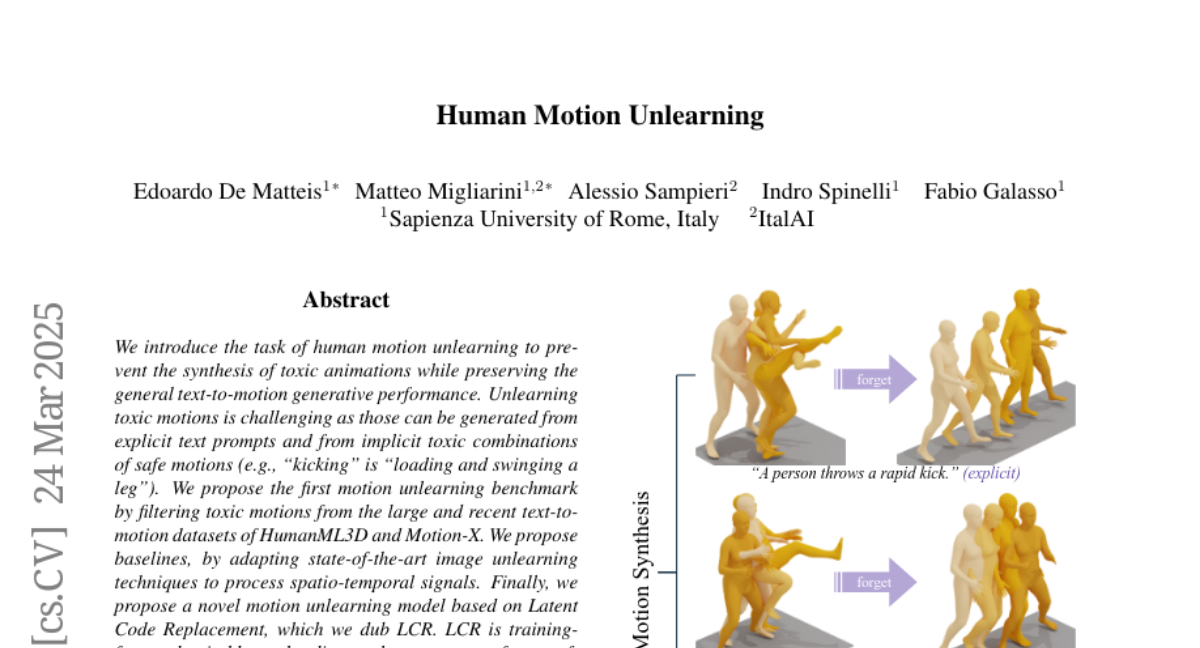

We introduce the task of human motion unlearning to prevent the synthesis of toxic animations while preserving the general text-to-motion generative performance. Unlearning toxic motions is challenging because they can be generated both from explicit text prompts and from implicit toxic combinations of safe motions (e.g., "kicking" is "loading and swinging a leg"). We propose the first motion unlearning benchmark by filtering toxic motions from the large and recent text-to-motion datasets HumanML3D and Motion-X. We propose baselines by adapting state-of-the-art image unlearning techniques to process spatio-temporal signals. Finally, we propose a novel motion unlearning model based on Latent Code Replacement, which we dub LCR. LCR is training-free and suited to the discrete latent spaces of state-of-the-art text-to-motion diffusion models. LCR is simple and consistently outperforms baselines qualitatively and quantitatively. Project page: https://www.pinlab.org/hmu
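To make the idea of replacing codes in a discrete latent space concrete, here is a minimal sketch. It is not the authors' implementation: the abstract does not say how toxic codes are identified or which codes replace them. The sketch assumes a hypothetical codebook of embedding vectors, an already-known set of toxic code indices, and, purely as an illustrative choice, replacement by the nearest safe code. The names `build_remap` and `replace_codes` are invented for this example.

```python
import numpy as np

def build_remap(codebook: np.ndarray, toxic_ids: set) -> np.ndarray:
    """Build a lookup table over code indices.

    Safe codes map to themselves. Each toxic code maps to the nearest
    safe code in embedding space (an assumption made for illustration).
    """
    num_codes = codebook.shape[0]
    remap = np.arange(num_codes)
    safe_ids = np.array([i for i in range(num_codes) if i not in toxic_ids])
    if safe_ids.size == 0:
        raise ValueError("at least one safe code is required")
    for t in toxic_ids:
        # Euclidean distance from the toxic code to every safe code.
        dists = np.linalg.norm(codebook[safe_ids] - codebook[t], axis=1)
        remap[t] = safe_ids[np.argmin(dists)]
    return remap

def replace_codes(tokens: np.ndarray, remap: np.ndarray) -> np.ndarray:
    """Apply the lookup table to a sequence of code indices."""
    return remap[tokens]

# Toy example: four 2-D codes, with code 2 assumed to be toxic.
codebook = np.array([[0.0, 0.0], [1.0, 0.0], [5.0, 5.0], [5.0, 6.0]])
remap = build_remap(codebook, toxic_ids={2})
tokens = np.array([0, 2, 1, 2, 3])
cleaned = replace_codes(tokens, remap)  # -> [0, 3, 1, 3, 3]
```

Because the change is only a lookup table applied to code indices, no model weights are updated, which is consistent with the abstract's description of LCR as training-free.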