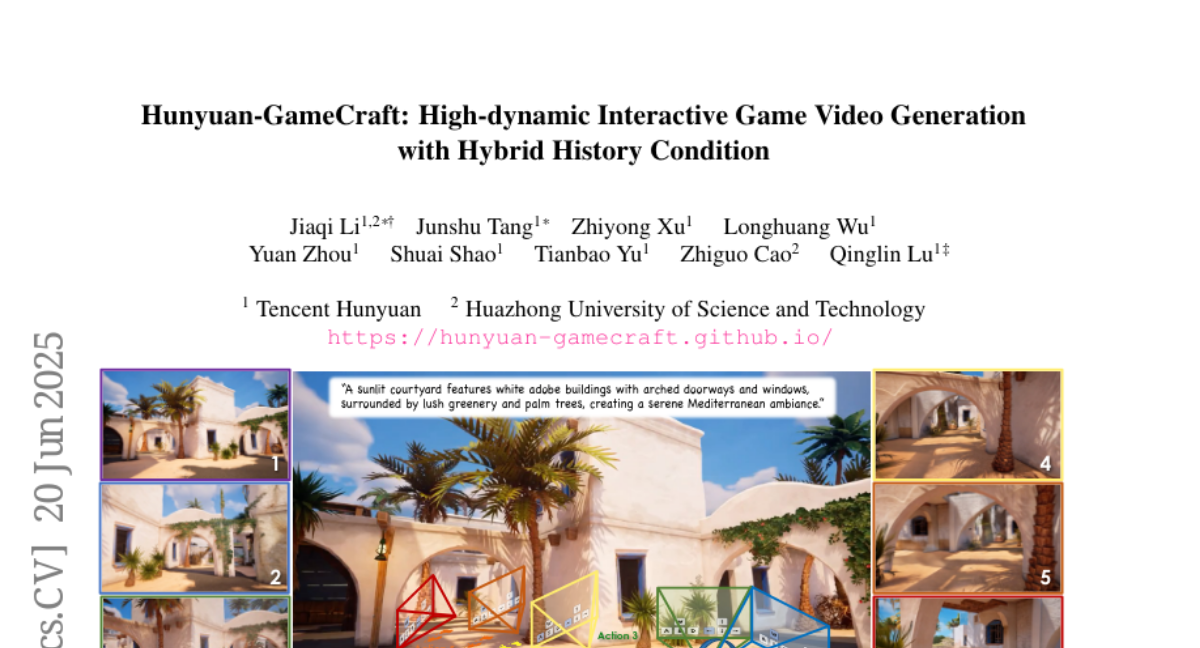

Hunyuan-GameCraft: High-dynamic Interactive Game Video Generation with Hybrid History Condition

Jiaqi Li, Junshu Tang, Zhiyong Xu, Longhuang Wu, Yuan Zhou, Shuai Shao, Tianbao Yu, Zhiguo Cao, Qinglin Lu

2025-06-23

Summary

This paper presents Hunyuan-GameCraft, a system that generates high-quality, interactive game videos in which players control movement and actions with standard keyboard and mouse inputs.

What's the problem?

Current game video generation methods struggle to produce dynamic, realistic motion, to stay consistent over long videos, and to run efficiently enough for real-time use.

What's the solution?

The researchers unify standard keyboard and mouse inputs into a shared camera representation space, which allows smooth interpolation between camera and movement operations. A hybrid history-conditioned training strategy extends videos autoregressively while preserving game scene information, so scenes stay consistent over time, and model distillation reduces computational overhead so the system is fast and light enough for real-time gameplay. The model is trained on over one million gameplay recordings from more than 100 AAA games to ensure realism and diversity.
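To make the first idea concrete, here is a minimal Python sketch of mapping discrete key presses and continuous mouse motion into one shared, continuous camera-action vector, plus linear interpolation between consecutive actions. The key table, the names `encode_input` and `interpolate`, the sensitivity value, and the velocity-plus-yaw/pitch parameterization are all hypothetical illustrations chosen for clarity; they are not the paper's actual encoding or API.

```python
import math
from dataclasses import dataclass

# Hypothetical key-to-direction table in the camera frame (x: right, y: up, z: forward).
KEY_DIRECTIONS = {
    "w": (0.0, 0.0, 1.0),
    "s": (0.0, 0.0, -1.0),
    "a": (-1.0, 0.0, 0.0),
    "d": (1.0, 0.0, 0.0),
}


@dataclass
class CameraAction:
    """Hypothetical shared action: translation velocity plus rotation rates."""
    velocity: tuple[float, float, float]  # direction * speed, camera frame
    yaw: float    # radians per step (from horizontal mouse motion)
    pitch: float  # radians per step (from vertical mouse motion)


def encode_input(keys, mouse_dx, mouse_dy, speed=1.0, sensitivity=0.002):
    """Map pressed keys and mouse deltas into one continuous camera action."""
    pressed = [KEY_DIRECTIONS[k.lower()] for k in keys if k.lower() in KEY_DIRECTIONS]
    x = sum(d[0] for d in pressed)
    y = sum(d[1] for d in pressed)
    z = sum(d[2] for d in pressed)
    norm = math.sqrt(x * x + y * y + z * z)
    if norm > 0:
        # Normalize so diagonal movement (e.g. W+D) is not faster than straight movement.
        velocity = (speed * x / norm, speed * y / norm, speed * z / norm)
    else:
        # No keys, or opposite keys cancelling out: the camera does not translate.
        velocity = (0.0, 0.0, 0.0)
    # Screen y grows downward, so moving the mouse up pitches the camera up.
    return CameraAction(velocity, mouse_dx * sensitivity, -mouse_dy * sensitivity)


def _lerp(u, v, t):
    return u + (v - u) * t


def interpolate(a, b, t):
    """Linearly blend two actions (t in [0, 1]) so control changes are smooth."""
    return CameraAction(
        tuple(_lerp(u, v, t) for u, v in zip(a.velocity, b.velocity)),
        _lerp(a.yaw, b.yaw, t),
        _lerp(a.pitch, b.pitch, t),
    )


if __name__ == "__main__":
    forward = encode_input({"w"}, 0, 0)
    turn_right = encode_input({"w", "d"}, 100, 0)
    # Halfway between walking forward and strafing diagonally while turning.
    print(interpolate(forward, turn_right, 0.5))
```

Because keyboard and mouse inputs end up in the same continuous space, a transition such as "walk forward, then turn while strafing" becomes a simple blend between two vectors rather than a switch between unrelated discrete commands.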

Why does it matter?

It makes generating realistic, controllable game videos efficient, helping game developers, video creators, and designers produce immersive interactive content faster and at lower cost.

Abstract

Hunyuan-GameCraft is a novel framework for high-dynamic interactive video generation in game environments. It addresses limitations in dynamics, generality, and efficiency through a unified input representation, hybrid history-conditioned training, and model distillation.