HybriMoE: Hybrid CPU-GPU Scheduling and Cache Management for Efficient MoE Inference

Shuzhang Zhong, Yanfan Sun, Ling Liang, Runsheng Wang, Ru Huang, Meng Li

2025-04-09

Summary

This paper talks about HybriMoE, a smart system that helps AI models using Mixture of Experts (MoE) run faster by better using both computer processors (CPUs) and graphics cards (GPUs) together.

What's the problem?

Current AI systems using MoE struggle to run efficiently because they waste time moving data between computer parts and can't predict which specialized parts will be needed next.

What's the solution?

HybriMoE uses smart scheduling to balance work between CPUs and GPUs, predicts which parts to load next, and keeps frequently used parts ready to reduce delays.

Why it matters?

This makes AI tools like language translators or chatbots faster and more efficient, especially on regular computers or phones that have limited processing power.

Abstract

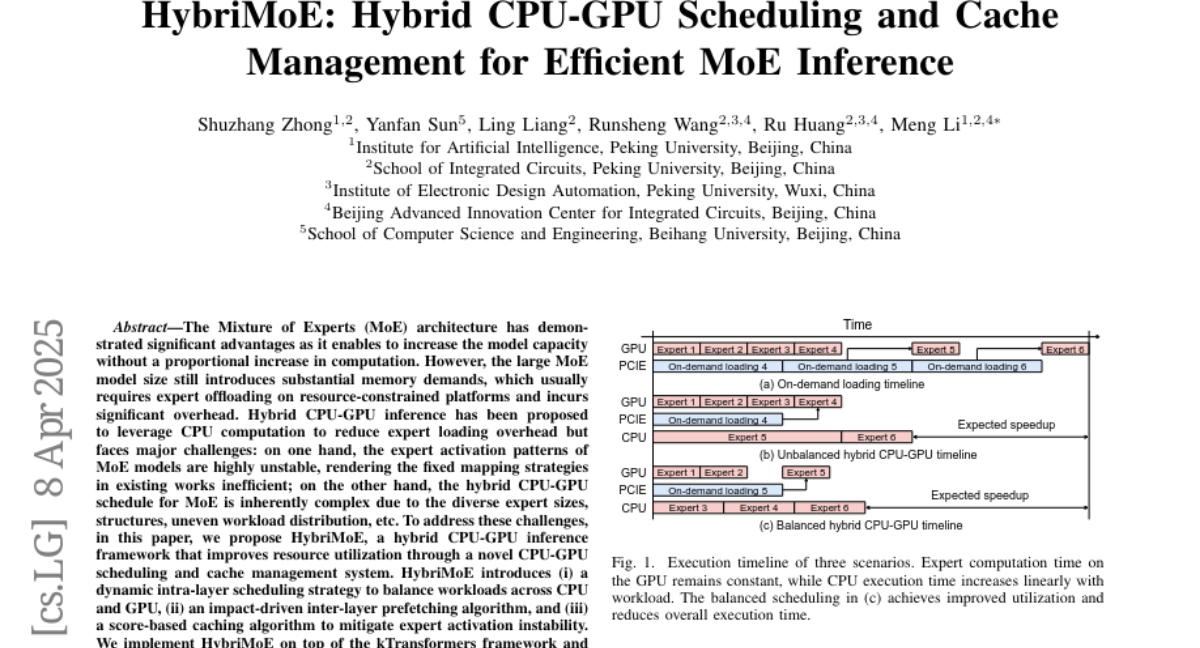

The Mixture of Experts (MoE) architecture has demonstrated significant advantages as it enables to increase the model capacity without a proportional increase in computation. However, the large MoE model size still introduces substantial memory demands, which usually requires expert offloading on resource-constrained platforms and incurs significant overhead. Hybrid CPU-GPU inference has been proposed to leverage CPU computation to reduce expert loading overhead but faces major challenges: on one hand, the expert activation patterns of MoE models are highly unstable, rendering the fixed mapping strategies in existing works inefficient; on the other hand, the hybrid CPU-GPU schedule for MoE is inherently complex due to the diverse expert sizes, structures, uneven workload distribution, etc. To address these challenges, in this paper, we propose HybriMoE, a hybrid CPU-GPU inference framework that improves resource utilization through a novel CPU-GPU scheduling and cache management system. HybriMoE introduces (i) a dynamic intra-layer scheduling strategy to balance workloads across CPU and GPU, (ii) an impact-driven inter-layer prefetching algorithm, and (iii) a score-based caching algorithm to mitigate expert activation instability. We implement HybriMoE on top of the kTransformers framework and evaluate it on three widely used MoE-based LLMs. Experimental results demonstrate that HybriMoE achieves an average speedup of 1.33times in the prefill stage and 1.70times in the decode stage compared to state-of-the-art hybrid MoE inference framework. Our code is available at: https://github.com/PKU-SEC-Lab/HybriMoE.