I-Con: A Unifying Framework for Representation Learning

Shaden Alshammari, John Hershey, Axel Feldmann, William T. Freeman, Mark Hamilton

2025-04-24

Summary

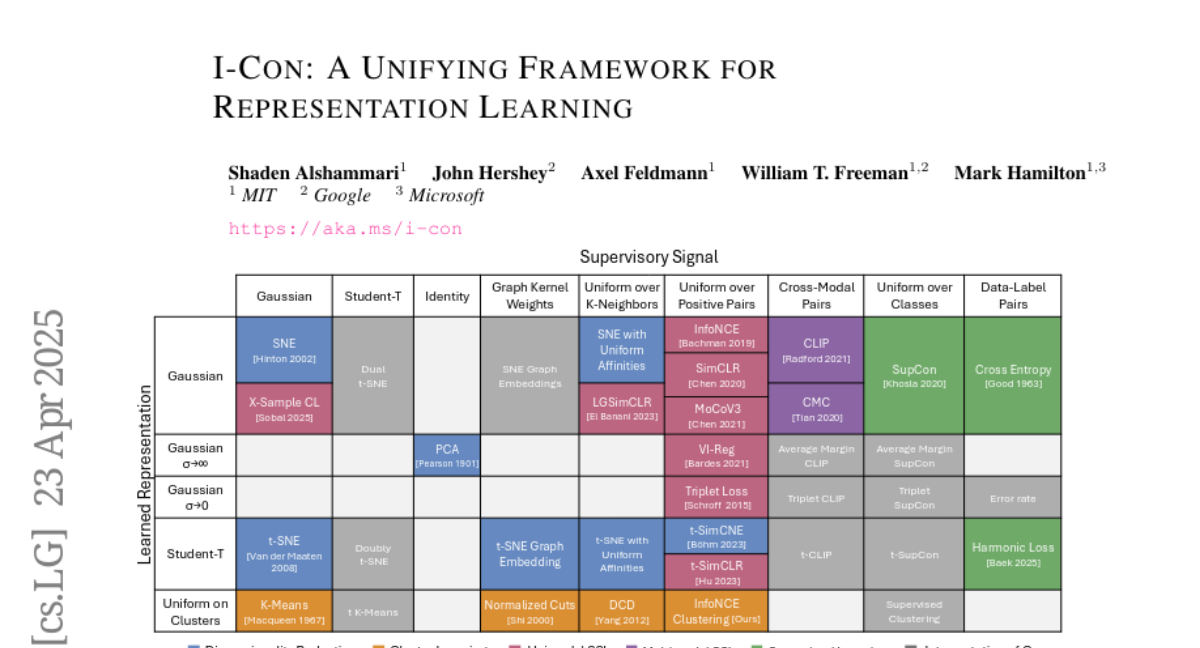

This paper introduces I-Con, a framework that unifies many different ways of teaching computers to recognize and organize information. It uses a mathematical tool called KL divergence to measure how far what the model learns is from what it should learn.
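To make the KL divergence mentioned above concrete, here is a minimal Python sketch that computes KL(p || q) = Σ p(x) log(p(x) / q(x)) for two discrete distributions. The function name `kl_divergence` and the example numbers are hypothetical, chosen only for illustration. The value is zero when the two distributions match and grows as they drift apart. Note that it is not symmetric: KL(p || q) generally differs from KL(q || p).

```python
import math

def kl_divergence(p, q):
    """KL(p || q) = sum_x p(x) * log(p(x) / q(x)) for discrete distributions.

    Terms with p(x) == 0 contribute 0 by convention. If q(x) == 0 where
    p(x) > 0, the divergence is infinite.
    """
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for px, qx in zip(p, q):
        if px > 0:
            if qx <= 0:
                return math.inf
            total += px * math.log(px / qx)
    return total

# Hypothetical example: a target ("what it should learn") vs. a model's output.
target = [0.7, 0.2, 0.1]
learned = [0.5, 0.3, 0.2]
print(kl_divergence(target, learned))  # ≈ 0.0851
print(kl_divergence(target, target))   # 0.0: identical distributions
```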

What's the problem?

Machine learning offers many different methods and loss functions for helping computers learn from data, but it is hard to know which one to use or how to combine them for the best results. This is especially true for tasks such as classifying images without labels or removing unwanted biases.

What's the solution?

The researchers built a unified framework in which KL divergence acts as a bridge between what the model is supposed to learn (the supervisory signal) and what it actually learns (the model's output). Training minimizes how different these two are. Several existing loss functions turn out to be special cases of this single objective, so I-Con can generalize and improve them, making it easier to train models for tasks like unsupervised image classification and debiasing.
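The KL-based objective described in the solution paragraph can be sketched in NumPy on a small batch. This is not the authors' code: the choice of supervisory distribution (uniform over the other points that share a label), the cosine-similarity softmax for the learned distribution, the temperature of 0.5, and the names `supervisory_distribution`, `learned_distribution`, and `icon_style_loss` are all illustrative assumptions. For each anchor point i, both distributions spread probability over the other points j, and the loss averages KL(p(·|i) || q(·|i)) over all anchors.

```python
import numpy as np

def supervisory_distribution(labels):
    """Hypothetical p(j|i): uniform over the *other* samples sharing i's label."""
    labels = np.asarray(labels)
    same = (labels[:, None] == labels[None, :]).astype(float)
    np.fill_diagonal(same, 0.0)  # a point is not its own neighbor
    row_sums = same.sum(axis=1, keepdims=True)
    if np.any(row_sums == 0):
        raise ValueError("every label must appear at least twice")
    return same / row_sums

def learned_distribution(embeddings, temperature=0.5):
    """Assumed q(j|i): softmax over cosine similarities to the other samples."""
    z = np.asarray(embeddings, dtype=float)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    logits = z @ z.T / temperature
    np.fill_diagonal(logits, -np.inf)               # exclude j == i
    logits -= logits.max(axis=1, keepdims=True)     # numerical stability
    q = np.exp(logits)
    return q / q.sum(axis=1, keepdims=True)

def icon_style_loss(p, q, eps=1e-12):
    """Mean over anchors i of KL(p(.|i) || q(.|i)); terms with p == 0 are dropped."""
    mask = p > 0
    log_ratio = np.log(np.where(mask, p, 1.0)) - np.log(q + eps)
    kl_rows = np.where(mask, p * log_ratio, 0.0).sum(axis=1)
    return kl_rows.mean()

# Toy batch: two classes, two points each (hypothetical data).
labels = [0, 0, 1, 1]
aligned = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]   # same-label points close
shuffled = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [0.1, 0.9]]  # same-label points far apart

p = supervisory_distribution(labels)
loss_aligned = icon_style_loss(p, learned_distribution(aligned))
loss_shuffled = icon_style_loss(p, learned_distribution(shuffled))
print(loss_aligned, loss_shuffled)  # the aligned embedding gives the lower loss
```

Swapping in a different supervisory distribution (for example, pairs of augmented views or nearest neighbors) changes which loss the same KL objective reproduces, which is the sense in which one framework can cover several methods.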

Why does it matter?

This matters because it lets machine learning models be trained more effectively and flexibly, leading to better performance at organizing unlabeled images and reducing bias. Both are crucial for making AI systems more accurate and fair.

Abstract

A unified framework using KL divergence between supervisory and learned representations generalizes multiple machine learning loss functions and improves unsupervised image classification and debiasing.
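A short derivation shows how a single KL objective can reproduce an existing loss. It rests on assumptions not stated in this summary: a batch of N points, a supervisory distribution p(j | i) and a learned distribution q(j | i) over the other points j ≠ i, and a similarity score s_{ij} with temperature τ. When p(· | i) puts all of its mass on one positive partner j⁺(i), the averaged KL divergence collapses to a cross-entropy on that partner.

```latex
\[
\mathcal{L}
= \frac{1}{N}\sum_{i=1}^{N} D_{\mathrm{KL}}\big(p(\cdot \mid i)\,\big\|\,q(\cdot \mid i)\big)
= \frac{1}{N}\sum_{i=1}^{N}\sum_{j \neq i} p(j \mid i)\,\log\frac{p(j \mid i)}{q(j \mid i)}
\]
% One-hot supervision: p(j | i) = 1 if j = j^{+}(i), else 0; terms with p = 0 vanish and log 1 = 0.
\[
\mathcal{L} = -\frac{1}{N}\sum_{i=1}^{N} \log q\big(j^{+}(i) \mid i\big),
\qquad
q(j \mid i) = \frac{\exp(s_{ij}/\tau)}{\sum_{k \neq i} \exp(s_{ik}/\tau)}
\]
```

With this softmax form of q, the second line is the InfoNCE-style cross-entropy used by contrastive methods such as SimCLR. Other choices of p lead to other losses.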