ICL CIPHERS: Quantifying "Learning'' in In-Context Learning via Substitution Ciphers

Zhouxiang Fang, Aayush Mishra, Muhan Gao, Anqi Liu, Daniel Khashabi

2025-04-29

Summary

This paper talks about ICL CIPHERS, a new way to test how well large language models can actually learn from examples given to them on the spot, by making them solve secret codes called substitution ciphers.

What's the problem?

The problem is that it's hard to tell if language models are truly learning new patterns from the examples they're shown, or if they're just repeating things they've seen before, especially when it comes to in-context learning where the model is supposed to pick up clues during the conversation.

What's the solution?

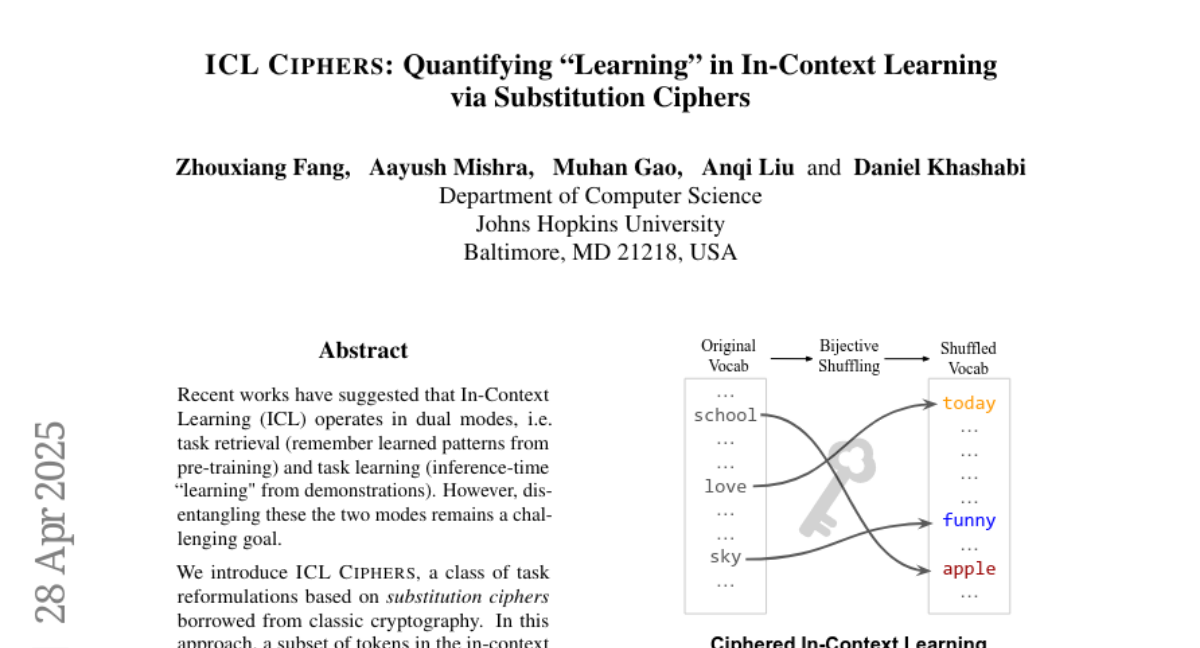

The researchers created a special test where the model has to break substitution ciphers, which are codes where each letter is swapped for another. This setup forces the model to find and use hidden patterns in real time, and the results show that models do better and show clearer signs of figuring out the code when the cipher is a simple one-to-one swap, compared to more confusing types of codes.

Why it matters?

This matters because it gives scientists a better way to measure and understand how much AI models can really learn from new information, which helps improve future models and makes them more useful for tasks that require real-time learning.

Abstract

ICL CIPHERS, a novel task reformulation using bijective substitution ciphers, helps quantify learning in In-Context Learning by challenging LLMs to decipher latent patterns, showing better performance and internal evidence of decryption compared to non-bijective mappings.