Improve Representation for Imbalanced Regression through Geometric Constraints

Zijian Dong, Yilei Wu, Chongyao Chen, Yingtian Zou, Yichi Zhang, Juan Helen Zhou

2025-03-05

Summary

This paper proposes a way to make AI models better at imbalanced regression by using geometric constraints that spread data representations evenly across the surface of a sphere.

What's the problem?

When AI models predict continuous values (like age or temperature) from imbalanced data, they often struggle with rare cases. Existing methods designed for classification don't work well for regression because they group data into separate clusters, which doesn't fit the smooth, ordered nature of regression targets.

What's the solution?

The researchers created two new loss functions: 'enveloping loss' and 'homogeneity loss'. The enveloping loss encourages the data representations to spread evenly over the surface of a sphere, and the homogeneity loss keeps them smoothly connected, with representations spaced at consistent intervals. The two losses are combined in a framework called Surrogate-driven Representation Learning (SRL) to improve how AI models represent imbalanced data for regression tasks.
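To make the "evenly spaced" idea concrete, here is a minimal sketch in plain Python. It is not the paper's homogeneity loss, whose exact formula is not given here; `spacing_variance` is a hypothetical stand-in that measures how unevenly consecutive representations are spaced once they are ordered by label and projected onto the unit sphere.

```python
import math

def normalize(v):
    """Project a vector onto the unit hypersphere."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def spacing_variance(trace):
    """Variance of the distances between consecutive points of an ordered trace.

    Hypothetical stand-in for a homogeneity-style penalty (an assumption,
    not the paper's formula): it is zero when consecutive representations
    are evenly spaced and grows as the spacing becomes irregular.
    """
    pts = [normalize(p) for p in trace]
    gaps = [math.dist(a, b) for a, b in zip(pts, pts[1:])]
    mean = sum(gaps) / len(gaps)
    return sum((g - mean) ** 2 for g in gaps) / len(gaps)

def on_circle(angles):
    """Toy 2-D representations placed on the unit circle at the given angles."""
    return [[math.cos(a), math.sin(a)] for a in angles]

# Representations ordered by label (e.g. increasing age).
even = on_circle([0.0, 0.5, 1.0, 1.5])    # consistent intervals
uneven = on_circle([0.0, 0.1, 0.2, 1.5])  # rare labels leave a large gap

print(spacing_variance(even), spacing_variance(uneven))
```

The evenly spaced trace scores essentially zero, while the trace with a large gap scores higher, which is the behavior a smoothness penalty of this kind would discourage.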

Why it matters?

This work helps AI models make more accurate predictions across the whole range of target values, even where training examples are rare. That could lead to fairer and more reliable AI systems in areas like healthcare, where predicting drug dosages or patient outcomes accurately matters for every group, regardless of how common or rare their cases are.

Abstract

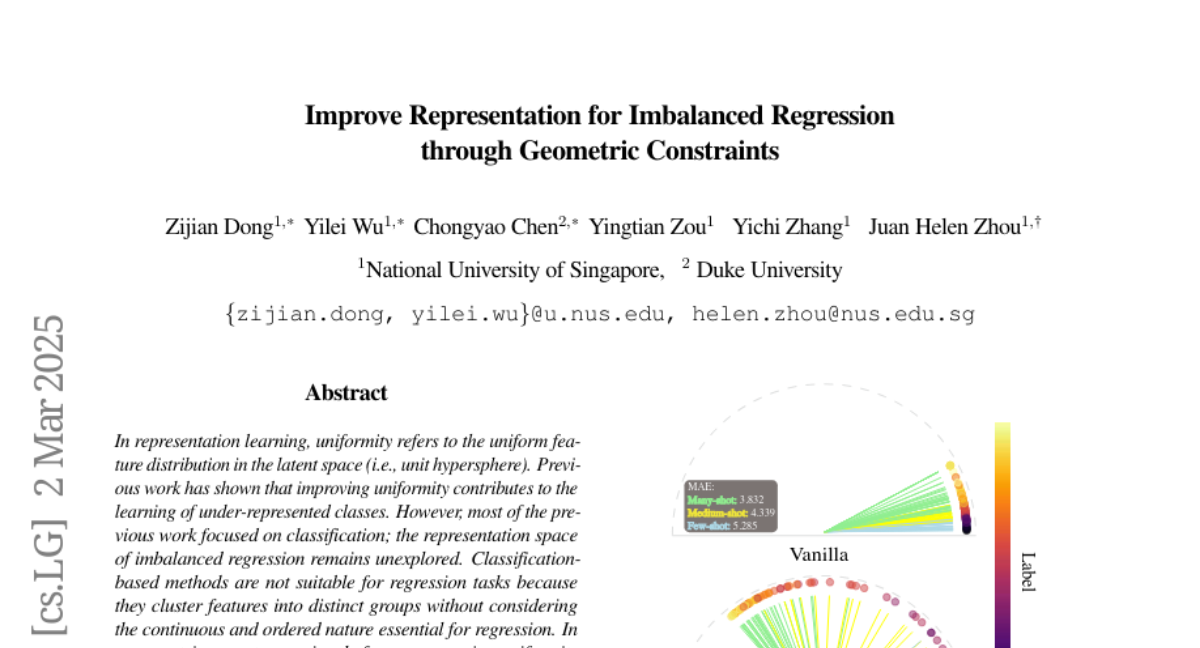

In representation learning, uniformity refers to the uniform feature distribution in the latent space (i.e., unit hypersphere). Previous work has shown that improving uniformity contributes to the learning of under-represented classes. However, most of the previous work focused on classification; the representation space of imbalanced regression remains unexplored. Classification-based methods are not suitable for regression tasks because they cluster features into distinct groups without considering the continuous and ordered nature essential for regression. From a geometric perspective, we focus on ensuring uniformity in the latent space for imbalanced regression through two key losses: enveloping and homogeneity. The enveloping loss encourages the induced trace to uniformly occupy the surface of a hypersphere, while the homogeneity loss ensures smoothness, with representations evenly spaced at consistent intervals. Our method integrates these geometric principles into the data representations via a Surrogate-driven Representation Learning (SRL) framework. Experiments with real-world regression and operator learning tasks highlight the importance of uniformity in imbalanced regression and validate the efficacy of our geometry-based loss functions.
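As an illustration of what uniformity on the unit hypersphere means, the following sketch scores a set of points with a pairwise Gaussian-potential measure that is widely used in contrastive representation learning. This is an assumption chosen for illustration, not the enveloping loss defined in the paper; the function name `uniformity` and the temperature `t=2.0` are likewise illustrative choices.

```python
import math
from itertools import combinations

def uniformity(points, t=2.0):
    """Log of the mean pairwise Gaussian potential exp(-t * ||a - b||^2).

    Lower (more negative) values mean the points are spread more
    uniformly; tightly clustered points score close to 0.
    """
    pairs = list(combinations(points, 2))
    total = sum(math.exp(-t * math.dist(a, b) ** 2) for a, b in pairs)
    return math.log(total / len(pairs))

def circle(angles):
    """Toy features on the unit circle (a 1-D 'hypersphere' in 2-D space)."""
    return [[math.cos(a), math.sin(a)] for a in angles]

# Eight features spread around the whole circle vs. eight packed into a small arc.
spread = circle([2 * math.pi * k / 8 for k in range(8)])
clustered = circle([0.05 * k for k in range(8)])

print(uniformity(spread), uniformity(clustered))
```

The spread-out configuration receives the lower score. A training objective that pushes this kind of quantity down would encourage features to occupy the sphere more evenly, which is the property the abstract argues is important for under-represented targets.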