INF-LLaVA: Dual-perspective Perception for High-Resolution Multimodal Large Language Model

Yiwei Ma, Zhibin Wang, Xiaoshuai Sun, Weihuang Lin, Qiang Zhou, Jiayi Ji, Rongrong Ji

2024-07-24

Summary

This paper presents INF-LLaVA, a new model designed to improve how multimodal large language models (MLLMs) process high-resolution images. It introduces innovative methods to capture both detailed local information and broader global context in image analysis.

What's the problem?

Many existing models struggle with processing high-resolution images because they often break them down into smaller pieces (sub-images) for easier handling. However, this method can lead to a loss of important overall context since the smaller pieces do not interact with each other. As a result, the models may miss out on understanding how different parts of an image relate to one another, which is crucial for tasks that require detailed visual perception.

What's the solution?

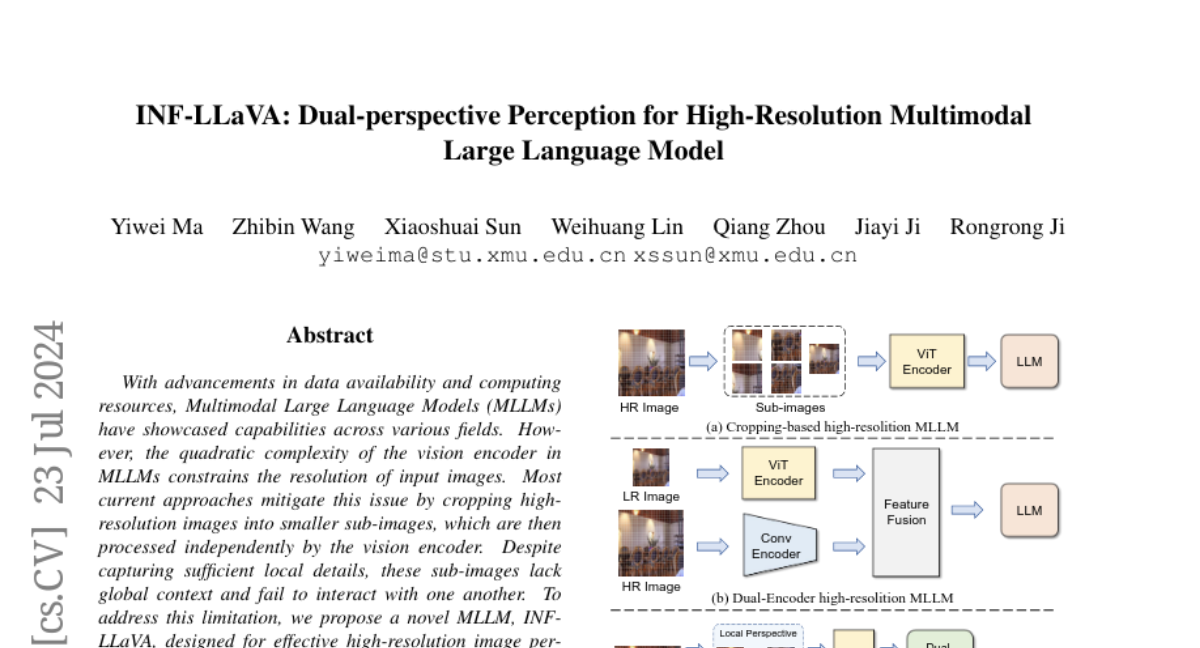

To solve these issues, INF-LLaVA uses two main components: the Dual-perspective Cropping Module (DCM) and the Dual-perspective Enhancement Module (DEM). The DCM ensures that each sub-image captures both local details and global context by cropping images in a way that maintains important information from both perspectives. The DEM then enhances the features from these perspectives, allowing the model to better integrate local and global information when analyzing images. This approach enables INF-LLaVA to effectively process high-resolution images while maintaining detail and context.

Why it matters?

This research is significant because it enhances the capabilities of AI systems in understanding complex images. By improving how models handle high-resolution visuals, INF-LLaVA can be applied in various fields such as medical imaging, surveillance, and any area where detailed image analysis is important. This advancement can lead to better performance in tasks like image captioning and visual question answering, ultimately making AI more effective in real-world applications.

Abstract

With advancements in data availability and computing resources, Multimodal Large Language Models (MLLMs) have showcased capabilities across various fields. However, the quadratic complexity of the vision encoder in MLLMs constrains the resolution of input images. Most current approaches mitigate this issue by cropping high-resolution images into smaller sub-images, which are then processed independently by the vision encoder. Despite capturing sufficient local details, these sub-images lack global context and fail to interact with one another. To address this limitation, we propose a novel MLLM, INF-LLaVA, designed for effective high-resolution image perception. INF-LLaVA incorporates two innovative components. First, we introduce a Dual-perspective Cropping Module (DCM), which ensures that each sub-image contains continuous details from a local perspective and comprehensive information from a global perspective. Second, we introduce Dual-perspective Enhancement Module (DEM) to enable the mutual enhancement of global and local features, allowing INF-LLaVA to effectively process high-resolution images by simultaneously capturing detailed local information and comprehensive global context. Extensive ablation studies validate the effectiveness of these components, and experiments on a diverse set of benchmarks demonstrate that INF-LLaVA outperforms existing MLLMs. Code and pretrained model are available at https://github.com/WeihuangLin/INF-LLaVA.