Inference-Time Scaling for Flow Models via Stochastic Generation and Rollover Budget Forcing

Jaihoon Kim, Taehoon Yoon, Jisung Hwang, Minhyuk Sung

2025-03-26

Summary

This paper shows how to improve the output of AI image generators by spending extra computation at generation time, without retraining the model.

What's the problem?

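Flow models, a popular type of AI image generator, create images through a deterministic process. Techniques that improve diffusion models at generation time rely on randomness at intermediate steps, so they cannot be applied directly to flow models, which makes it hard to improve image quality without retraining the model.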

What's the solution?

The researchers introduce randomness into the generation process of flow models, so that several candidate samples can be explored at intermediate steps and the most promising ones kept. They also convert the model's interpolant to widen the search space and increase sample diversity, and they propose Rollover Budget Forcing (RBF), which adaptively allocates the computation budget across generation timesteps so that as much of it as possible is put to use.

Why does it matter?

This work matters because it can lead to AI image generators that produce higher-quality images and can be easily adapted to different user preferences without needing to be completely retrained.

Abstract

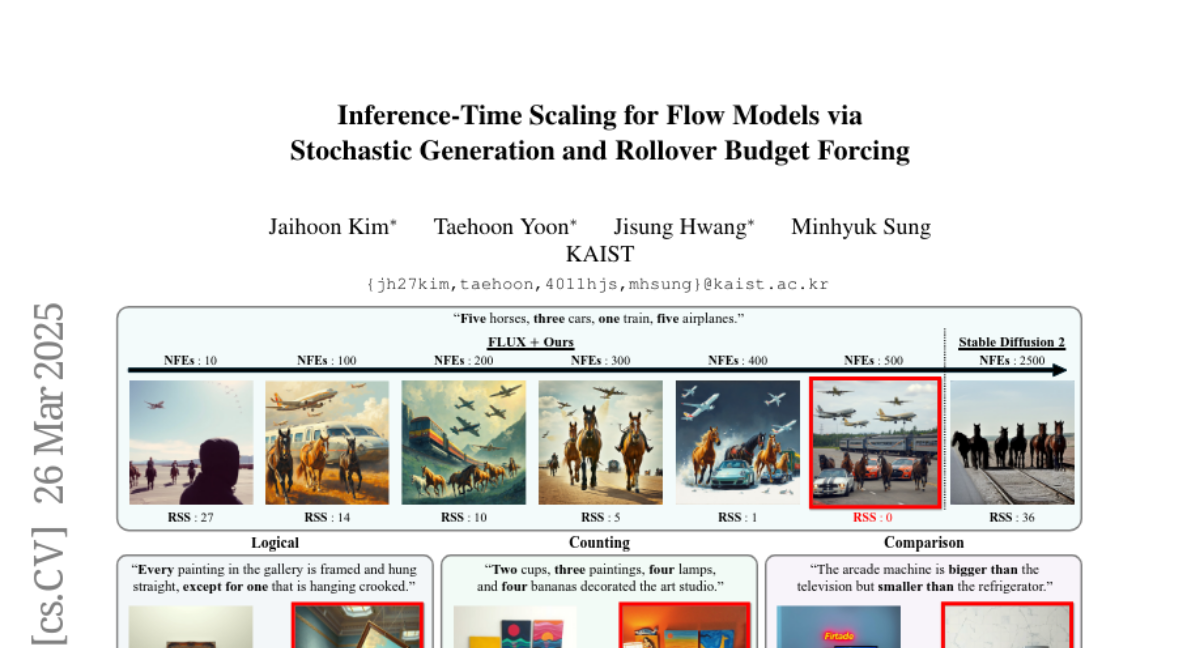

We propose an inference-time scaling approach for pretrained flow models. Recently, inference-time scaling has gained significant attention in LLMs and diffusion models, improving sample quality or better aligning outputs with user preferences by leveraging additional computation. For diffusion models, particle sampling has allowed more efficient scaling due to the stochasticity at intermediate denoising steps. In contrast, while flow models have gained popularity as an alternative to diffusion models (offering faster generation and high-quality outputs in state-of-the-art image and video generative models), efficient inference-time scaling methods used for diffusion models cannot be directly applied due to their deterministic generative process. To enable efficient inference-time scaling for flow models, we propose three key ideas: 1) SDE-based generation, enabling particle sampling in flow models, 2) Interpolant conversion, broadening the search space and enhancing sample diversity, and 3) Rollover Budget Forcing (RBF), an adaptive allocation of computational resources across timesteps to maximize budget utilization. Our experiments show that SDE-based generation, particularly variance-preserving (VP) interpolant-based generation, improves the performance of particle sampling methods for inference-time scaling in flow models. Additionally, we demonstrate that RBF with VP-SDE achieves the best performance, outperforming all previous inference-time scaling approaches.
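
To make ideas 1 and 3 more concrete, the following is a minimal sketch on a toy 1D problem, not the authors' implementation. It assumes a linear interpolant between standard Gaussian noise and a Gaussian "data" distribution, for which the velocity and score are known in closed form, and it uses the standard identity that adding `0.5 * g**2 * score` to the drift together with noise of scale `g` preserves the marginals of the deterministic flow. The abstract does not spell out how RBF works, so the rollover rule here (stop drawing candidates at a timestep as soon as one improves the predicted reward, and carry the unused draws to the next timestep) is an assumed reading. The names `reward`, `quota`, `g`, and the constants `MU` and `S` are hypothetical. Interpolant conversion (idea 2) is not covered.

```python
import math
import random

MU, S = 2.0, 0.5  # toy 1D "data" distribution N(MU, S^2); illustrative only


def velocity(x, t):
    """Exact velocity for the linear interpolant x_t = (1 - t) * noise + t * data."""
    var = (1 - t) ** 2 + (t * S) ** 2
    return MU + (t * S**2 - (1 - t)) / var * (x - t * MU)


def score(x, t):
    """Exact score (gradient of the log-density) of the Gaussian marginal at time t."""
    var = (1 - t) ** 2 + (t * S) ** 2
    return -(x - t * MU) / var


def sde_step(x, t, dt, g, rng):
    """One Euler-Maruyama step of an SDE sharing the flow's marginals."""
    drift = velocity(x, t) + 0.5 * g**2 * score(x, t)
    return x + drift * dt + g * math.sqrt(dt) * rng.gauss(0.0, 1.0)


def reward(x):
    """Hypothetical reward: prefer final samples close to 2.5."""
    return -abs(x - 2.5)


def expected_reward(x, t):
    """Reward of the one-step clean-sample estimate x + (1 - t) * velocity."""
    return reward(x + (1 - t) * velocity(x, t))


def sample_with_rollover(steps=20, quota=4, g=0.5, seed=0):
    """Stochastic sampling with an assumed rollover rule for the per-step budget."""
    rng = random.Random(seed)
    dt = 1.0 / steps
    x = rng.gauss(0.0, 1.0)  # start from noise
    carry, used = 0, 0
    for i in range(steps):
        t = i * dt
        budget = quota + carry  # this step's quota plus unused draws so far
        current = expected_reward(x, t)
        best, best_r, spent = None, -math.inf, 0
        while spent < budget:
            cand = sde_step(x, t, dt, g, rng)
            spent += 1
            r = expected_reward(cand, t + dt)
            if r > best_r:
                best, best_r = cand, r
            if r > current:  # improvement found: stop early
                break
        carry = budget - spent  # roll the unused draws over to the next step
        used += spent
        x = best  # keep the best candidate seen, even without improvement
    return x, used
```

Calling `sample_with_rollover()` returns the final sample and the number of candidate draws consumed, which never exceeds `steps * quota`. With a deterministic ODE step every candidate at a timestep would be identical, which is why the stochastic step is what makes this kind of per-step search possible.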