InfiGUI-R1: Advancing Multimodal GUI Agents from Reactive Actors to Deliberative Reasoners

Yuhang Liu, Pengxiang Li, Congkai Xie, Xavier Hu, Xiaotian Han, Shengyu Zhang, Hongxia Yang, Fei Wu

2025-04-22

Summary

This paper introduces InfiGUI-R1, a system that improves how AI agents use computer interfaces such as apps and websites by training them to think ahead and reason through tasks instead of just reacting to what is on the screen.

What's the problem?

Most AI agents that work with graphical user interfaces (GUIs) only react to what they see, without planning or understanding the bigger picture. As a result, they can get stuck or make mistakes on complicated tasks that require thinking several steps ahead or understanding how different parts of the interface work together.

What's the solution?

The researchers developed InfiGUI-R1, which takes a reasoning-centric approach to training AI agents. It uses Spatial Reasoning Distillation and Reinforcement Learning so that agents learn to plan, reason, and make better decisions when interacting with GUIs, turning them from reactive actors into deliberative reasoners.

Why does it matter?

This matters because agents that reason before acting can be more capable and reliable when helping people with software, which could lead to better virtual assistants, smarter automation, and more helpful tools for people who use computers.

Abstract

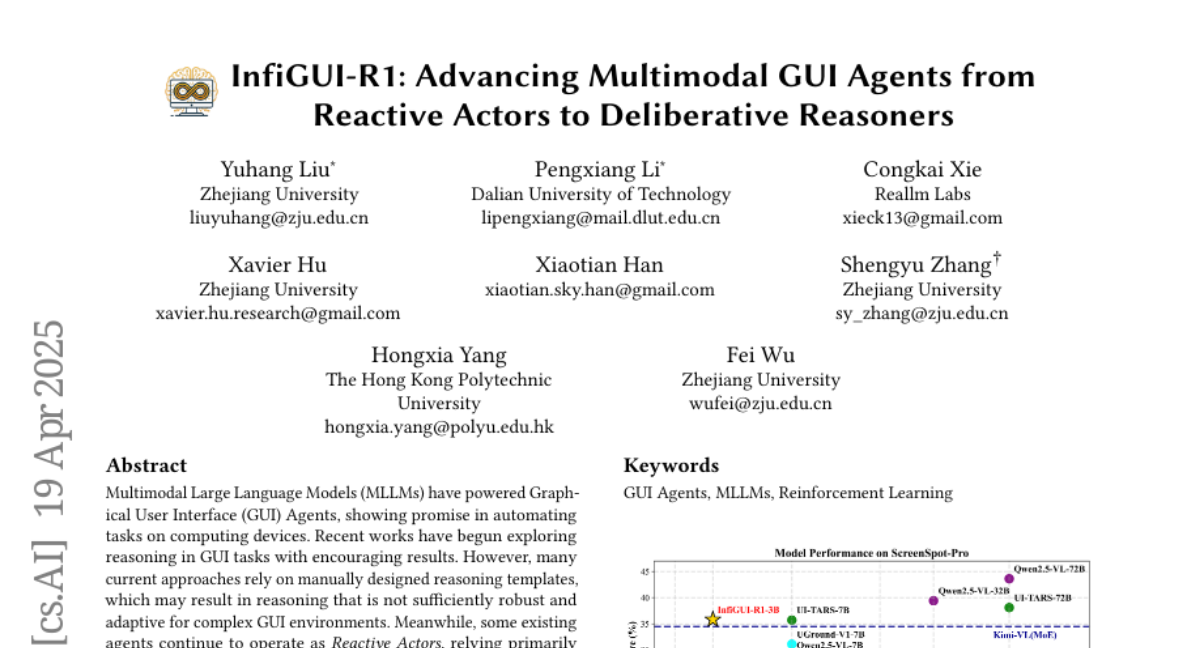

InfiGUI-R1 leverages a reasoning-centric framework to enhance MLLMs for GUI tasks by transitioning them from Reactive Actors to Deliberative Reasoners using Spatial Reasoning Distillation and Reinforcement Learning techniques.