Infini-gram mini: Exact n-gram Search at the Internet Scale with FM-Index

Hao Xu, Jiacheng Liu, Yejin Choi, Noah A. Smith, Hannaneh Hajishirzi

2025-06-17

Summary

This paper talks about Infini-gram mini, a powerful new system that makes it possible to search through massive amounts of text data from the Internet—petabytes worth—quickly and efficiently. It uses a special data structure called the FM-index, which both compresses the data and lets the system find exact matches of words or phrases without needing too much storage or memory. Infini-gram mini is much faster and requires less memory than older methods, allowing it to index huge text collections in a reasonable time using regular computer hardware.

What's the problem?

The problem is that language models get trained on enormous amounts of text taken from the Internet, and to understand or analyze this text well, people need tools that can search it exactly and quickly. However, traditional exact-match search systems use too much storage space and memory, especially when scaling up to Internet-sized datasets, making them impractical for very large collections of text.

What's the solution?

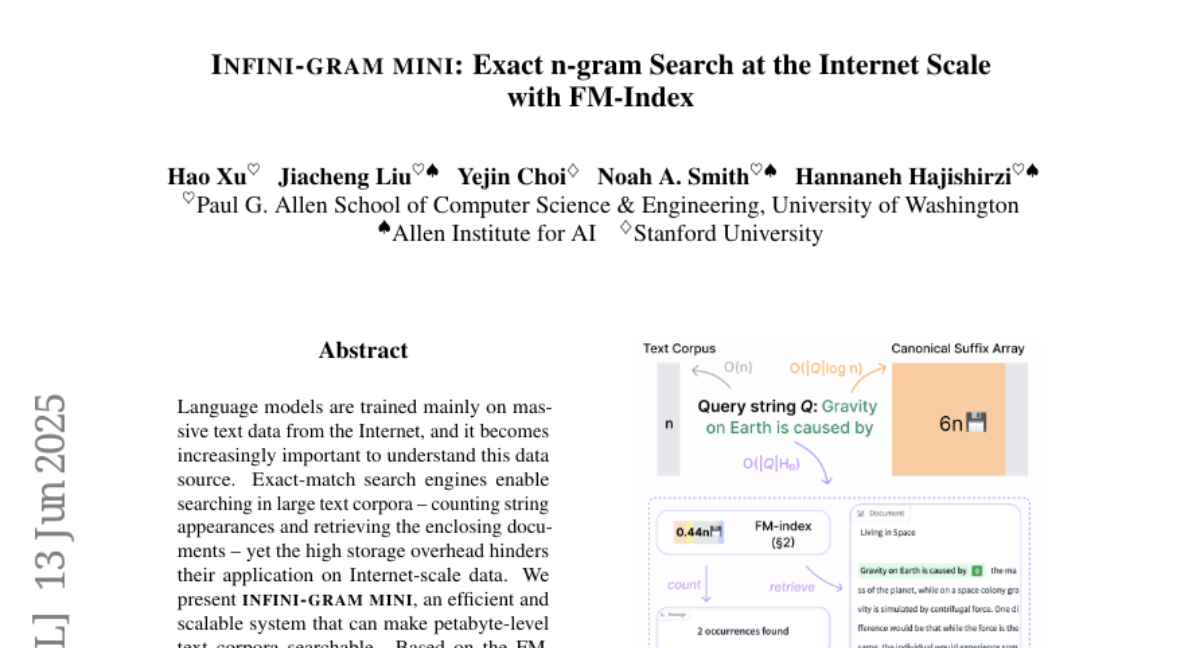

The solution was to build Infini-gram mini, which uses the FM-index data structure that cleverly compresses text while indexing it at the same time. This approach reduces the index size to less than half the original data and speeds up the process of creating the index by 18 times compared to older FM-index implementations. It also uses much less memory both when building the index and when searching it, making it feasible to work with petabytes of text even on a single 128-core CPU node or on many nodes in parallel. The system also supports a web interface and an API for general search queries, showing its practical usability.

Why it matters?

This matters because being able to search such huge text collections exactly and efficiently helps researchers and developers better understand the data used to train language models, catch problems like test dataset contamination, and perform large-scale data analyses. By making Internet-scale data searchable, Infini-gram mini supports more accurate evaluation and development of AI language technologies, ensuring they are trained on clean data and improving their overall reliability and trustworthiness.

Abstract

Language models are trained mainly on massive text data from the Internet, and it becomes increasingly important to understand this data source. Exact-match search engines enable searching in large text corpora -- counting string appearances and retrieving the enclosing documents -- yet the high storage overhead hinders their application on Internet-scale data. We present Infini-gram mini, an efficient and scalable system that can make petabyte-level text corpora searchable. Based on the FM-index data structure (Ferragina and Manzini, 2000), which simultaneously indexes and compresses text, our system creates indexes with size only 44% of the corpus. Infini-gram mini greatly improves upon the best existing implementation of FM-index in terms of indexing speed (18times) and memory use during both indexing (3.2times reduction) and querying (down to a negligible amount). We index 46TB of Internet text in 50 days with a single 128-core CPU node (or 19 hours if using 75 such nodes). We show one important use case of Infini-gram mini in a large-scale analysis of benchmark contamination. We find several core LM evaluation benchmarks to be heavily contaminated in Internet crawls (up to 40% in SQuAD), which could lead to overestimating the capabilities of language models if trained on such data. We host a benchmark contamination bulletin to share the contamination rate of many core and community-contributed benchmarks. We also release a web interface and an API endpoint to serve general search queries on Infini-gram mini indexes.