Instruct-CLIP: Improving Instruction-Guided Image Editing with Automated Data Refinement Using Contrastive Learning

Sherry X. Chen, Misha Sra, Pradeep Sen

2025-03-25

Summary

This paper is about improving how AI edits images based on text instructions.

What's the problem?

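It's hard to train AI to edit images well using text instructions because large, accurate training datasets are difficult to create. Existing datasets are generated with text-to-image models, and their before/after image pairs often don't match the edit instructions they are labeled with.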

What's the solution?

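The researchers developed a method called Instruct-CLIP that learns what changed between an original image and its edited version, and uses this to automatically refine the edit instructions in existing training data so they better match the actual image changes. They also use it as an extra training loss, which helps the AI learn to edit images more accurately.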

Why does it matter?

This work matters because it can lead to AI that is better at understanding and following instructions to edit images, which could be useful for things like photo editing software or creating personalized art.

Abstract

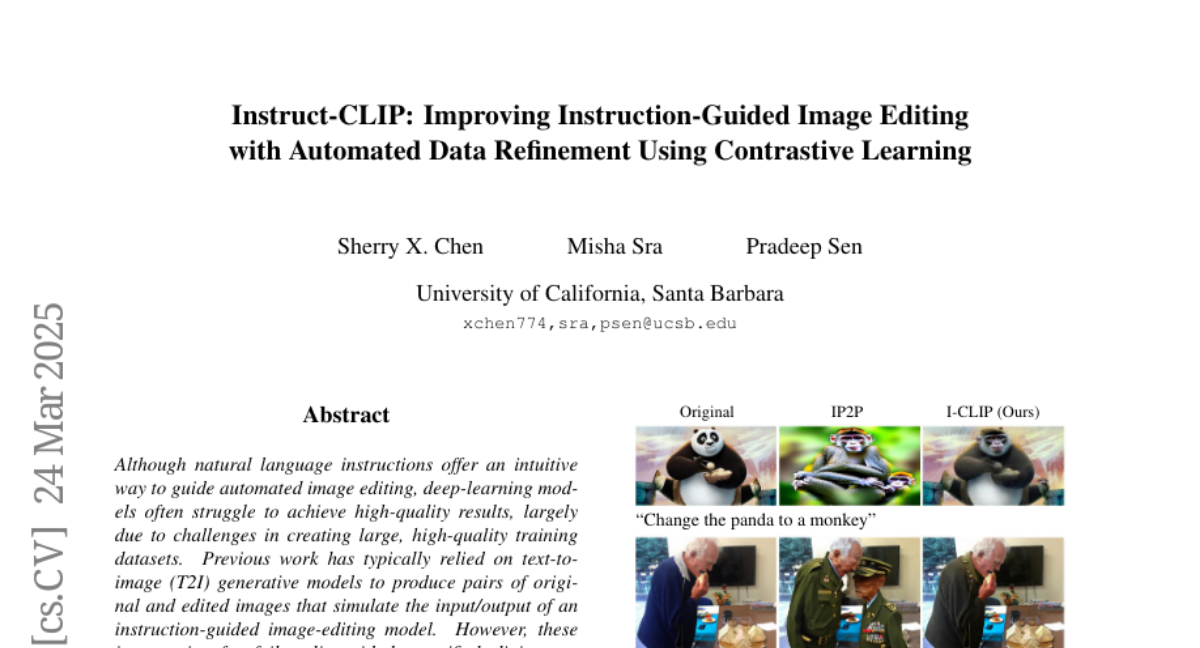

Although natural language instructions offer an intuitive way to guide automated image editing, deep-learning models often struggle to achieve high-quality results, largely due to challenges in creating large, high-quality training datasets. Previous work has typically relied on text-to-image (T2I) generative models to produce pairs of original and edited images that simulate the input/output of an instruction-guided image-editing model. However, these image pairs often fail to align with the specified edit instructions due to the limitations of T2I models, which negatively impacts models trained on such datasets. To address this, we present Instruct-CLIP, a self-supervised method that learns the semantic changes between original and edited images to refine and better align the instructions in existing datasets. Furthermore, we adapt Instruct-CLIP to handle noisy latent images and diffusion timesteps so that it can be used to train latent diffusion models (LDMs) [19] and efficiently enforce alignment between the edit instruction and the image changes in latent space at any step of the diffusion pipeline. We use Instruct-CLIP to correct the InstructPix2Pix dataset and obtain over 120K refined samples, which we then use to fine-tune their model, guided by our novel Instruct-CLIP-based loss function. The resulting model can produce edits that are more aligned with the given instructions. Our code and dataset are available at https://github.com/SherryXTChen/Instruct-CLIP.git.
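To illustrate the contrastive-learning idea behind the title, here is a minimal sketch of a generic CLIP-style symmetric contrastive (InfoNCE) loss between "image-change" embeddings and instruction embeddings. It is not the authors' implementation. It assumes each original/edited image pair has already been encoded into one vector and each instruction into a vector of the same dimension; how Instruct-CLIP actually computes these embeddings, and its adaptation to noisy latents and diffusion timesteps, are not shown. The function names and the temperature of 0.07 are illustrative assumptions.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def normalize(v: Vector) -> List[float]:
    """Scale a vector to unit L2 norm so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cross_entropy(logits: List[float], target: int) -> float:
    """Numerically stable softmax cross-entropy for a single row of logits."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def symmetric_contrastive_loss(
    change_emb: List[Vector],
    instr_emb: List[Vector],
    temperature: float = 0.07,  # illustrative value (assumption)
) -> float:
    """Generic CLIP-style symmetric InfoNCE loss over a batch of N matched pairs.

    change_emb[i]: hypothetical embedding of the change from original to edited image i.
    instr_emb[i]:  hypothetical embedding of the edit instruction for pair i.
    Pairs with the same index are positives; every other pairing in the batch is a negative.
    """
    a = [normalize(v) for v in change_emb]
    b = [normalize(v) for v in instr_emb]
    n = len(a)
    # logits[i][j]: cosine similarity of image change i and instruction j, divided by temperature
    logits = [
        [sum(x * y for x, y in zip(a[i], b[j])) / temperature for j in range(n)]
        for i in range(n)
    ]
    # Direction 1: for each image change, pick out its own instruction (rows)
    loss_rows = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    # Direction 2: for each instruction, pick out its own image change (columns)
    columns = [[logits[i][j] for i in range(n)] for j in range(n)]
    loss_cols = sum(cross_entropy(columns[j], j) for j in range(n)) / n
    return (loss_rows + loss_cols) / 2


if __name__ == "__main__":
    changes = [[1.0, 0.0], [0.0, 1.0]]
    aligned = symmetric_contrastive_loss(changes, [[1.0, 0.0], [0.0, 1.0]])
    swapped = symmetric_contrastive_loss(changes, [[0.0, 1.0], [1.0, 0.0]])
    print(aligned < swapped)  # True: mismatched instructions are penalized
```

Minimizing a loss of this shape pulls each image change toward its own instruction and away from the other instructions in the batch, which is the general property that makes a learned change/instruction similarity usable for judging whether an instruction fits an image pair.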