InteractVLM: 3D Interaction Reasoning from 2D Foundational Models

Sai Kumar Dwivedi, Dimitrije Antić, Shashank Tripathi, Omid Taheri, Cordelia Schmid, Michael J. Black, Dimitrios Tzionas

2025-04-14

Summary

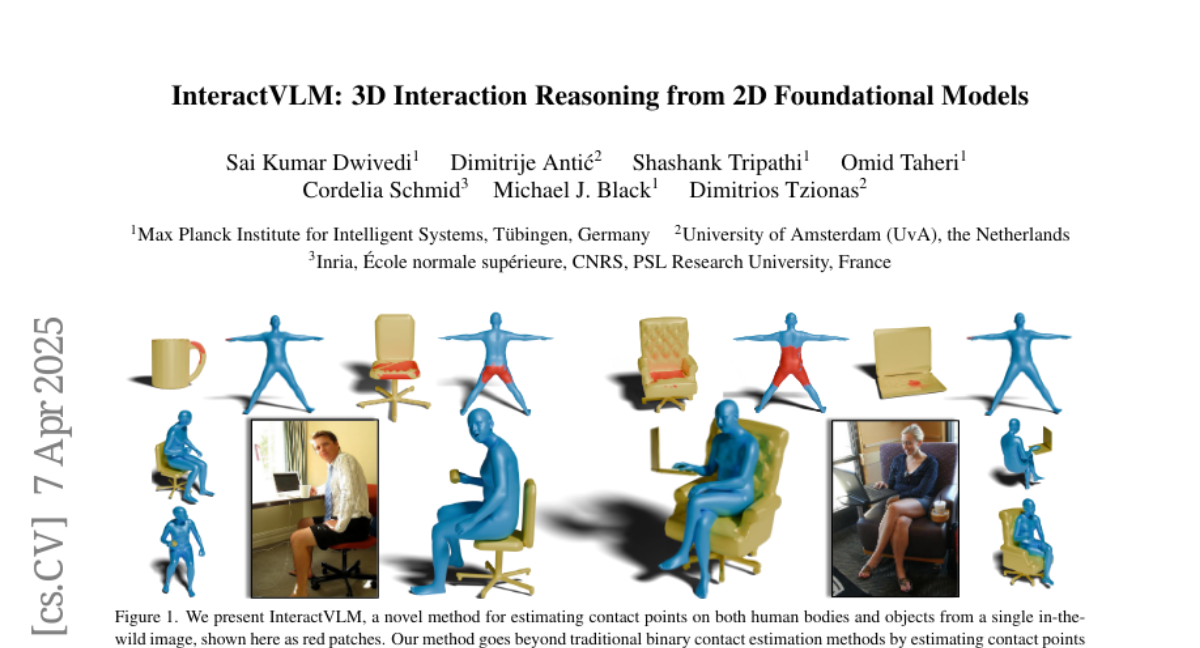

This paper talks about InteractVLM, a new AI method that can figure out exactly where a person and an object touch each other in 3D, just from a single photo. The system uses advanced vision-language models and a special module to turn 2D image information into 3D contact points, making it possible to recreate how people interact with objects in three dimensions.

What's the problem?

The problem is that understanding and predicting 3D contact between humans and objects from regular photos is really difficult. Most existing methods need expensive equipment or lots of manual work to label where contact happens, which makes it hard to use these techniques for lots of different images or situations.

What's the solution?

The researchers solved this by fine-tuning vision-language models with a small amount of 3D contact data and inventing a Render-Localize-Lift module. This module helps the AI take what it learns from 2D images and lift it into 3D space, so it can accurately predict where contact happens between a person and an object. The system also introduces a new way to predict not just if contact happens, but what kind of contact it is, based on the object involved.

Why it matters?

This work matters because it makes it much easier to study and recreate real-world interactions in 3D, using just photos instead of expensive motion-capture setups. This could help with animation, robotics, virtual reality, and any technology that needs to understand how people interact with things in the real world.

Abstract

InteractVLM estimates 3D contact points using fine-tuned Vision-Language Models and a Render-Localize-Lift module, enhancing 3D human-object joint reconstruction with semantic contact predictions.