KMM: Key Frame Mask Mamba for Extended Motion Generation

Zeyu Zhang, Hang Gao, Akide Liu, Qi Chen, Feng Chen, Yiran Wang, Danning Li, Hao Tang

2024-11-12

Summary

This paper presents KMM, a new method for generating extended human motion sequences using a model called Mamba, which improves how the model focuses on important actions and integrates different types of data.

What's the problem?

Generating realistic human motion in videos and games is challenging because existing models struggle with two main issues: they have limited memory capacity, which leads to forgetting important details over long sequences, and they find it hard to combine different types of information (like text and motion), often getting confused about directions or missing parts of instructions.

What's the solution?

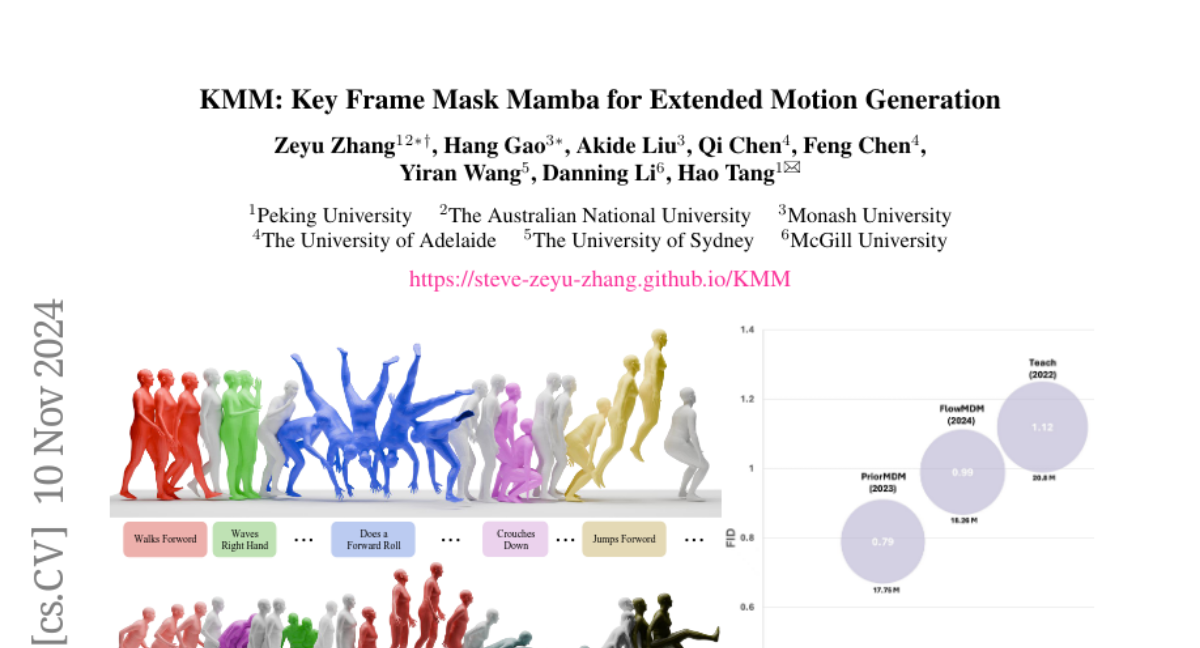

To solve these problems, the authors introduce the Key Frame Masking Modeling (KMM) architecture. This new method helps the Mamba model focus on key actions in motion sequences, preventing memory decay by strategically masking out less important frames. They also developed a contrastive learning approach to improve how the model combines different types of data and aligns them with text instructions. Their experiments showed that KMM significantly outperforms previous models, achieving better performance metrics while reducing the number of parameters needed.

Why it matters?

This research is important because it enhances the ability of AI models to generate realistic human movements, which can be applied in various fields like video game development, animation, and robotics. By improving how these models learn and remember important details, KMM can lead to more lifelike animations and better interactive experiences in digital media.

Abstract

Human motion generation is a cut-edge area of research in generative computer vision, with promising applications in video creation, game development, and robotic manipulation. The recent Mamba architecture shows promising results in efficiently modeling long and complex sequences, yet two significant challenges remain: Firstly, directly applying Mamba to extended motion generation is ineffective, as the limited capacity of the implicit memory leads to memory decay. Secondly, Mamba struggles with multimodal fusion compared to Transformers, and lack alignment with textual queries, often confusing directions (left or right) or omitting parts of longer text queries. To address these challenges, our paper presents three key contributions: Firstly, we introduce KMM, a novel architecture featuring Key frame Masking Modeling, designed to enhance Mamba's focus on key actions in motion segments. This approach addresses the memory decay problem and represents a pioneering method in customizing strategic frame-level masking in SSMs. Additionally, we designed a contrastive learning paradigm for addressing the multimodal fusion problem in Mamba and improving the motion-text alignment. Finally, we conducted extensive experiments on the go-to dataset, BABEL, achieving state-of-the-art performance with a reduction of more than 57% in FID and 70% parameters compared to previous state-of-the-art methods. See project website: https://steve-zeyu-zhang.github.io/KMM