KnowRL: Exploring Knowledgeable Reinforcement Learning for Factuality

Baochang Ren, Shuofei Qiao, Wenhao Yu, Huajun Chen, Ningyu Zhang

2025-06-25

Summary

This paper talks about KnowRL, a new method that helps large language models think more carefully and stick to facts by including a special reward during training based on checking facts against real knowledge.

What's the problem?

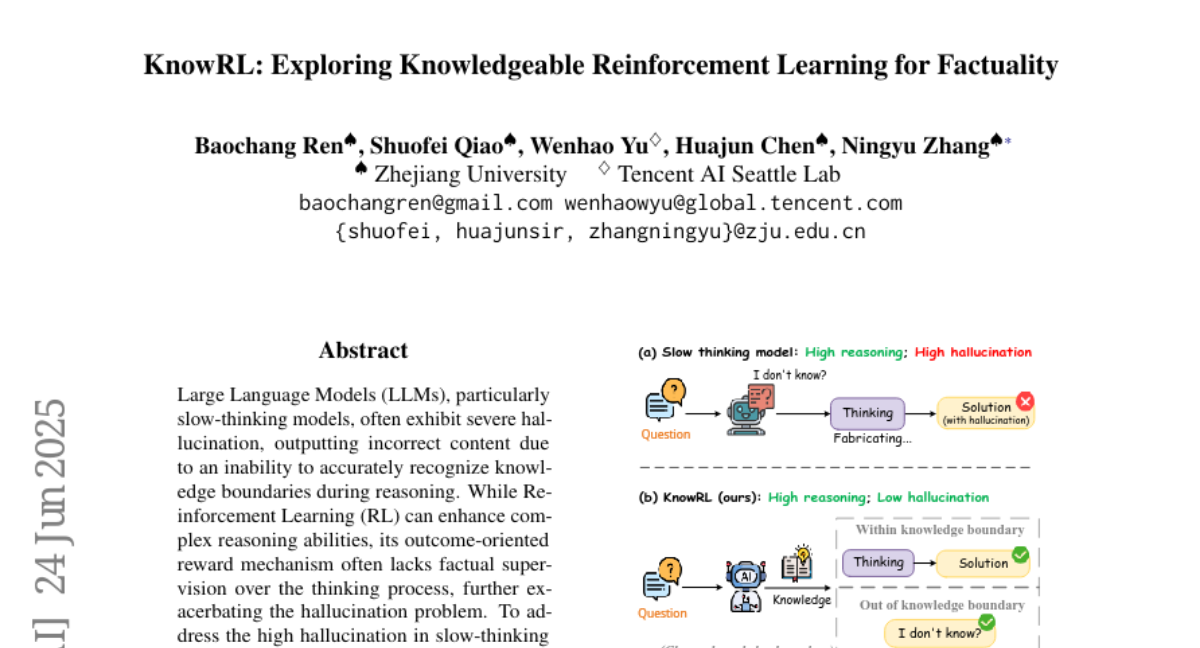

The problem is that large language models often make things up or hallucinate because they can’t always tell when their reasoning goes beyond what they really know, especially during slow, step-by-step thinking processes.

What's the solution?

The researchers developed KnowRL which adds a factuality reward during reinforcement learning training. This reward is based on verifying each reasoning step with information from trusted sources like Wikipedia, so the model learns to recognize when it is going out of known facts and to avoid guessing wrongly.

Why it matters?

This matters because it makes AI models more reliable and trustworthy by reducing the chance they invent wrong information, which is important for applications like education, research, and any setting where factual accuracy is crucial.

Abstract

KnowRL, a knowledge-enhanced reinforcement learning approach, reduces hallucinations in slow-thinking large language models by incorporating factuality rewards based on knowledge verification during training.