KUDA: Keypoints to Unify Dynamics Learning and Visual Prompting for Open-Vocabulary Robotic Manipulation

Zixian Liu, Mingtong Zhang, Yunzhu Li

2025-03-19

Summary

This paper presents KUDA, an open-vocabulary robotic manipulation system that uses keypoints to combine visual prompting of vision-language models (VLMs) with learned models of how objects move.

What's the problem?

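Many existing open-vocabulary manipulation systems built on large language models and VLMs overlook object dynamics, that is, how objects react when the robot interacts with them. This limits how well they handle more complex, dynamic tasks.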

What's the solution?

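KUDA uses keypoints as a shared representation for both visual prompting and dynamics. Given a language instruction and an RGB image, it marks keypoints on the image and asks a VLM to describe the goal in terms of those keypoints. This target specification is converted into a cost function, which is then optimized with a learned dynamics model to produce the robot's trajectory.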

Why does it matter?

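Because the goal is expressed through keypoints and planning relies on a dynamics model, the same framework is evaluated on free-form language instructions across diverse object categories, on multi-object interactions, and on deformable or granular objects.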

Abstract

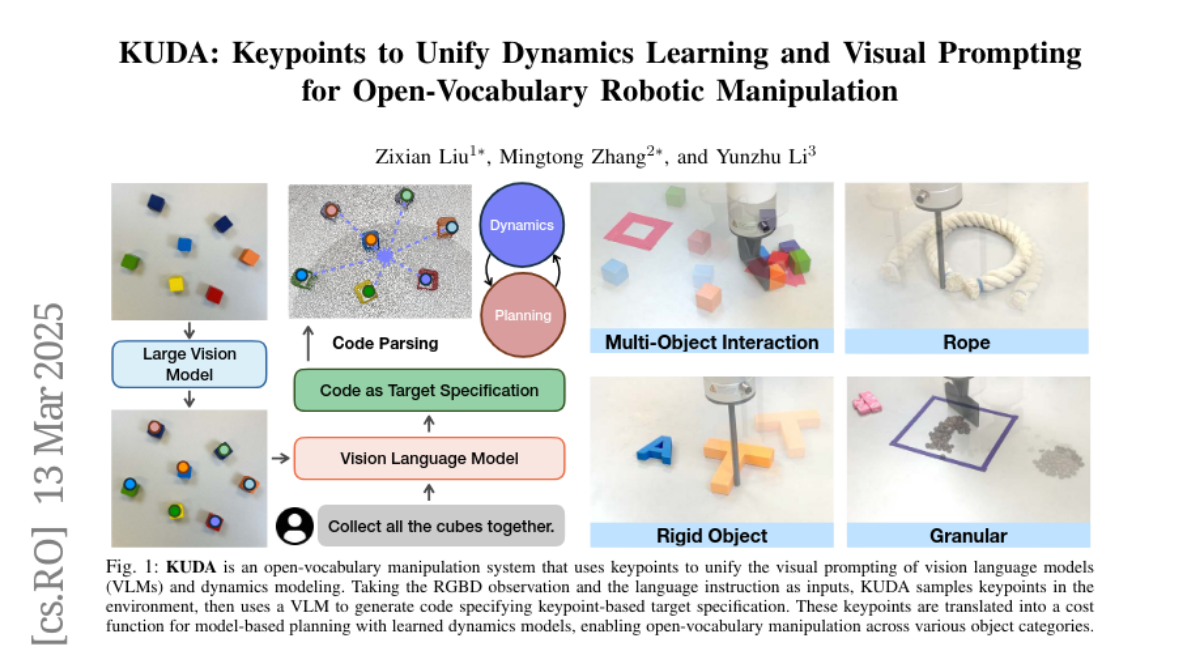

With the rapid advancement of large language models (LLMs) and vision-language models (VLMs), significant progress has been made in developing open-vocabulary robotic manipulation systems. However, many existing approaches overlook the importance of object dynamics, limiting their applicability to more complex, dynamic tasks. In this work, we introduce KUDA, an open-vocabulary manipulation system that integrates dynamics learning and visual prompting through keypoints, leveraging both VLMs and learning-based neural dynamics models. Our key insight is that a keypoint-based target specification is simultaneously interpretable by VLMs and can be efficiently translated into cost functions for model-based planning. Given language instructions and visual observations, KUDA first assigns keypoints to the RGB image and queries the VLM to generate target specifications. These abstract keypoint-based representations are then converted into cost functions, which are optimized using a learned dynamics model to produce robotic trajectories. We evaluate KUDA on a range of manipulation tasks, including free-form language instructions across diverse object categories, multi-object interactions, and deformable or granular objects, demonstrating the effectiveness of our framework. The project page is available at http://kuda-dynamics.github.io.
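
The step from a keypoint-based target specification to a planned trajectory can be illustrated with a minimal sketch. This is not KUDA's implementation: the target format (a dict from keypoint index to a 2D goal position), the function names, the `toy_dynamics` stand-in for a learned dynamics model, and the random-shooting planner are all assumptions chosen to keep the example small.

```python
import numpy as np

def keypoint_cost(keypoints, targets):
    """Sum of squared distances between selected keypoints and their goals.

    keypoints: (K, 2) array of keypoint positions.
    targets: dict {keypoint index: (x, y) goal}, a hypothetical spec format.
    """
    return sum(
        float(np.sum((keypoints[i] - np.asarray(goal)) ** 2))
        for i, goal in targets.items()
    )

def toy_dynamics(keypoints, action):
    """Stand-in for a learned dynamics model (assumption):
    every keypoint is translated rigidly by the 2D action."""
    return keypoints + action

def plan_random_shooting(keypoints, targets, dynamics,
                         horizon=5, num_samples=256, max_step=0.1, seed=0):
    """Sample action sequences, roll each out through the dynamics model,
    and return the sequence whose final state has the lowest cost."""
    rng = np.random.default_rng(seed)
    candidates = rng.uniform(-max_step, max_step,
                             size=(num_samples, horizon, 2))
    best_cost, best_actions = np.inf, None
    for actions in candidates:
        state = keypoints.copy()
        for action in actions:
            state = dynamics(state, action)
        cost = keypoint_cost(state, targets)
        if cost < best_cost:
            best_cost, best_actions = cost, actions
    return best_actions, best_cost

# Two keypoints on an object; the (hypothetical) spec moves keypoint 0.
keypoints = np.array([[0.0, 0.0], [0.1, 0.0]])
targets = {0: (0.3, 0.2)}
initial_cost = keypoint_cost(keypoints, targets)
actions, final_cost = plan_random_shooting(keypoints, targets, toy_dynamics)
print(f"cost before planning: {initial_cost:.3f}, after: {final_cost:.3f}")
```

In this sketch the cost depends only on keypoint positions, so the planner needs nothing from the dynamics model except predicted keypoints. A real system would replace `toy_dynamics` with a learned model and would likely use a stronger optimizer than random shooting.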