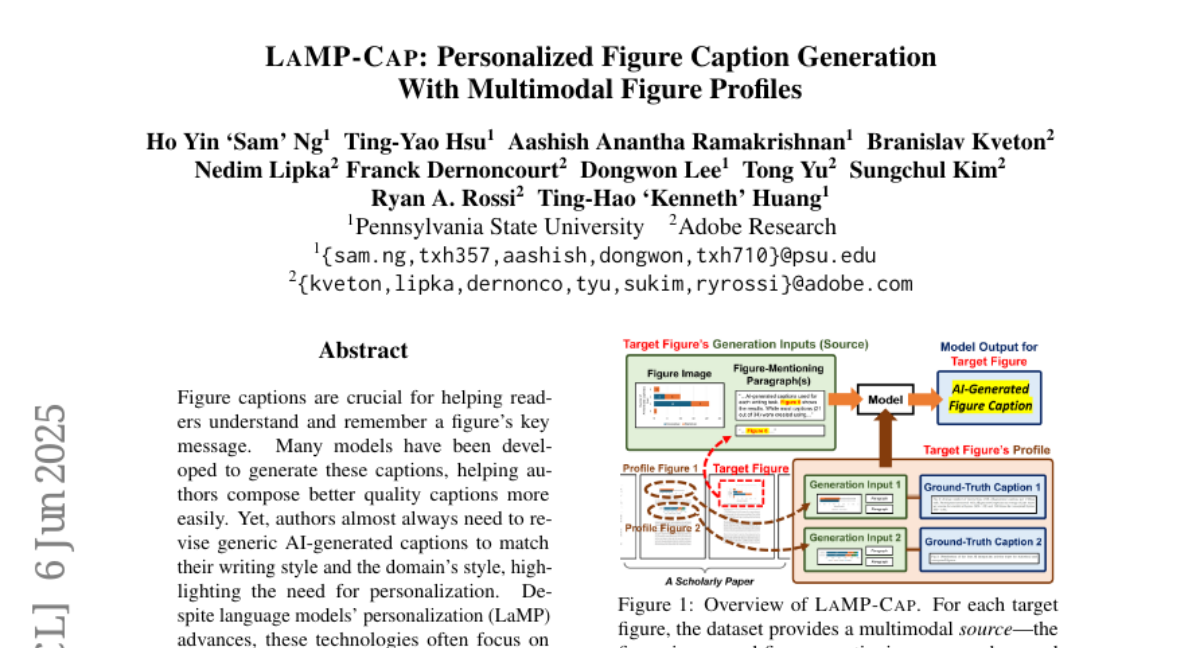

LaMP-Cap: Personalized Figure Caption Generation With Multimodal Figure Profiles

Ho Yin 'Sam' Ng, Ting-Yao Hsu, Aashish Anantha Ramakrishnan, Branislav Kveton, Nedim Lipka, Franck Dernoncourt, Dongwon Lee, Tong Yu, Sungchul Kim, Ryan A. Rossi, Ting-Hao 'Kenneth' Huang

2025-06-15

Summary

This paper talks about LaMP-Cap, a new approach that creates better, personalized captions for figures in documents by using different types of information from the figures and user profiles. It introduces a special dataset that helps AI learn how to make captions that fit different users' needs and preferences.

What's the problem?

The problem is that AI-generated captions for figures often miss important context or don’t match what specific users care about, making the captions less helpful or less clear. Existing captioning methods usually do not consider personal or detailed information about the figure or the person reading it.

What's the solution?

The solution was to gather multimodal figure profiles, meaning they collect different kinds of data about the figures such as images, text, and user preferences, and use this information to train AI models to generate personalized captions. By doing this, LaMP-Cap can produce captions that better describe the figures and suit the interests or background of different users.

Why it matters?

This matters because personalized and accurate captions help people understand figures more easily, which is important for learning, research, and communication. Improving AI-generated captions with personalized information can make reading scientific papers, reports, and other materials much clearer and more accessible for diverse audiences.

Abstract

LaMP-Cap introduces a dataset for personalized figure caption generation using multimodal profiles to improve the quality of AI-generated captions.