Language Models' Factuality Depends on the Language of Inquiry

Tushar Aggarwal, Kumar Tanmay, Ayush Agrawal, Kumar Ayush, Hamid Palangi, Paul Pu Liang

2025-02-27

Summary

This paper talks about how AI language models that work in multiple languages sometimes give different answers to the same factual questions depending on which language you ask in

What's the problem?

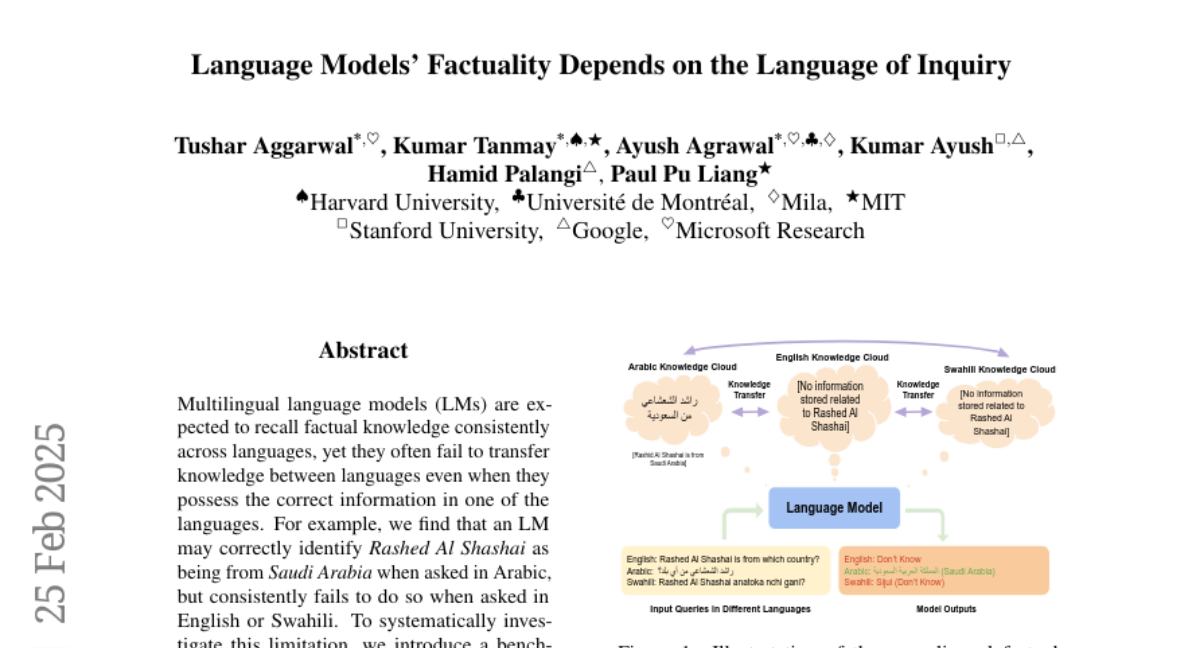

Even though these AI models are supposed to know facts and share them consistently no matter what language is used, they often fail to do this. For example, an AI might correctly say a person is from Saudi Arabia when asked in Arabic, but get it wrong when asked in English or Swahili. This inconsistency is a big problem for making reliable AI that works across languages

What's the solution?

The researchers created a test with 10,000 facts about countries in 13 different languages. They also made new ways to measure how well AI remembers facts and shares them across languages. They used these tools to test some of the best AI language models available

Why it matters?

This matters because as AI is used more around the world, we need it to give correct and consistent information no matter what language someone speaks. The study shows that current AI models have big problems with this, which could lead to misunderstandings or unfair treatment of people who speak different languages. By pointing out these issues and providing tools to test for them, the researchers are helping make AI better and more fair for everyone

Abstract

Multilingual language models (LMs) are expected to recall factual knowledge consistently across languages, yet they often fail to transfer knowledge between languages even when they possess the correct information in one of the languages. For example, we find that an LM may correctly identify Rashed Al Shashai as being from Saudi Arabia when asked in Arabic, but consistently fails to do so when asked in English or Swahili. To systematically investigate this limitation, we introduce a benchmark of 10,000 country-related facts across 13 languages and propose three novel metrics: Factual Recall Score, Knowledge Transferability Score, and Cross-Lingual Factual Knowledge Transferability Score-to quantify factual recall and knowledge transferability in LMs across different languages. Our results reveal fundamental weaknesses in today's state-of-the-art LMs, particularly in cross-lingual generalization where models fail to transfer knowledge effectively across different languages, leading to inconsistent performance sensitive to the language used. Our findings emphasize the need for LMs to recognize language-specific factual reliability and leverage the most trustworthy information across languages. We release our benchmark and evaluation framework to drive future research in multilingual knowledge transfer.