Large Language Models are Locally Linear Mappings

James R. Golden

2025-06-02

Summary

This paper shows that large language models (LLMs), the AI systems that generate text, can be understood as approximately linear when they make a prediction, at least locally, that is, in a small neighborhood of a given input.

What's the problem?

Language models are often treated as black boxes, which makes it hard to work out how they operate or why they make a particular prediction.

What's the solution?

The author shows that, for inference, the model can be approximated locally as a linear system. This gives a clearer picture of how it processes information and represents meaning, without altering the model or its predictions.

Why does it matter?

A linear view gives scientists and engineers a concrete handle for understanding, trusting, and possibly improving these systems, which could make them more transparent and reliable for the people who use them.

Abstract

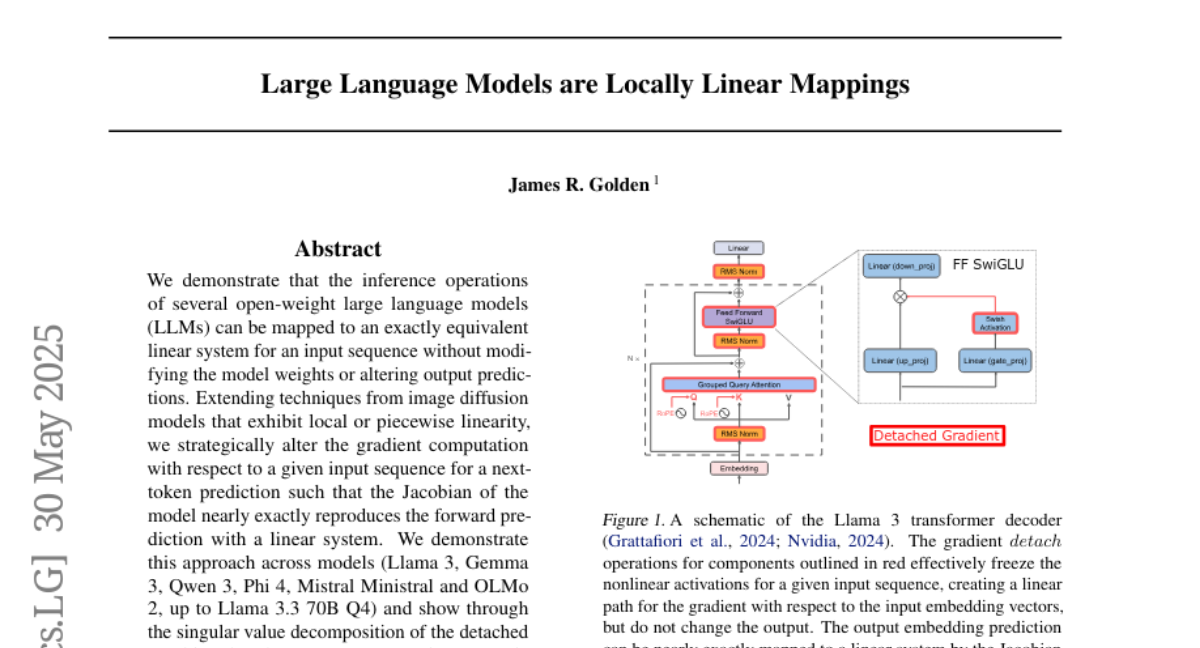

LLMs can be approximated as linear systems for inference, offering insights into their internal representations and semantic structures without altering the models or their predictions.
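
To make "locally linear" concrete, here is a toy sketch. It is not the paper's procedure and does not involve an LLM. It assumes a tiny two-layer ReLU network without bias terms, for which the local linear map can be written down by hand. All names (`relu_net`, `local_jacobian`, `W1`, `W2`) are hypothetical and chosen for illustration only. For this toy network, the Jacobian evaluated at an input `x` reproduces the output at `x` up to floating-point error, and the network itself is left untouched.

```python
import random


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def relu_net(W1, W2, x):
    """Toy network: y = W2 @ relu(W1 @ x), with no bias terms."""
    hidden = [max(0.0, a) for a in matvec(W1, x)]
    return matvec(W2, hidden)


def local_jacobian(W1, W2, x):
    """Jacobian of relu_net at x: J = W2 @ diag(gate) @ W1.

    gate[h] is 1 where hidden unit h is active at x and 0 elsewhere,
    so J depends on x but is a plain matrix once x is fixed.
    """
    gate = [1.0 if a > 0 else 0.0 for a in matvec(W1, x)]
    n_out, n_hidden, n_in = len(W2), len(W1), len(x)
    return [
        [sum(W2[o][h] * gate[h] * W1[h][i] for h in range(n_hidden))
         for i in range(n_in)]
        for o in range(n_out)
    ]


# Assumed sizes and random weights, for illustration only.
rng = random.Random(0)
W1 = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(8)]
W2 = [[rng.gauss(0, 1) for _ in range(8)] for _ in range(3)]
x = [rng.gauss(0, 1) for _ in range(4)]

y = relu_net(W1, W2, x)           # ordinary nonlinear forward pass
J = local_jacobian(W1, W2, x)     # linear map valid around this x
y_lin = matvec(J, x)              # prediction from the linear system alone

max_err = max(abs(a - b) for a, b in zip(y, y_lin))
print("max |y - J x| =", max_err)
```

The match is exact here only because the toy network is piecewise linear and bias-free. Real LLMs contain components such as softmax attention and normalization layers, so this sketch shows the idea of a local linear system rather than how it would be obtained for an actual model.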