Large Language Models Implicitly Learn to See and Hear Just By Reading

Prateek Verma, Mert Pilanci

2025-05-26

Summary

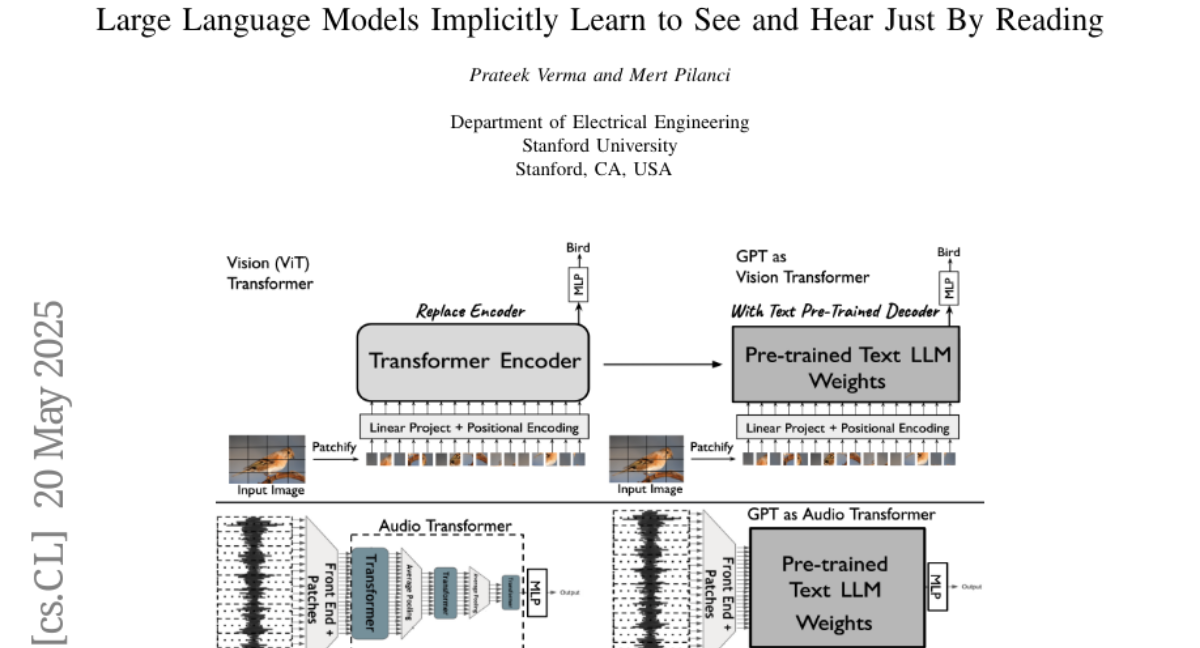

This paper shows that large language models, which are normally trained only to read and understand text, can pick up skills for recognizing images and sounds even though they have never been directly trained on those types of data.

What's the problem?

The common assumption is that an AI model must be trained separately on images, audio, and text to understand each kind of information, which takes a lot of extra data and effort.

What's the solution?

The researchers found that language models trained on huge amounts of text develop internal abilities that carry over to tasks involving images and sounds, such as classifying them, without needing extra training or adjustments.

Why does it matter?

This is important because it suggests that language models are more powerful and flexible than previously thought, which could make it much easier and faster to build AI systems that understand the world in more human-like ways.

Abstract

Auto-regressive LLMs trained only on text can develop internal capabilities for understanding images and audio, enabling them to perform classification tasks across these modalities without fine-tuning.