Large-scale Pre-training for Grounded Video Caption Generation

Evangelos Kazakos, Cordelia Schmid, Josef Sivic

2025-03-17

Summary

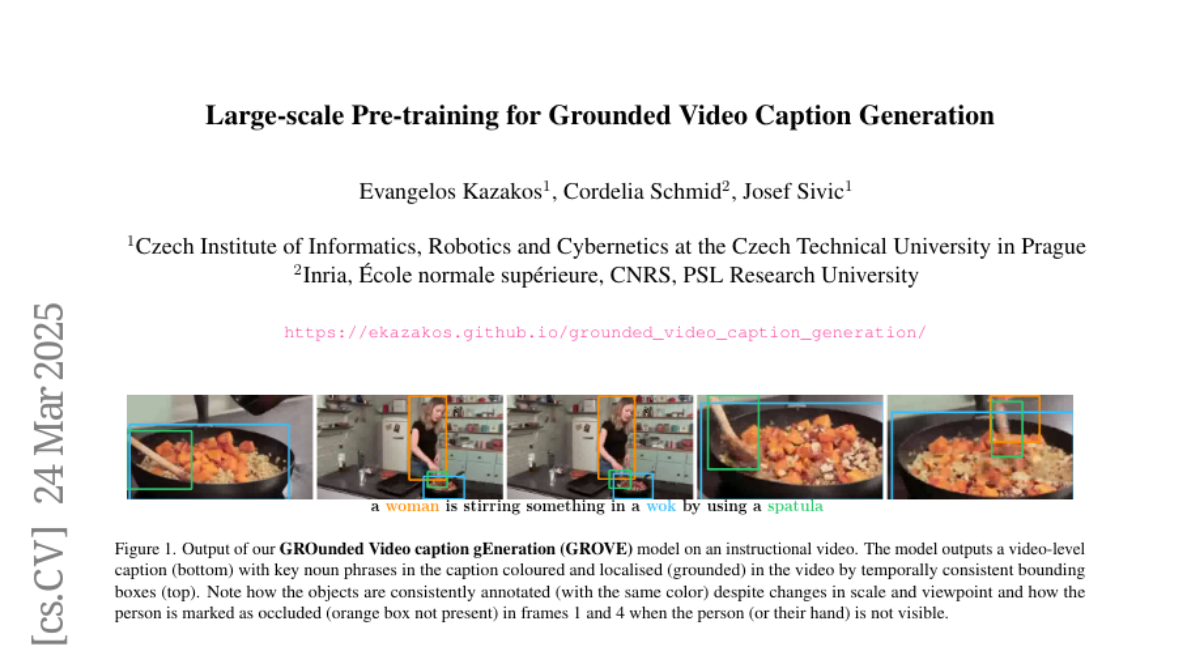

This paper presents a new method for generating video captions in which each object mentioned in the caption is localized with bounding boxes that follow it over time.

What's the problem?

It's challenging to automatically generate captions for videos that accurately describe the objects and their actions, especially if you want to pinpoint the objects' locations throughout the video.

What's the solution?

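The researchers developed an automatic annotation method that turns captions grounded in individual frames into consistent bounding boxes across the whole video. They applied it to HowTo100M to build a large pre-training dataset, HowToGround1M, and trained a captioning model, GROVE, on it. They also created iGround, a smaller, high-quality dataset of 3500 manually annotated videos, to fine-tune and test the model.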

Why does it matter?

This work advances video understanding by linking what a caption says to where it appears in the video. The model achieves state-of-the-art results on iGround, VidSTG, and ActivityNet-Entities, and grounded descriptions of this kind have applications in areas like video search and content creation.

Abstract

We propose a novel approach for captioning and object grounding in video, where the objects in the caption are grounded in the video via temporally dense bounding boxes. We introduce the following contributions. First, we present a large-scale automatic annotation method that aggregates captions grounded with bounding boxes across individual frames into temporally dense and consistent bounding box annotations. We apply this approach on the HowTo100M dataset to construct a large-scale pre-training dataset, named HowToGround1M. We also introduce a Grounded Video Caption Generation model, dubbed GROVE, and pre-train the model on HowToGround1M. Second, we introduce a new dataset, called iGround, of 3500 videos with manually annotated captions and dense spatio-temporally grounded bounding boxes. This allows us to measure progress on this challenging problem, as well as to fine-tune our model on this small-scale but high-quality data. Third, we demonstrate that our approach achieves state-of-the-art results on the proposed iGround dataset compared to a number of baselines, as well as on the VidSTG and ActivityNet-Entities datasets. We perform extensive ablations that demonstrate the importance of pre-training using our automatically annotated HowToGround1M dataset followed by fine-tuning on the manually annotated iGround dataset and validate the key technical contributions of our model.
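
To make the idea of aggregating per-frame grounded boxes into temporally consistent annotations more concrete, here is a minimal sketch in Python. It is not the authors' annotation method: it assumes a simple greedy linking rule based on matching labels and intersection-over-union (IoU), and the names `iou`, `link_boxes`, and `iou_threshold`, as well as the toy boxes, are hypothetical and chosen only for illustration.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def link_boxes(frames, iou_threshold=0.5):
    """Greedily link per-frame (label, box) detections into tracks.

    frames: list indexed by frame number; each item is a list of (label, box).
    Returns a list of tracks, each {"label": str, "boxes": {frame_idx: box}}.
    Assumption: a detection joins the same-label track whose most recent box
    overlaps it most, provided the IoU reaches iou_threshold.
    """
    tracks = []
    for t, detections in enumerate(frames):
        used = set()  # tracks already extended (or created) in this frame
        for label, box in detections:
            best, best_iou = None, iou_threshold
            for i, track in enumerate(tracks):
                if i in used or track["label"] != label:
                    continue
                last_t = max(track["boxes"])  # most recent frame in the track
                overlap = iou(track["boxes"][last_t], box)
                if overlap >= best_iou:
                    best, best_iou = i, overlap
            if best is None:
                # No sufficiently overlapping track: start a new one.
                tracks.append({"label": label, "boxes": {t: box}})
                used.add(len(tracks) - 1)
            else:
                tracks[best]["boxes"][t] = box
                used.add(best)
    return tracks


# Toy input: three frames with per-frame labelled boxes (made-up values).
frames = [
    [("knife", (10, 10, 50, 50)), ("onion", (100, 100, 160, 160))],
    [("knife", (12, 11, 52, 51)), ("onion", (102, 100, 162, 160))],
    [("knife", (15, 12, 55, 52))],
]
tracks = link_boxes(frames)
for track in tracks:
    print(track["label"], sorted(track["boxes"]))
```

On this toy input the knife boxes from all three frames end up in one track and the onion boxes from the first two frames in another. A real pipeline would also need to handle occlusion, label disagreement between frames, and box smoothing, none of which this sketch attempts.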