LazyReview: A Dataset for Uncovering Lazy Thinking in NLP Peer Reviews

Sukannya Purkayastha, Zhuang Li, Anne Lauscher, Lizhen Qu, Iryna Gurevych

2025-04-16

Summary

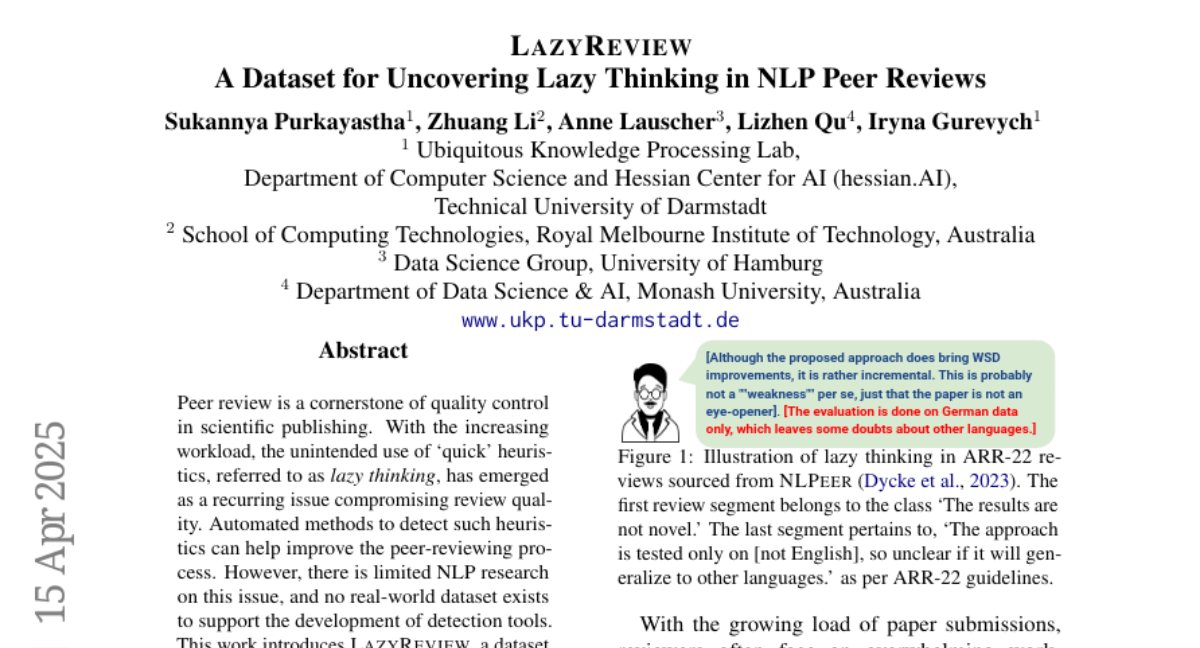

This paper introduces LazyReview, a new dataset for spotting 'lazy thinking' in peer reviews: cases where reviewers rely on quick, shallow reasoning instead of carefully analyzing the work.

What's the problem?

Peer review is supposed to ensure quality in scientific publishing, but reviewers with heavy workloads sometimes rely on shortcuts or superficial judgments. This lazy thinking can lower the quality of reviews and let weak research slip through.

What's the solution?

The researchers built the LazyReview dataset by collecting sentences from real peer reviews and labeling them with different types of lazy thinking. They found that current language models have trouble detecting these patterns without additional training. Instruction-based fine-tuning on the dataset made the models much better at detecting lazy thinking. The researchers also showed that when reviewers used feedback based on these annotations, their reviews became more detailed and helpful.
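As a rough illustration of what instruction-based fine-tuning data can look like, the sketch below turns one labeled review sentence into a prompt and completion pair. It is a minimal sketch under stated assumptions, not the authors' pipeline: the label names, the `ReviewSentence` type, the prompt wording, and the `prompt`/`completion` field names are all hypothetical and chosen only for the example.

```python
from dataclasses import dataclass

# Hypothetical label set for illustration only; the dataset's actual
# lazy-thinking categories are not listed in this summary.
LABELS = ["results_not_surprising", "results_not_novel", "none"]


@dataclass
class ReviewSentence:
    """One annotated review sentence (hypothetical schema)."""
    text: str
    label: str


def to_instruction_example(item: ReviewSentence) -> dict:
    """Format a labeled sentence as an instruction-tuning record."""
    if item.label not in LABELS:
        raise ValueError(f"unknown label: {item.label}")
    options = ", ".join(LABELS)
    prompt = (
        "Classify the lazy-thinking category of the review sentence.\n"
        f"Options: {options}\n"
        f"Sentence: {item.text}\n"
        "Answer:"
    )
    # The completion is the gold label the model is trained to produce.
    return {"prompt": prompt, "completion": " " + item.label}


example = to_instruction_example(
    ReviewSentence("The results are not surprising.", "results_not_surprising")
)
print(example["prompt"])
print(example["completion"])
```

Records in this shape could then be passed to any standard supervised fine-tuning setup; the choice of model and training library is left open here.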

Why does it matter?

Detecting lazy thinking can lead to better peer reviews and higher standards in scientific research. With tools and training based on LazyReview, both humans and AI can help catch shallow reasoning, making the review process fairer and more reliable.

Abstract

A dataset called LazyReview is introduced to improve the detection of lazy thinking in peer reviews, showing that instruction-based fine-tuning of LLMs enhances their ability to identify such heuristics.