Learning Few-Step Diffusion Models by Trajectory Distribution Matching

Yihong Luo, Tianyang Hu, Jiacheng Sun, Yujun Cai, Jing Tang

2025-03-17

Summary

This paper introduces Trajectory Distribution Matching (TDM), a distillation method that turns a many-step diffusion model into a generator that needs only a few sampling steps. It covers both text-to-image and text-to-video generation.

What's the problem?

Diffusion models are slow to sample from, which makes deployment costly. Existing distillation methods can cut sampling to as few as one step, but they fall short on complex tasks such as text-to-image generation. Few-step generation balances speed and quality better, yet current approaches face a trade-off: distribution matching lacks flexibility for multi-step sampling, while trajectory matching often yields suboptimal image quality.

What's the solution?

TDM combines the two ideas. A data-free score distillation objective aligns the student's sampling trajectory with the teacher's at the distribution level. A sampling-steps-aware objective then decouples the learning targets across different steps, which makes the number of sampling steps more adjustable. The resulting student supports deterministic sampling and flexible multi-step adaptation.

Why it matters?

TDM outperforms existing methods on backbones such as SDXL and PixArt-alpha at a much lower training cost. The 4-step generator distilled from PixArt-alpha outperforms its teacher on real user preference at 1024 resolution after 500 iterations and 2 A800 hours, about 0.01% of the teacher's training cost. Applied to text-to-video, TDM with only 4 NFE raises the VBench total score from the 80.91 of its teacher, CogVideoX-2B, to 81.65.

Abstract

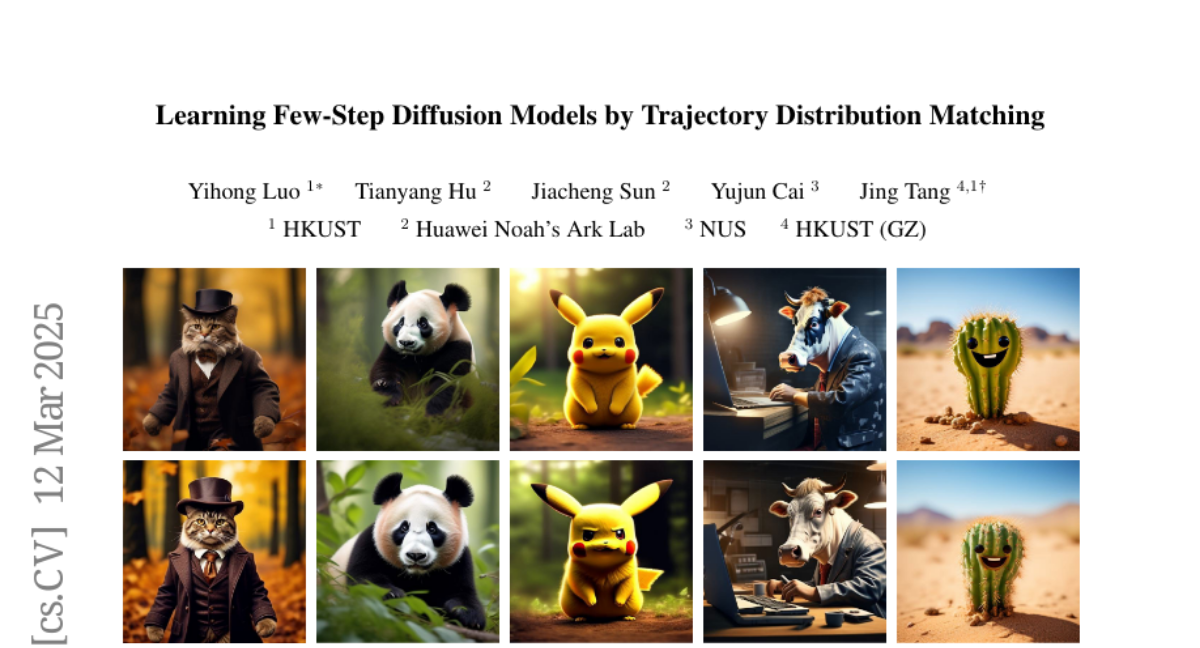

Accelerating diffusion model sampling is crucial for efficient AIGC deployment. While diffusion distillation methods -- based on distribution matching and trajectory matching -- reduce sampling to as few as one step, they fall short on complex tasks like text-to-image generation. Few-step generation offers a better balance between speed and quality, but existing approaches face a persistent trade-off: distribution matching lacks flexibility for multi-step sampling, while trajectory matching often yields suboptimal image quality. To bridge this gap, we propose learning few-step diffusion models by Trajectory Distribution Matching (TDM), a unified distillation paradigm that combines the strengths of distribution and trajectory matching. Our method introduces a data-free score distillation objective, aligning the student's trajectory with the teacher's at the distribution level. Further, we develop a sampling-steps-aware objective that decouples learning targets across different steps, enabling more adjustable sampling. This approach supports both deterministic sampling for superior image quality and flexible multi-step adaptation, achieving state-of-the-art performance with remarkable efficiency. Our model, TDM, outperforms existing methods on various backbones, such as SDXL and PixArt-alpha, delivering superior quality and significantly reduced training costs. In particular, our method distills PixArt-alpha into a 4-step generator that outperforms its teacher on real user preference at 1024 resolution. This is accomplished with 500 iterations and 2 A800 hours -- a mere 0.01% of the teacher's training cost. In addition, our proposed TDM can be extended to accelerate text-to-video diffusion. Notably, TDM can outperform its teacher model (CogVideoX-2B) by using only 4 NFE on VBench, improving the total score from 80.91 to 81.65. Project page: https://tdm-t2x.github.io/
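The abstract does not spell out the sampler, so the following is only a minimal sketch of what deterministic few-step sampling with 4 NFE (network function evaluations) can look like. It assumes a generic DDIM-style update, not the authors' method. The cosine schedule in `alpha_sigma`, the closed-form `toy_denoiser` standing in for a distilled student network, and all function names are hypothetical.

```python
import math


def alpha_sigma(t):
    """Assumed variance-preserving schedule (alpha^2 + sigma^2 = 1), t in [0, 1]."""
    return math.cos(0.5 * math.pi * t), math.sin(0.5 * math.pi * t)


def toy_denoiser(x_t, t, mean=2.0, std=0.5):
    """Hypothetical stand-in for a student network.

    Returns the exact posterior mean E[x_0 | x_t] for 1-D data drawn
    from N(mean, std^2) under x_t = alpha * x_0 + sigma * eps.
    """
    a, s = alpha_sigma(t)
    var_t = a * a * std * std + s * s
    return mean + (a * std * std / var_t) * (x_t - a * mean)


def few_step_sample(x_T, num_steps=4, denoiser=toy_denoiser):
    """Deterministic DDIM-style sampling using `num_steps` denoiser calls."""
    # Evenly spaced timesteps from 1 (pure noise) down to 0 (data).
    ts = [1.0 - i / num_steps for i in range(num_steps + 1)]
    x, nfe = x_T, 0
    for t, t_next in zip(ts[:-1], ts[1:]):
        a, s = alpha_sigma(t)
        x0_hat = denoiser(x, t)        # one function evaluation
        nfe += 1
        eps_hat = (x - a * x0_hat) / s  # noise implied by the x_0 estimate
        a_next, s_next = alpha_sigma(t_next)
        # No fresh noise is injected, so the map from x_T to x_0 is deterministic.
        x = a_next * x0_hat + s_next * eps_hat
    return x, nfe


if __name__ == "__main__":
    sample, nfe = few_step_sample(0.3)
    print(f"sample={sample:.4f}, NFE={nfe}")
```

Calling `few_step_sample(0.3)` returns a sample together with an NFE count of 4, and the same starting noise always yields the same sample, which is what deterministic sampling means in this sketch. Changing `num_steps` changes the NFE budget without touching the denoiser.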