Learning to Highlight Audio by Watching Movies

Chao Huang, Ruohan Gao, J. M. F. Tsang, Jan Kurcius, Cagdas Bilen, Chenliang Xu, Anurag Kumar, Sanjeel Parekh

2025-05-21

Summary

This paper introduces an AI system that learns which sounds to emphasize in a video by paying attention to what's happening on screen, so that the audio and the visuals work together for the viewer.

What's the problem?

It's hard for computers to know which sounds should be more noticeable in a movie scene, especially since the important sounds often depend on the visuals, like footsteps in a dark hallway or a phone ringing during a quiet moment.

What's the solution?

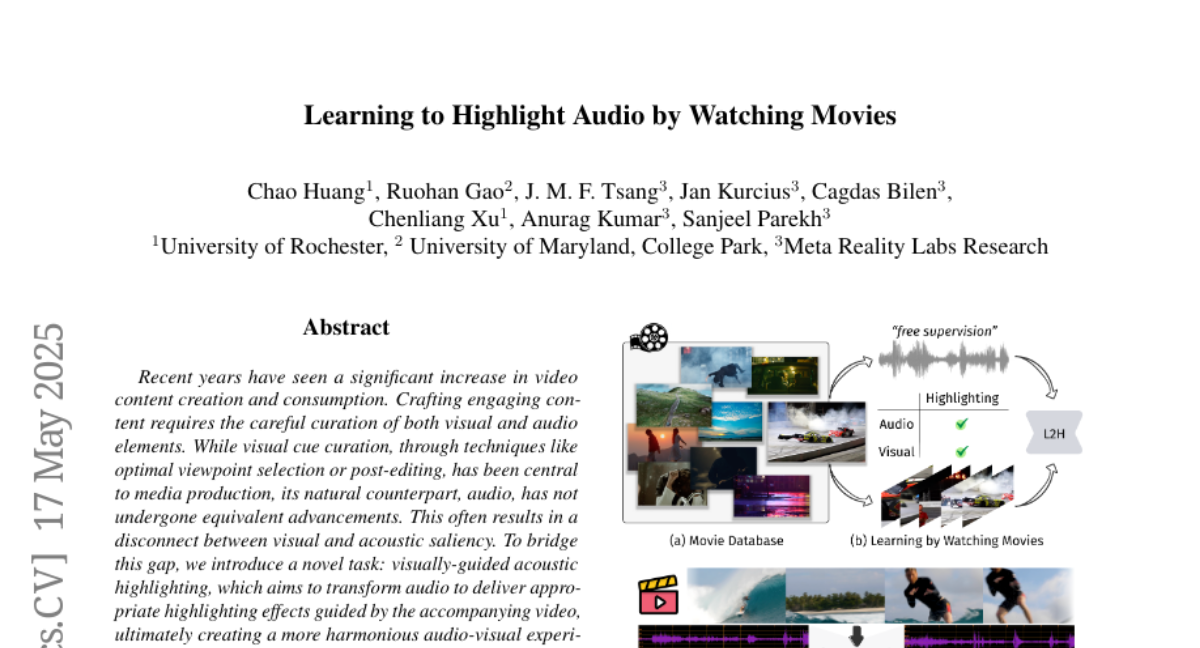

The researchers built a transformer-based framework that looks at the video and the audio at the same time. They trained it on a new dataset made from movies, along with pseudo-data they generated to help the system learn which sounds to highlight based on what it sees.
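The summary does not describe how the extra training data is generated. As a rough illustration only, one plausible scheme starts from audio that is already well balanced, splits it into stems, and remixes those stems with random gains. The rebalanced mix becomes the model input and the original balance becomes the training target. The sketch below assumes that scheme; the function name `make_pseudo_pair`, the stem names, and the gain range are all hypothetical and are not taken from the paper.

```python
import random


def make_pseudo_pair(stems, gain_range_db=(-12.0, 12.0), seed=None):
    """Return (degraded_mix, target_mix, gains) built from separated stems.

    stems: dict mapping a stem name (e.g. "speech") to a list of float
    samples, all of the same length. The stem names and the default gain
    range are illustrative assumptions, not values from the paper.
    """
    rng = random.Random(seed)
    length = len(next(iter(stems.values())))
    if any(len(samples) != length for samples in stems.values()):
        raise ValueError("all stems must have the same number of samples")

    # Target: the original balance, i.e. the plain sum of the stems.
    target = [sum(stems[name][i] for name in stems) for i in range(length)]

    # Input: the same stems, each scaled by a random gain drawn in decibels
    # and converted to a linear amplitude factor.
    gains = {name: 10 ** (rng.uniform(*gain_range_db) / 20) for name in stems}
    degraded = [
        sum(gains[name] * stems[name][i] for name in stems)
        for i in range(length)
    ]
    return degraded, target, gains


# Toy three-sample stems, just to show the call shape.
stems = {
    "speech": [0.1, 0.2, -0.1],
    "music": [0.05, 0.0, 0.05],
    "effects": [0.0, 0.3, 0.0],
}
degraded, target, gains = make_pseudo_pair(stems, seed=0)
```

A model trained on such pairs would see the degraded mix together with the video frames and be asked to recover the target. How the visual signal is used is left out of this sketch.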

Why does it matter?

This matters because it can make movies and videos more engaging and easier to understand, especially for people who rely on sound cues, like those with visual impairments, or anyone who wants a richer audio-visual experience.

Abstract

A transformer-based multimodal framework addresses the challenge of visually-guided acoustic highlighting, creating harmonious audio-visual experiences using a newly introduced dataset and pseudo-data generation process.